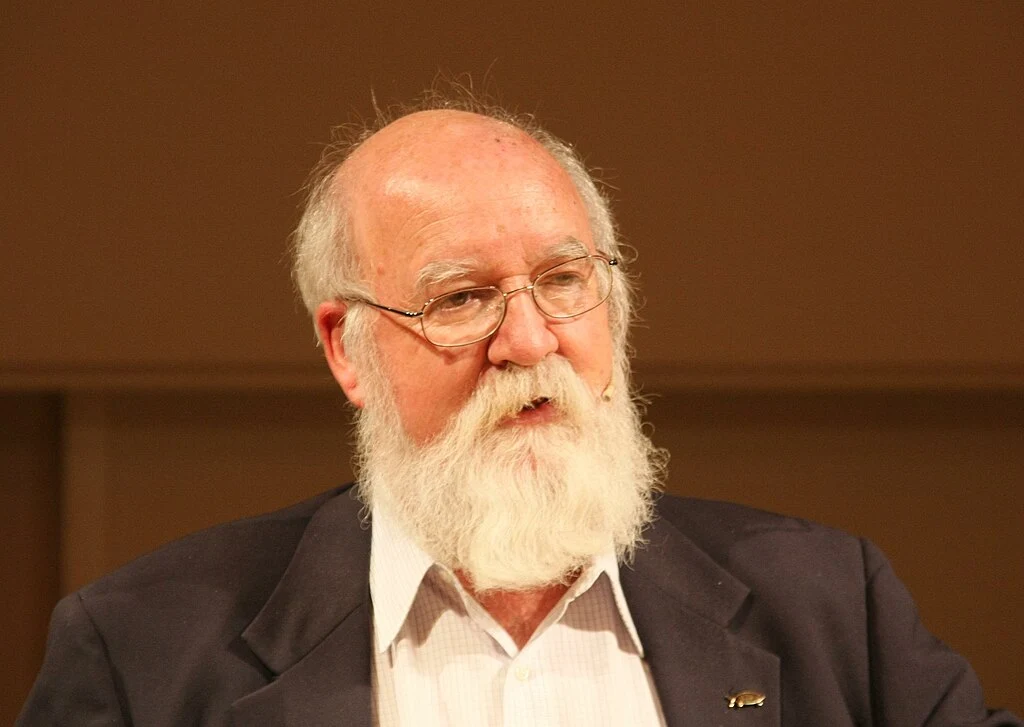

Some thoughts on Daniel Dennett’s ideas

Yesterday Daniel Dennett died. He was 82, about the same age as my father when he died a few years ago. I think I've mentioned before that the first writer I read on consciousness was Susan Blackmore. But I know Dennett wasn't far behind, likely based on Blackmore's positive discussions of his work, but also … Continue reading Some thoughts on Daniel Dennett’s ideas

Fallout

Fallout, like many recent TV shows, demonstrates that the old rule of video game adaptations always being awful is obsolete. At first Fallout seems similar to a lot of other post-apocalyptic shows. There's been a nuclear war and the world is a wasteland. Life on the surface is a brutal battle for survival, even two hundred … Continue reading Fallout

Halo

Shows based on video games have gotten better in recent years, and Halo seems to fit this trend. I never got into the games, so my knowledge of the premise only comes from the show. Humanity is at war with an alien civilization known as the "Covenant". The Covenant seems determined to eradicate humanity for … Continue reading Halo

3 Body Problem

The TV show 3 Body Problem is an adaptation of Liu Cixin's novel The Three-Body Problem. I read Liu's book several years ago, back when it was up for the Hugo Award, which it eventually won. The book explores a lot of ideas, such as the difficulty in making predictions with inherently chaotic systems, … Continue reading 3 Body Problem

Blade (Inverted Frontier Book 4)

Blade is the penultimate book in Linda Nagata's Inverted Frontier series. I've written about this series many times. It's a sequel to her earlier series: The Nanotech Succession. These books describe a civilization that has mastered nanotechnology, to the extent that mind uploading and new bodies on demand are possible, so everyone is essentially immortal. … Continue reading Blade (Inverted Frontier Book 4)

Dune: Part Two

This week I saw the second part of Denis Villeneuve's adaptation of Dune. You've probably seen the glowing recommendations. I'll confirm that the movie is very good, a visually stunning experience. I certainly think it cements Villeneuve's adaptation as the definitive cinematic treatment of Frank Herbert's novel. I recommend seeing it, although it's worth first … Continue reading Dune: Part Two

Avatar: The Last Airbender: the live actioning

Yesterday I watched the live-action adaptation of Avatar: The Last Airbender. Live-action remakes of anime (or anime-inspired) shows have been a dodgy proposition over the years, with the 2010 attempt for this franchise being a stark example. I actually enjoy most of them, but historically I'm an outlier. (I should note that … Continue reading Avatar: The Last Airbender: the live actioning

How to study reality

SMBC on how to get access to the secrets of reality. Click through for source and red button caption: https://www.smbc-comics.com/comic/secrets-2 I guess this is philosophical comic sharing week for me. Somewhat related, I've been slowly working my way through Sean Carroll's The Biggest Ideas in the Universe: space, time, and motion. I know a lot … Continue reading How to study reality

Existential Comics: the philosophy of magic

What is the difference between magic and science? It's been a while since I shared an Existential Comic. This one gets at a question we've discussed before, although it's been several years. What exactly is the distinction between the physical and non-physical, in this case between science and magic? Credit: https://existentialcomics.com/comic/537 Corey Mohler, the author, has a … Continue reading Existential Comics: the philosophy of magic

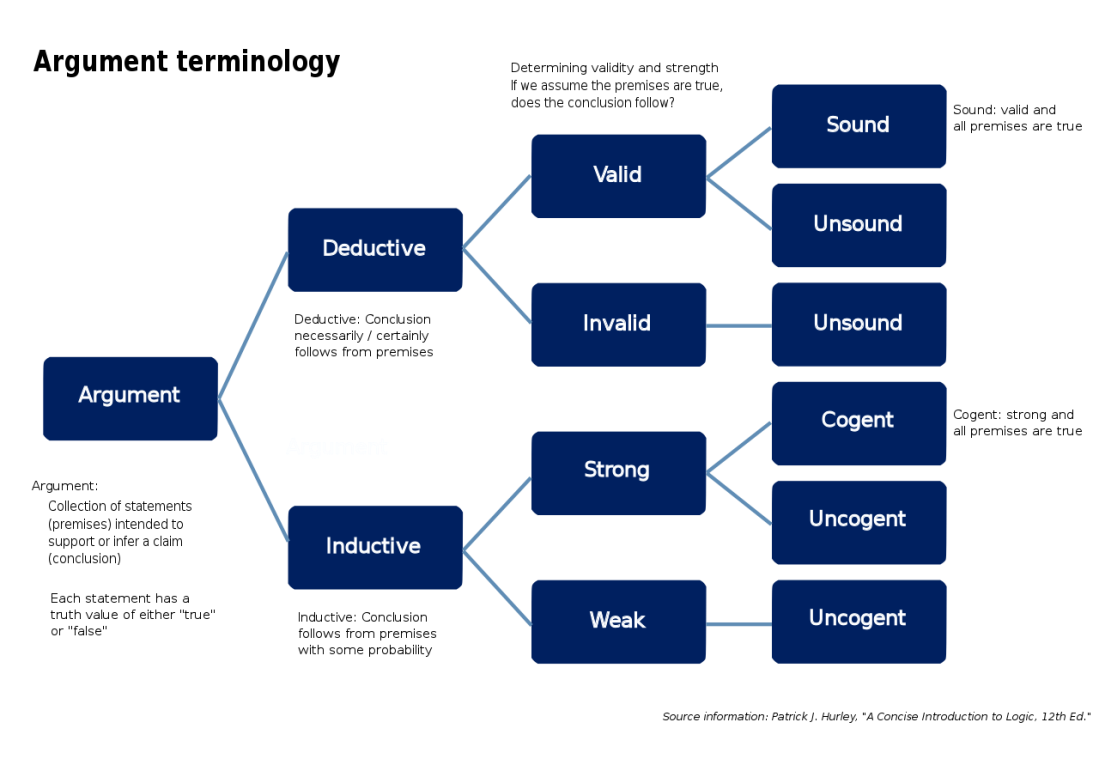

Reasons and conclusions

I think the reasons someone reaches a conclusion are at least as important as the conclusion itself. Recently someone I know changed their mind about a topic. Where they had previously disagreed with me on something, they now agree. Which was great, except I found their reasons for the change problematic. It reminded me that I often have … Continue reading Reasons and conclusions