The attitude of physicalism

Spurred by conversations a few weeks ago, I've been thinking about physicalism, the stance that everything is physical, that the physical facts fix all the facts. A long-popular attack against this view has been to argue that it's incoherent, since we can't give a solid definition of what "physical" means. And so physicalism seems …

Category: Philosophy

Chill about metaphysics

This week I had to block a couple of people on different platforms. Neither seemed able to make their point without lacing in insults. One seemed to be on a mission to make me feel as bad about my outlook as possible. The disagreements were on purely metaphysical grounds, physicalism vs non-physicalism. And seem to …

If usefulness isn’t a guide to what’s real, what is?

Seems like I've been writing a lot about quantum mechanics lately. Apparently so have a lot of other people. One thing that keeps coming up is the reality or non-reality of the quantum wave function. Raoni Arroyo and Jonas R. Becker Arenhart argue for non-reality: Quantum mechanics works, but it doesn't describe reality: Predictive power …

Why I’m a reductionist

The SEP article on scientific reductionism notes that the etymology of the word "reduction" is "to bring back" something to something else. So in a methodological sense, reduction is bringing one theory or ontology back to a simpler or more fundamental theory or ontology. The Wikipedia entry on reductionism identifies different kinds: ontological, methodological, and …

Is quantum immortality a real thing?

In discussions about the Everett interpretation of quantum mechanics, one of the concerns I often see expressed is about the perverse low-probability outcomes that would exist in the quantum multiverse. For example, if every quantum outcome is reality, then in some branches of the wave function, entropy has never increased. In some branches, quantum …

Many-worlds without necessarily many worlds?

IAI has a brief interview with David Deutsch on his advocacy for the many-worlds interpretation of quantum mechanics. (Warning: possible paywall.) Deutsch has a history of showing little patience with other interpretations, and this interview is no different. A lot of the discussion centers around his advocacy for scientific realism, the idea that science is …

Why I’m an ontic structural realist

Scientific realism vs instrumentalism

A long-standing debate in the philosophy of science is about what our best scientific theories tell us. Some argue that they reveal true reality, that is, they are real. Others, that scientific theories are only useful prediction frameworks, instruments useful in the creation of technology, but that taking any further …

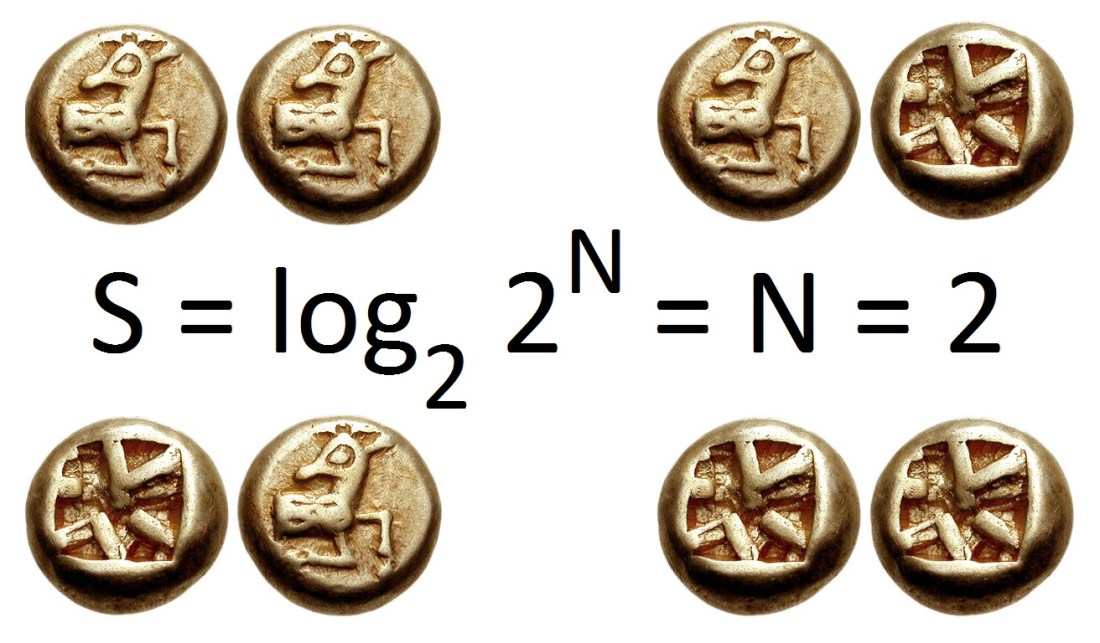

Entropy transformers

What is the relationship between information, causation, and entropy? The other day, I was reading a post from Corey S. Powell on how we are all ripples of information. I found it interesting because it resonated with my own understanding of information (i.e. it flattered my biases). We both seem to see information as something …

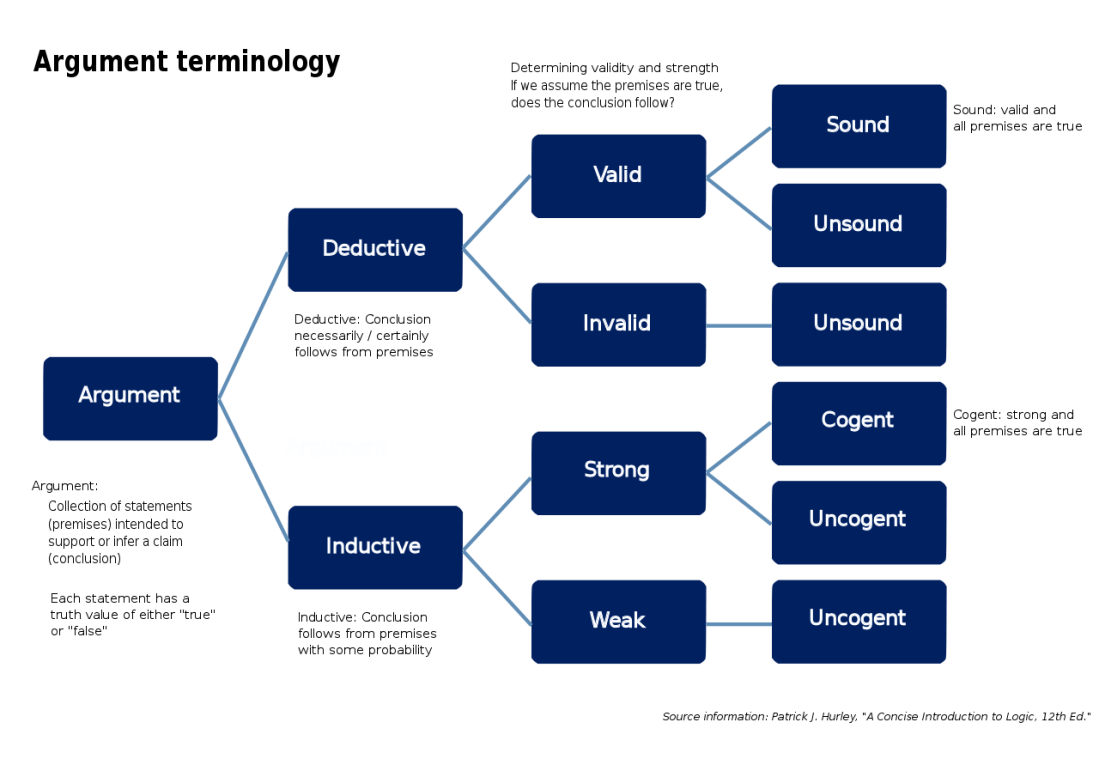

Reasons and conclusions

I think the reasons someone reaches a conclusion are at least as important as the conclusion itself. Recently someone I know changed their mind about a topic. Where they had previously disagreed with me on something, they now agree. Which was great, except, I found their reasons for the change problematic. It reminded me that I often have …

Causal completeness

It seems like theories that are causally complete are better than ones with gaps. In thinking about this, I'm reminded of a Psyche article I shared a few years ago on fostering an open mind. One of their pieces of advice resonates with an outlook I've had for some time. If embarking on a full-on explanation …