A new paper has been getting some attention. It makes the case for biological computation. (This is a link to a summary, but there's a link to the actual paper at the bottom of that article.) Characterizing the debate between computational functionalism and biological naturalism as one between camps that are hopelessly dug in, the authors propose … Continue reading Biological computation and the nature of software

Tag: Artificial intelligence

Why I still think Turing’s insight matters

Nature has an article noting that language models have killed "the Turing test" and asking if we even need a replacement. I think the article makes some good points. But a lot of the people quoted seem to take the opportunity to dismiss Turing's whole idea. I think this is a mistake. First, we need … Continue reading Why I still think Turing’s insight matters

Does consciousness require biology?

Ned Block has a new paper out, for which he shared a time-limited link on Bluesky. He argues in the paper that the "meat neutral" computational functionalism inherent in many theories of consciousness neglects what he sees as a compelling alternative: that the subcomputational biological realizers underlying computational processes in the brain are necessary … Continue reading Does consciousness require biology?

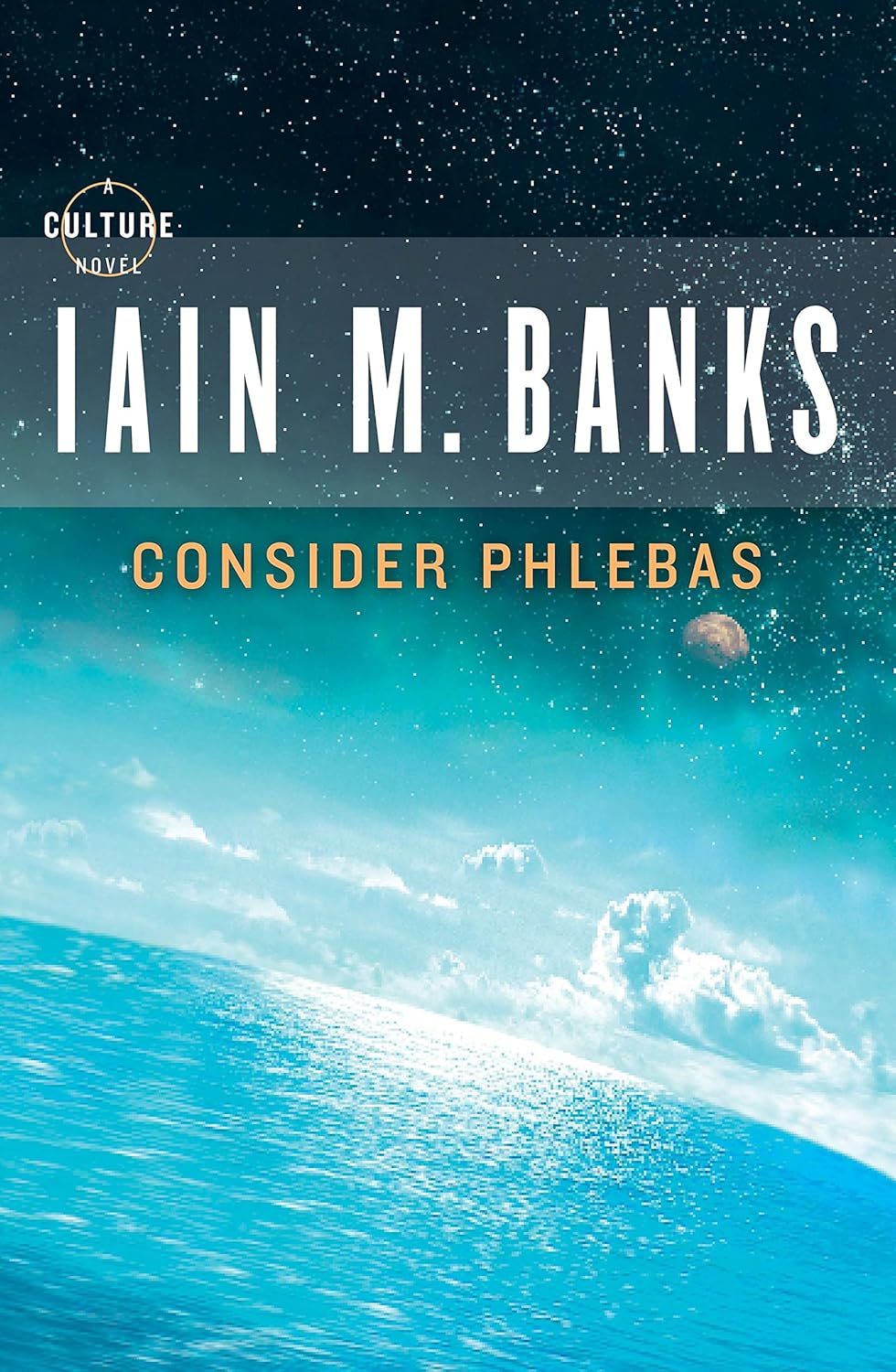

A reread of Consider Phlebas

Iain Banks' Culture setting is probably the closest thing to outright paradise in science fiction. It's an interstellar post-scarcity techno-anarchist utopia, where sentient machines do all the work and the humans hang around engaging in hobbies or other hedonistic pursuits. Some do choose to work, but no one has to, since money isn't needed. … Continue reading A reread of Consider Phlebas

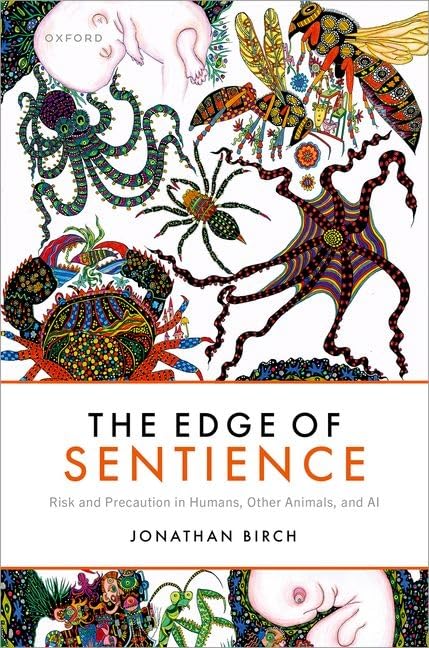

The edge of sentience in AI

This is the fourth in a series of posts on Jonathan Birch's book, The Edge of Sentience. This one covers the section on artificial intelligence. Birch begins the section by acknowledging how counterintuitive the idea might seem that sentience could exist in systems we build, ones that aren't alive and have no body. But he urges us to … Continue reading The edge of sentience in AI

The semantic indeterminacy of sentience

I'm currently reading Jonathan Birch's The Edge of Sentience, a book focusing on the boundary between systems that can feel pleasure or pain, and those that can't, and the related ethics. While this is a subject I'm interested in, I'm leery of the activism the animal portions of it attract. I have nothing in particular … Continue reading The semantic indeterminacy of sentience

AI intelligence, consciousness, and sentience

Can the possibility of AI consciousness be ruled out? Anil Seth has a new preprint on the question of AI consciousness. Seth is skeptical about AI consciousness, although he admits that he can't rule it out completely. He spends some time attacking computational functionalism, the view that mental states are functional in nature, that they … Continue reading AI intelligence, consciousness, and sentience

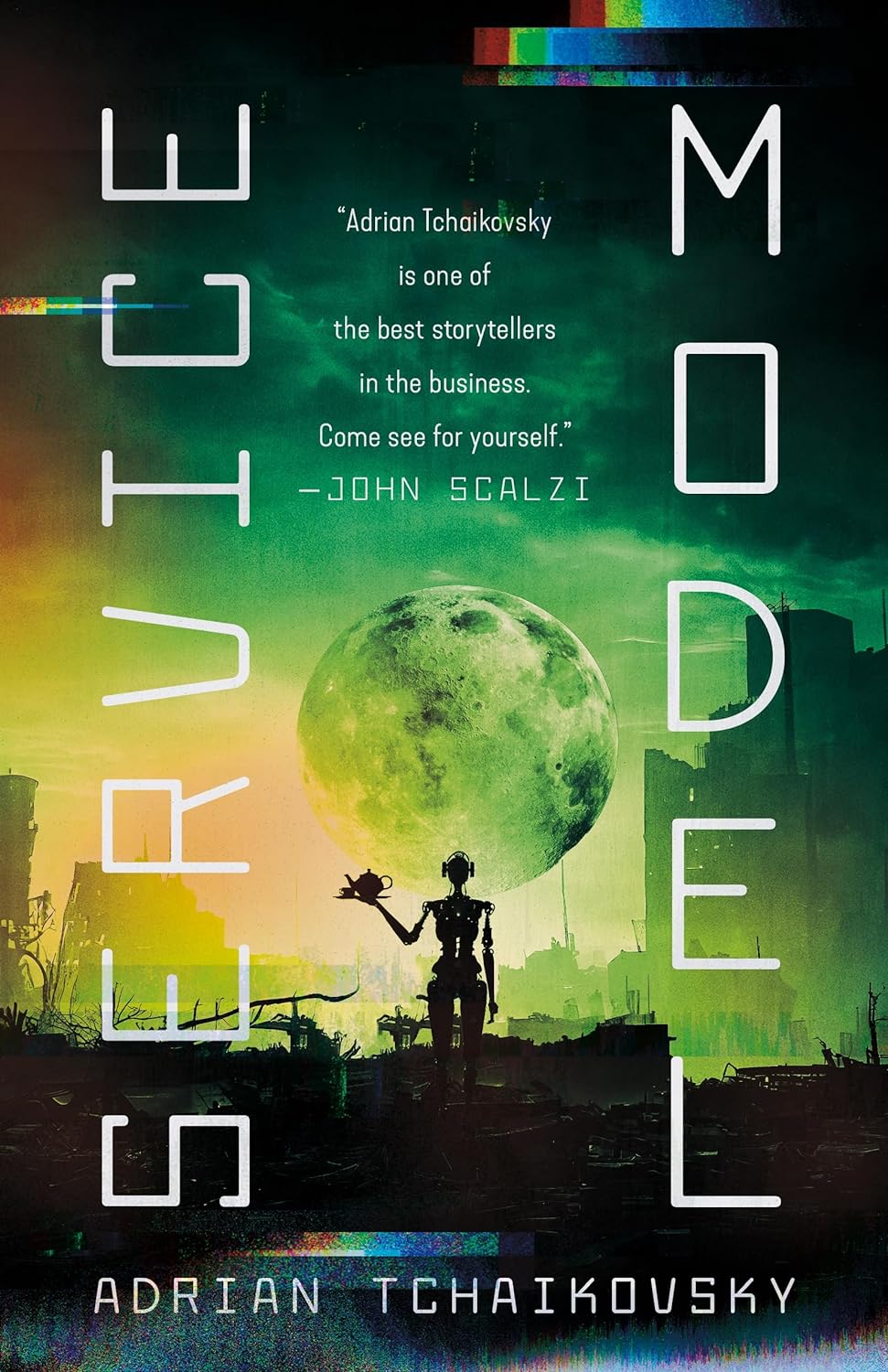

Service Model

Adrian Tchaikovsky's Service Model is a fresh take on what can go wrong in a world of robots and AI. Charles is a robot valet. He works in a manor performing personal services for his human master, checking his travel arrangements, laying out his clothes, shaving him, serving meals, etc. However, it appears to have … Continue reading Service Model

Is AI consciousness an urgent issue?

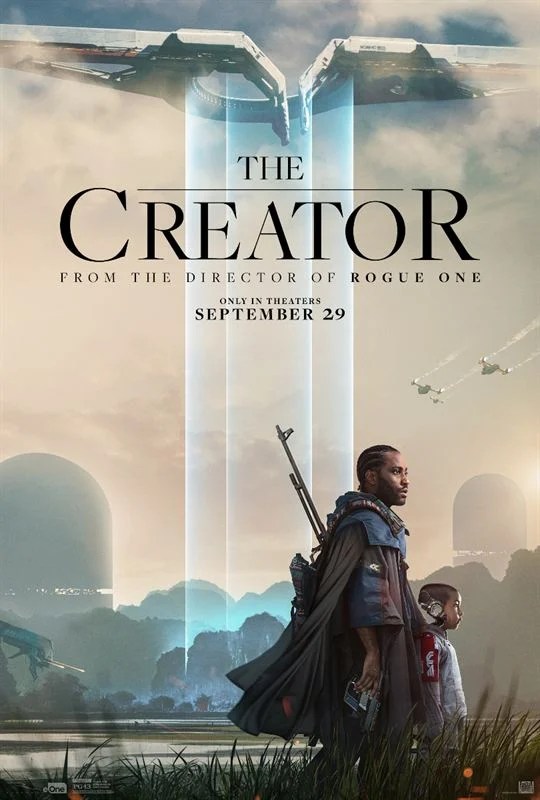

AI consciousness seems like an easier thing to ponder when you approach it from a functionalist viewpoint. Sunday I watched the movie The Creator. The premise is that, a few decades in the future, we've managed to create sentient robots. At first, all seems well, with them being a boon for humanity. Then a nuclear bomb goes off in … Continue reading Is AI consciousness an urgent issue?

How to tell if AI is conscious

An interesting preprint was released this week: Consciousness in Artificial Intelligence: Insights from the Science of Consciousness. The paper is long and has sixteen authors, although two, Patrick Butlin and Robert Long, are flagged as the lead authors. The list of contributors includes Jonathan Birch, Stephen Fleming, Grace Lindsay, Matthias Michel, and Eric Schwitzgebel, all … Continue reading How to tell if AI is conscious