In the last post, I discussed Amanda Gefter's critique of Vlatko Vedral's view that observers have no special role in reality. Conveniently, Vedral published an article at IAI discussing his view: Everything in the universe is a quantum wave. (Warning: possible paywall.) Vedral puts his view forward as a radical new interpretation of quantum mechanics. … Continue reading Everything is a quantum wave?

Category: Science

Testing Everettian quantum mechanics

The Everett theory of quantum mechanics is testable in ways most people don't realize. Before getting into how or why, I think it's important to deal with a long-standing issue. Everettian theory is more commonly known as the "many worlds interpretation", a name I use myself all the time. But what's often lost in the discussion … Continue reading Testing Everettian quantum mechanics

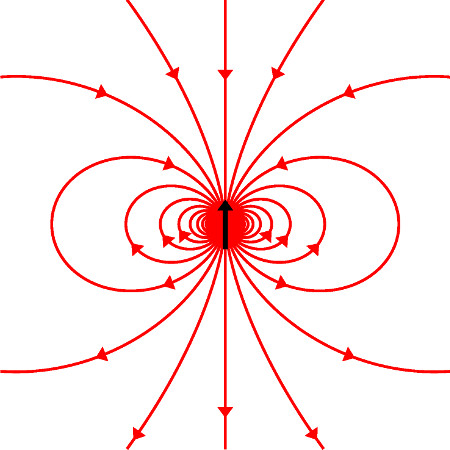

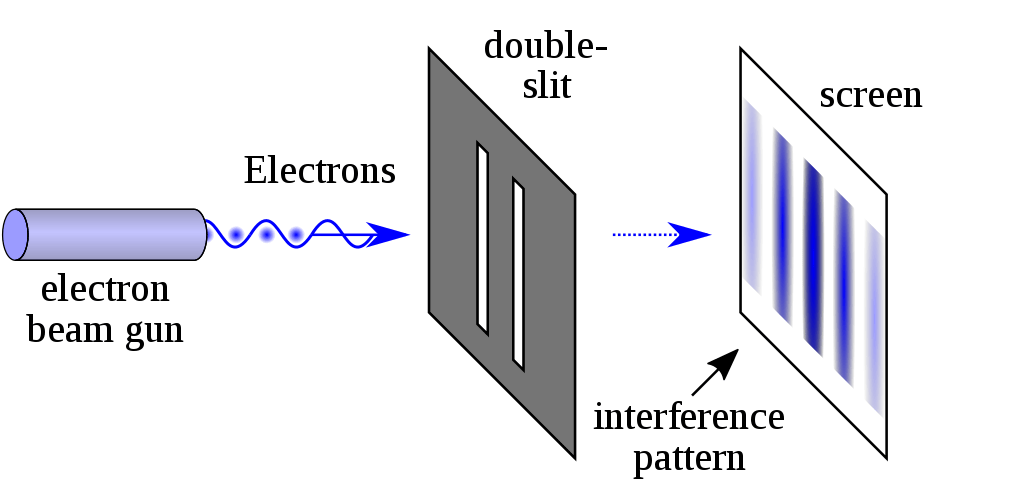

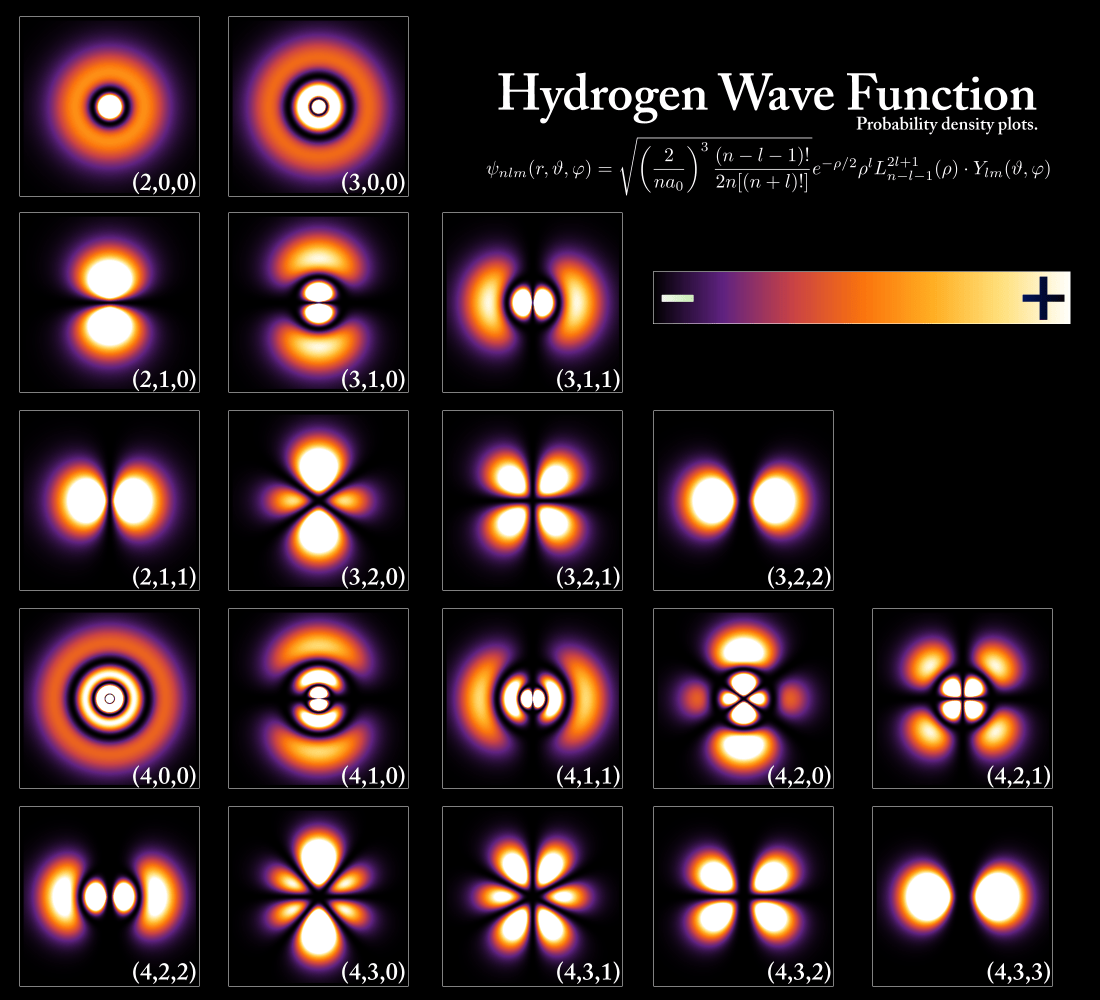

Those inconvenient quantum interference patterns

Are quantum states and the overall wave function real? Or merely a useful prediction tool? The mystery of quantum mechanics is that quantum objects, like electrons and photons, seem to move like waves until they're measured, at which point they appear as localized particles. This is known as the measurement problem. The wave function is a mathematical tool for modeling, … Continue reading Those inconvenient quantum interference patterns

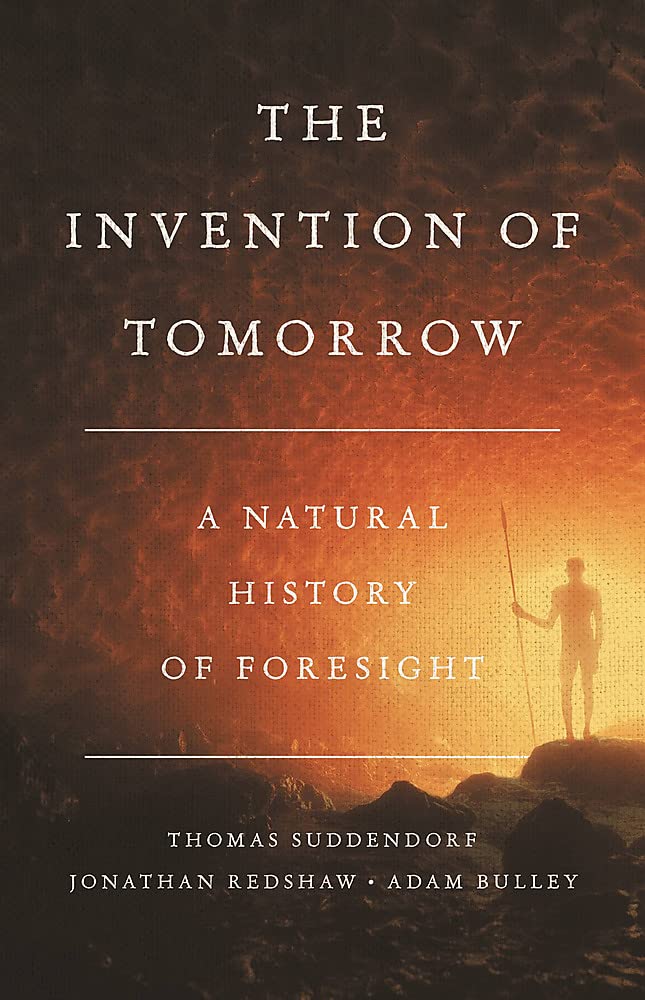

The Invention of Tomorrow

This week I read (actually listened to) The Invention of Tomorrow: A Natural History of Foresight by Thomas Suddendorf, Jonathan Redshaw, and Adam Bulley. I was alerted to the existence of this book by Sean Carroll's interview of Bulley on his podcast, which provides a good overview of their overall thesis. People have long struggled … Continue reading The Invention of Tomorrow

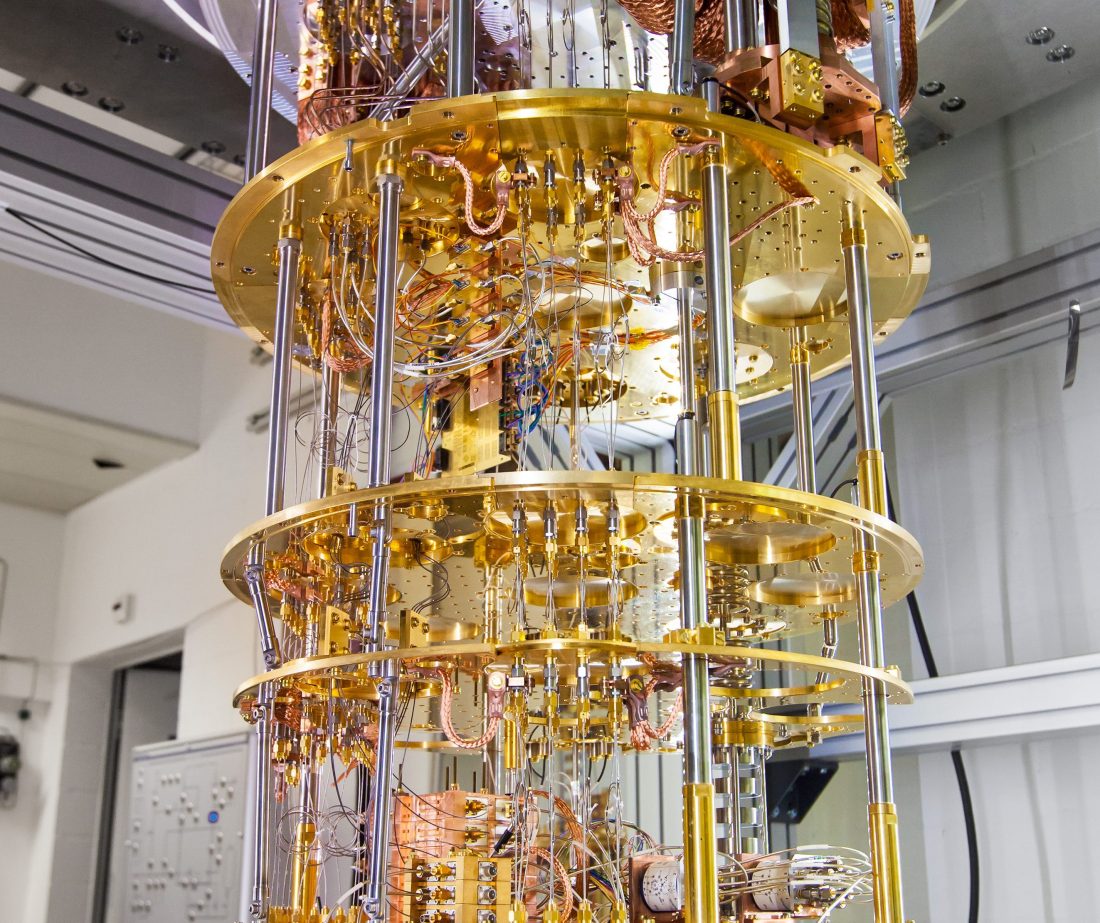

A way to understand quantum computing

The other day I shared a video on quantum computing, which I thought was informative, but the feedback I received was that it wasn't accessible to anyone not already versed in the subject. Since I once struggled to understand this subject myself, I tried to think of a way of describing it that would actually help. … Continue reading A way to understand quantum computing

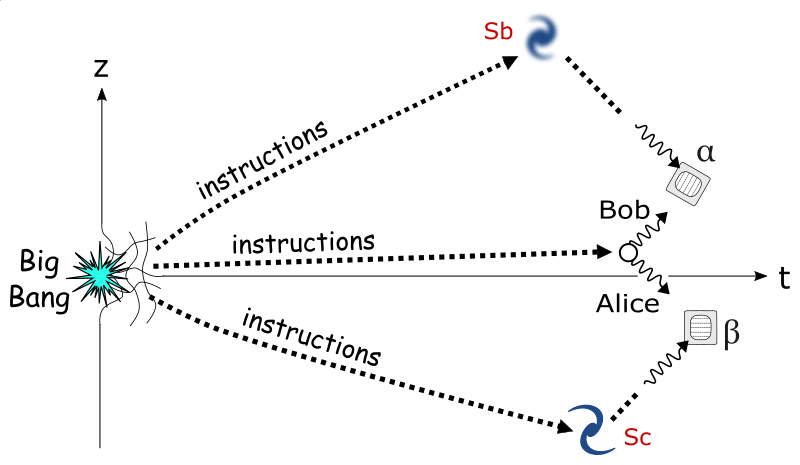

Many-worlds and Bell’s theorem

Sean Carroll's February AMA episode is up on his podcast. As usual, there were questions about the Everett many-worlds interpretation of quantum mechanics (which I did a new primer on a few weeks ago). This time, there was a question related to the correlated outcomes in measurements of entangled particles that are separated by vast … Continue reading Many-worlds and Bell’s theorem

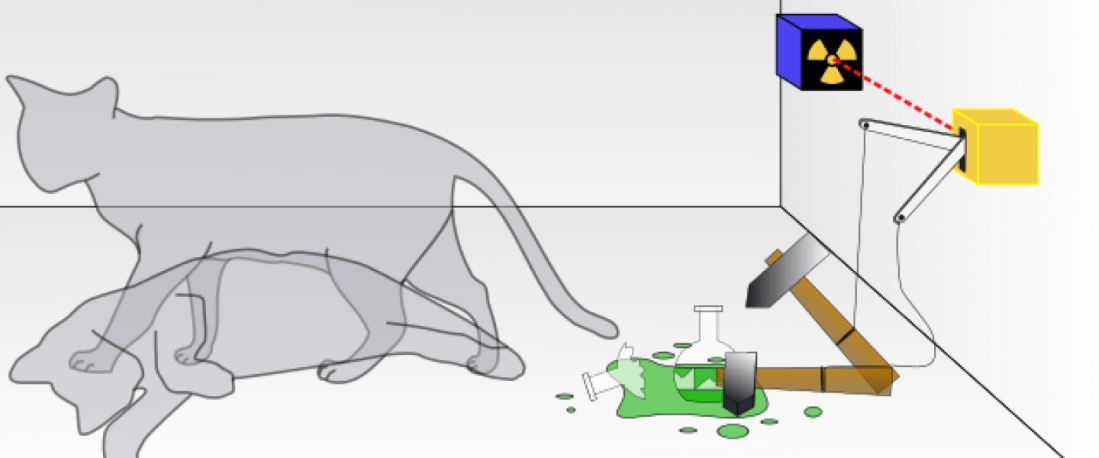

The entanglements and many worlds of Schrödinger’s cat

I recently had a conversation with someone, spurred by the last post, that led to yet another description of the Everett many-worlds interpretation of quantum mechanics, which I think is worth putting in a post. It approaches the interpretation from a different angle than I've used before. As mentioned last time, the central mystery of … Continue reading The entanglements and many worlds of Schrödinger’s cat

The benefits of wave function realism?

The central mystery of quantum mechanics is that quantum particles move like waves but hit and leave effects like localized particles. This is true of elementary particles, atoms, molecules, and increasingly larger objects, possibly macroscopic ones. It's even true of collections of entangled particles, no matter how separated the particles may have become. People have … Continue reading The benefits of wave function realism?

Superdeterminism and the quandaries of quantum mechanics

Last week, Sabine Hossenfelder did a video and post that were interesting (if at times a bit of a rant against strawmen), in which she argued for a little-considered possibility in quantum mechanics: superdeterminism. In 1935, Einstein and collaborators published the famous EPR paradox paper, in which they pointed out that particles that were … Continue reading Superdeterminism and the quandaries of quantum mechanics

Reconciling the disorder definition of entropy

In last week's post on entropy and information, I started off complaining about the most common definition of entropy as disorder or disorganization. One of the nice things about blogging is you often learn something in the subsequent discussion. My chief complaint about the disorder definition was that it's value-laden. I asked: disordered according to … Continue reading Reconciling the disorder definition of entropy