Posting has been a little light lately. A lot going on in real life. But I have managed to sneak in some TV time in the last few months. I read the book Project Hail Mary several years ago and thoroughly enjoyed it. I'm a bit late in watching the movie, but I caught it … Continue reading Dorohedoro and other TV notes

The Astropolis trilogy

In the last post I discussed my recent exploration of Reddit. The one conversation I've started so far was a request for posthuman space opera recommendations, particularly ones without FTL, stories that envision what the future might be like if we can't get around the speed of light limit. One hidden gem recommended was Sean … Continue reading The Astropolis trilogy

Social networks and exploring Reddit

It's been a while since I've done a social media post, something I did more often back in the days of Twitter's convulsions and as people fled to various other platforms. For a long time, I held out hope that the Fediverse would be the new paradigm, but while there remain many enthusiasts, and my … Continue reading Social networks and exploring Reddit

The Faith of Beasts

The Faith of Beasts is the second book of The Captive's War trilogy, authored by James S. A. Corey, the pen name of the writing duo Ty Franck and Daniel Abraham, best known as the authors of The Expanse. This book continues the story of a far future human population conquered by an alien empire, … Continue reading The Faith of Beasts

Children of Strife

Adrian Tchaikovsky's Children of Time books are about exploring different types of minds. In the first book he looked at spider minds, specifically uplifted Portia spiders. In the second it was octopuses and an alien group mind. In the third it was mated birds and another type of mind. In Children of Strife, he continues … Continue reading Children of Strife

Is the eliminative stance productive?

A number of recent conversations, some I've been in and others I've witnessed, left me thinking about eliminative views like the strong illusionism of Keith Frankish and Daniel Dennett. This is the view that access consciousness, the availability of information for verbal report, reasoning, and behavior, exists. But phenomenal consciousness, the qualia, the what it's like … Continue reading Is the eliminative stance productive?

Slow Gods

Claire North's Slow Gods is a grim look at what happens to far future human societies in the vicinity of a supernova. It's a novel with a strong literary feel, one that explores a number of very distinct cultures, including a hyper-capitalistic dystopia, a highly artistic society, and lots of others in between. Early in … Continue reading Slow Gods

Project Hanuman: information as the fundamental reality

Stewart Hotston acknowledges that his Project Hanuman is inspired by Iain Banks' Culture novels. The society he describes, known as the Archology, is very similar to the Culture in many respects. However, where Banks' books usually have the Culture as the dominant civilization technologically, and always have them coming out on top, Hotston's Archology finds … Continue reading Project Hanuman: information as the fundamental reality

The attitude of physicalism

Spurred by conversations a few weeks ago, I've been thinking about physicalism, the stance that everything is physical, that the physical facts fix all the facts. A long popular attack against this view has been to argue that it's incoherent, since we can't give a solid definition of what "physical" means. And so physicalism seems … Continue reading The attitude of physicalism

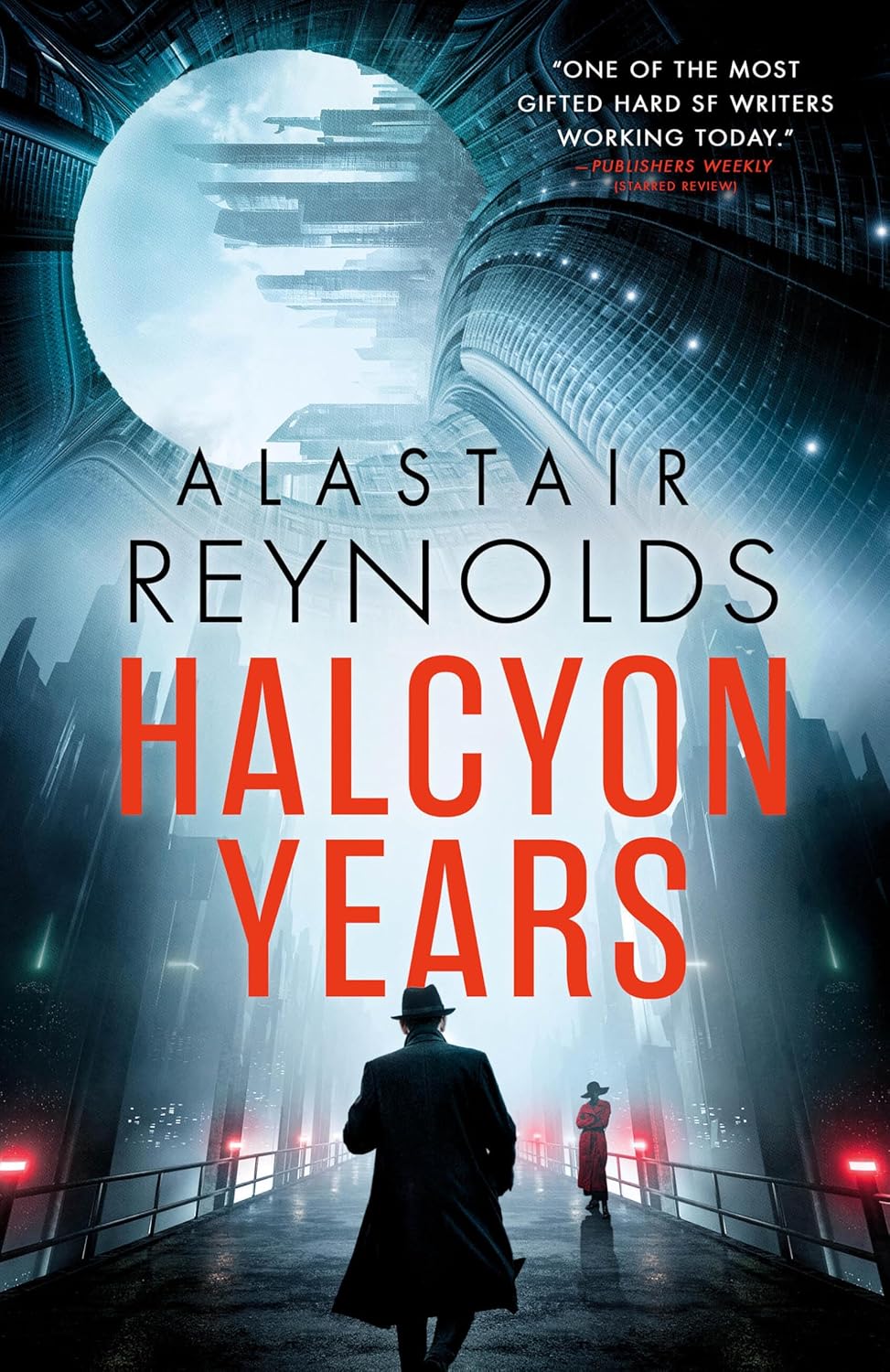

Halcyon Years

Alastair Reynolds' new novel, Halcyon Years, starts off as a murder mystery that takes place on an interstellar generation ship, a sealed O'Neill cylinder type environment, with cities, rivers, lakes, and forests. The ship is ruled by two rich families, the Urrys and the DelRossos, who hate each other. And while there are separate municipal … Continue reading Halcyon Years