A new paper has been getting some attention. It makes the case for biological computation. (This is a link to a summary, but there's a link to the actual paper at the bottom of that article.) Characterizing the debate between computational functionalism and biological naturalism as camps that are hopelessly dug in, the authors propose … Continue reading Biological computation and the nature of software

Tag: Neuroscience

Mind uploading and continuity

As a computational functionalist, I think the mind is a system that exists in this universe and operates according to the laws of physics. Which means that, in principle, there shouldn't be any reason why the information and dispositions that make up a mind can't be recorded and copied into another substrate someday, such as … Continue reading Mind uploading and continuity

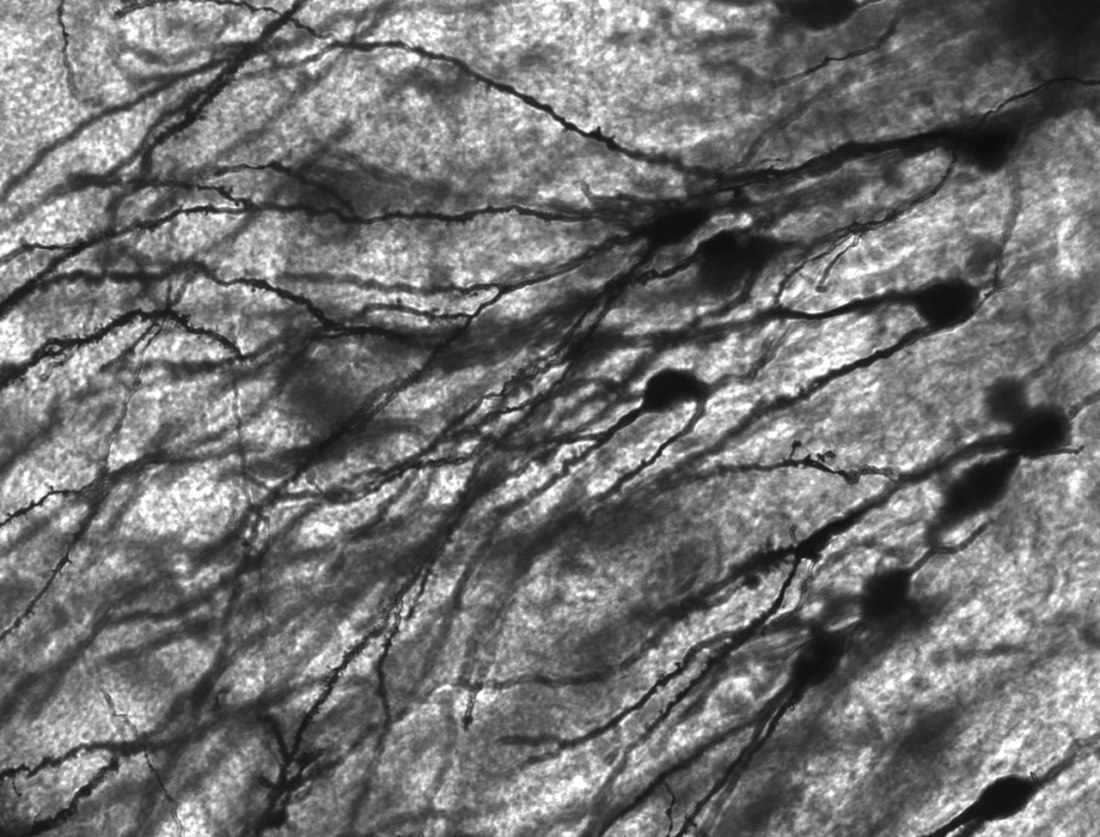

Classic and connectionist computationalism

Spurred by a couple of recent conversations, I've been thinking about computation in the brain. It was accelerated this week by the news that the connectome of the fly brain is complete, a mapping of its 140,000 neurons and 55 million synapses. It's a big improvement over the 302 neurons of the C. elegans worm, … Continue reading Classic and connectionist computationalism

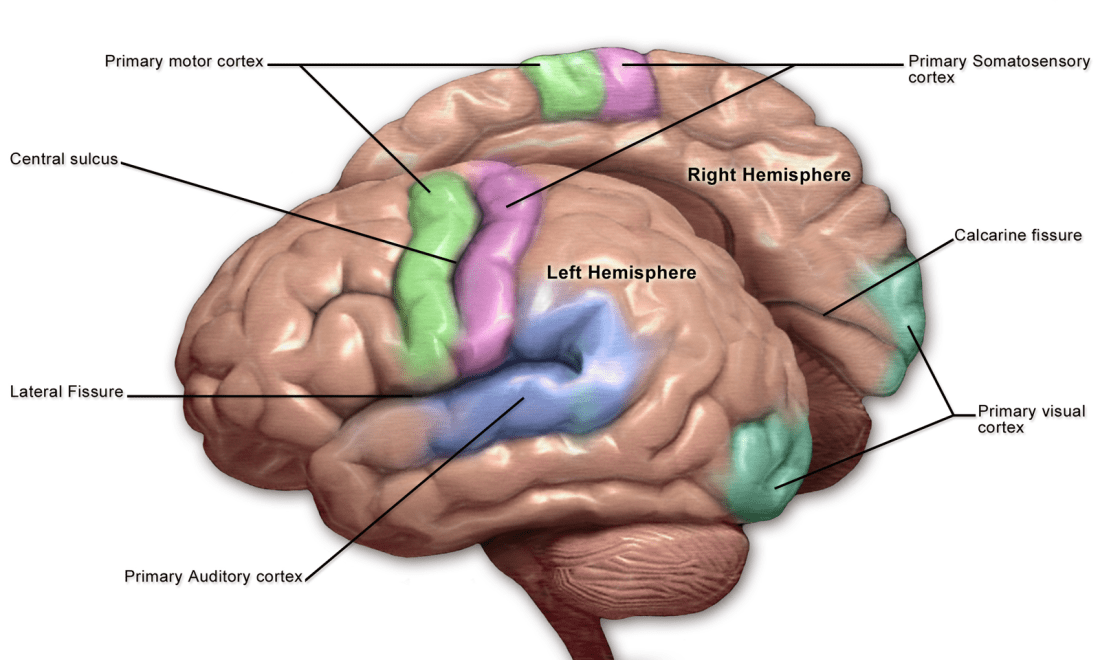

The unity of storage and processing in nervous systems

I think the brain is a computational system and what we generally refer to as the mind and consciousness are some of its computations. But I'm also aware that the brain works very differently from how a typical digital computer works. One criticism of computationalism that I have some sympathy with is the word "computation" … Continue reading The unity of storage and processing in nervous systems

Integrated information theory as pseudoscience?

It's been an interesting week in consciousness studies. It started with Steve Fleming doing a blog post, a follow-up to one he'd done earlier expressing his concerns about how the results of the adversarial collaboration between global neuronal workspace (GNW) and integrated information theory (IIT) were portrayed in the science media. GNW sees consciousness … Continue reading Integrated information theory as pseudoscience?

Experiencing without knowing?

On Twitter, the Neuroskeptic shared a new paper, in which an Israeli team claims to have demonstrated phenomenal consciousness without access consciousness: Experiencing without knowing? Empirical evidence for phenomenal consciousness without access. A quick reminder. In the 1990s Ned Block famously made a distinction between phenomenal consciousness (p-consciousness) and access consciousness (a-consciousness). P-consciousness is conceptualized … Continue reading Experiencing without knowing?

Workspace vs integration: results starting to come in

A few years ago it was announced that The Templeton Foundation was funding an adversarial collaboration on theories of consciousness. The initial plan was to pit Global Neuronal Workspace Theory (GNWT) against Integrated Information Theory (IIT), although the initiative plans to move on to other theories once these have been tested. Early on, I had … Continue reading Workspace vs integration: results starting to come in

The Great Consciousness Debate: ASSC 25

This is a long video. The first hour or so features presentations on the Global Neuronal Workspace Theory by Stanislas Dehaene, Recurrent Processing Theory by Victor Lamme, Higher Order Thought Theory by Steve Fleming, and Integrated Information Theory by Melanie Boly. It also has some brief recorded remarks from Anil Seth on Predictive Coding. (Fleming … Continue reading The Great Consciousness Debate: ASSC 25

Graziano’s non-mystical approach to consciousness

Someone called my attention to a new paper by Michael Graziano: A conceptual framework for consciousness. I've highlighted Graziano's approach and theory many times over the years. I think his Attention Schema Theory provides important insights into how top-down attention works. But it's his overall approach that I find the most value in. He's … Continue reading Graziano’s non-mystical approach to consciousness

How much can we change the causality of the brain and keep consciousness?

James of Seattle clued me in to a thought experiment described by Dr. Anna Schapiro in a Twitter thread. https://twitter.com/AnnaSchapiro/status/1512866137809195011 It's very similar to one discussed in a new preprint paper: Do action potentials cause consciousness? Like all good thought experiments, it exercises and challenges our intuitions. In this case, it forces us to contemplate … Continue reading How much can we change the causality of the brain and keep consciousness?