Anil Seth’s theory of consciousness

I recently completed Anil Seth's new book, Being You: A New Science of Consciousness. Seth starts out discussing David Chalmers' hard problem of consciousness, as well as views like physicalism, idealism, panpsychism, and functionalism. Seth is a physicalist, but is suspicious of functionalism. Seth makes a distinction between the hard problem, which he characterizes as …

Tag: Neuroscience

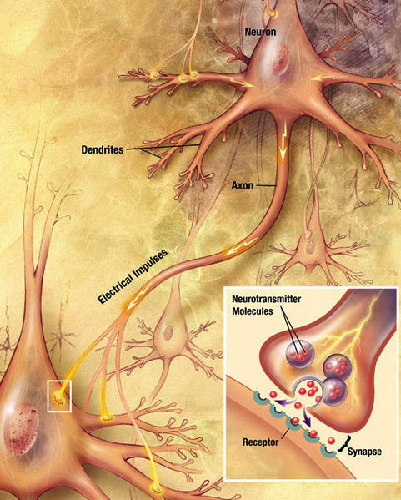

Sources of information on neuroscience

It's been a while since I listed good sources to learn about neuroscience and the brain. I think anyone interested in consciousness and the mind should get a grounding in the basics. It's a bit of work, but the introductory accounts aren't anything unmanageable for someone who can parse philosophically dense material. And it enables …

Perceptions are dispositions all the way down

(Warning: neuroscience weeds) Some years ago, I reviewed Antonio Damasio's theory of consciousness, based on his book, Self Comes to Mind. (He has a newer book, The Strange Order of Things, which I haven't read yet, so this may not represent his most current views.) In that book, Damasio makes a distinction between two types …

Stimulating the prefrontal cortex

(Warning: neuroscience weeds) This is an interesting study getting attention on social media: Does the Prefrontal Cortex Play an Essential Role in Consciousness? Insights from Intracranial Electrical Stimulation of the Human Brain. Ned Block is one of the authors. (Warning: paywalled, but you might have luck here.) The study looks at data from epileptic patients …

The global playground

(Warning: neuroscience weeds) Stanislas Dehaene recently called attention to a paper in Nature studying the brain dynamics of something becoming conscious. The study supports the global neuronal workspace theory that consciousness involves "bifurcation" dynamics, an "ignition", a phase transition between preconscious and conscious processing. Prior to the transition, the processing is feedforward and fleeting. After …

Mark Solms’ theory of consciousness

I recently finished Mark Solms' new book, The Hidden Spring: A Journey to the Source of Consciousness. There were a few surprises in the book, and it had what I thought were strong and weak points. My first surprise was Solms' embrace of the theories of Sigmund Freud, including psychoanalysis. Freud's reputation has suffered a …

The location of the global workspace

(Warning: neuroscience weeds) I've discussed global workspace theories (GWT) before, the idea that consciousness is content making it into a global workspace available to a vast array of specialty processes. More specifically, through a neural competitive process, the content excites key hub areas, which then broadcast it to the rest of the specialty systems throughout …

The dual nature of affects

Mark Solms is coming out with a book on consciousness, which he discusses in a blog post. Solms sees the key to understanding consciousness as affects, specifically feelings, such as hunger, fear, pain, anger, etc. In his view, the failure of science to explain the hard problem of consciousness lies in its failure to focus …

The consciousness of crows

Last week, Science Magazine published an interesting study on bird consciousness: A neural correlate of sensory consciousness in a corvid bird. The study conducted an experiment where crows were trained to respond to a sensory stimulus. The stimulus itself could be at the threshold of perceptibility, above that threshold, or missing. After the stimulus (or …

The unproductive search for simple solutions to consciousness

(Warning: neuroscience weeds) Earlier this year I discussed Victor Lamme's theory of consciousness, that phenomenal experience is recurrent neural processing, that is, neural signalling that happens in loops, from lower layers to higher layers and back, or more broadly from region to region and back. In his papers, Lamme notes that recurrent processing is an …