(Warning: neuroscience weeds)

Some years ago, I reviewed Antonio Damasio’s theory of consciousness, based on his book, Self Comes to Mind. (He has a newer book, The Strange Order of Things, which I haven’t read yet, so this may not represent his most current views.) In that book, Damasio makes a distinction between two types of processing regions in the brain: sensory image forming and dispositions. In terms of evolution, he sees dispositions as being far more ancient. He concludes that we are sometimes conscious of the image content, but never of the dispositions.

This distinction between images and dispositions shows up in the writing of a number of other authors, perhaps influenced by Damasio. For instance, Todd Feinberg and Jon Mallatt follow it in their book, The Ancient Origins of Consciousness. Their take is that sensory or primary consciousness, which they equate with phenomenal consciousness, amounts to the presence and utilization of these images. So for them, the rise of image forming regions during the Cambrian explosion amounted to the rise of consciousness.

In my write up of Damasio’s views, I expressed some misgivings, that the sharp distinction he seemed to be making between the image forming regions and the dispositional ones was probably far messier than he was implying. Over time, with more reading, it’s progressively seemed even messier than I thought back then, to the extent that now I’m not sure any division between dispositions and images really makes sense. The division implies that there are sensory images, and things the brain does with those images. But that implies the images themselves are something passive.

To be sure, we have mental images. The question is how these map to the neural processing in the brain. I think conceiving them as localized neural image maps in sensory cortices, which is often the implication, is far too simple.

To illustrate the issue, let’s consider vision. The following brief summary is inspired by Richard Masland’s excellent book, We Know It When We See It, as well as a number of other sources. (I’m indebted to James Cross for recommending Masland, a top notch writer I was sad to discover passed away in 2019.)

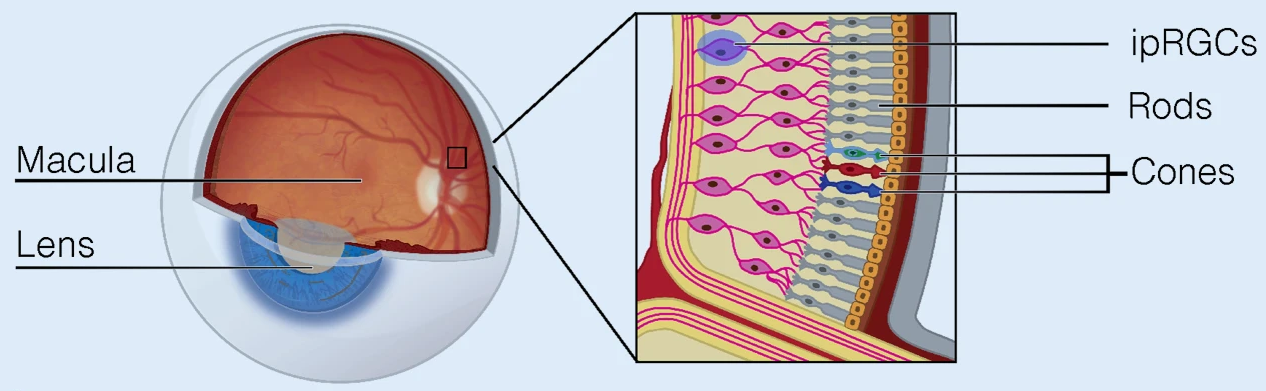

Vision begins with photons striking photoreceptor cells in the retina lining the inside of the eye, including rod cells which detect levels of light and cone cells which are excited by different wavelengths of light. Cone cells are often described as red, green, or blue sensitive, but it’s more accurate to label them as L (long), M (medium), or S (short) wavelength sensitive, since actual color perception is a later conclusion of the brain. The pattern formed on the photoreceptors is the closest we come to a passive image in the visual system.

The retina is a multilayer nerve net, a neural network. The connections from multiple receptor cells in the first layer converge on ganglion cells in the final layer. In the fovea, the central region of the retina where visual acuity (resolution) is at its highest, the number of photoreceptor cells connecting to any one ganglion cell is small, but gets progressively higher as we move out toward the periphery, resulting in lower acuity. In all, about 126 million receptor cells connect to around a million ganglion cells.
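To make the link between convergence and acuity concrete, here is a minimal toy sketch. It assumes a ganglion cell simply averages the photoreceptors feeding it, which real retinal circuitry does not do; the `pool` function and the stripe pattern are hypothetical illustrations, and only the 126 million and one million figures come from the text above.

```python
def pool(receptors, pool_size):
    """Average consecutive groups of `pool_size` receptor activations.

    Toy stand-in for convergence onto one ganglion cell; real retinal
    circuitry is far richer than a plain average.
    """
    pooled = []
    for start in range(0, len(receptors), pool_size):
        group = receptors[start:start + pool_size]
        pooled.append(sum(group) / len(group))
    return pooled


# A fine stripe pattern falling on eight hypothetical photoreceptors.
stripes = [1, 0, 1, 0, 1, 0, 1, 0]

fovea_like = pool(stripes, 1)      # one receptor per ganglion cell: stripes survive
periphery_like = pool(stripes, 4)  # four receptors per ganglion cell: stripes blur out

# Average convergence implied by the figures in the text.
average_convergence = 126_000_000 / 1_000_000

print(fovea_like)
print(periphery_like)
print(average_convergence)
```

With low convergence the alternating pattern is still visible downstream, while with high convergence it collapses into a uniform value, which is the sense in which the periphery has lower acuity.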

There are many different types of ganglion cells (around 30 according to Masland), each of which has receptive fields of varying sizes. Some of these ganglion cells care about color, others light level, or changes in light level, whether there is an edge present, or whether there is movement in a particular direction. For many types, it’s not yet known what they care about. But each point in our field of vision has roughly 30 separate analyzers.

It’s often said that the connections from the retina are topographically preserved all the way to the visual cortex. And that’s true, but misleading. When I first read this years ago, I took it to mean that the pattern of photoreceptor firings gets transmitted to the visual cortex. But that’s not right. It’s the topography from the ganglion cells that is preserved: the 30 different types of analyzers, their initial conclusions, interpretations, and dispositions about the stimuli coming in. In other words, the nervous system starts interpreting visual information right from the beginning, and it is that interpretation which gets passed to the brain.
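The difference between passing along the raw pattern and passing along located conclusions can be sketched in a few lines. This is only a toy under loud assumptions: the two analyzers (`brightness` and `edge`), the one-dimensional "retina", and the window width are all hypothetical, standing in for the roughly 30 real ganglion cell types.

```python
def brightness(patch):
    """Mean activation of the patch (toy 'light level' analyzer)."""
    return sum(patch) / len(patch)


def edge(patch):
    """Difference between the two ends of the patch (toy 'edge' analyzer)."""
    return abs(patch[-1] - patch[0])


# Hypothetical stand-ins for the many real ganglion cell types.
ANALYZERS = {"brightness": brightness, "edge": edge}


def retina_output(receptors, width=3):
    """Return one dict of analyzer conclusions per retinal location.

    The list order mirrors receptor order, so topography is preserved,
    but the raw receptor values themselves are not passed along.
    """
    outputs = []
    for start in range(len(receptors) - width + 1):
        patch = receptors[start:start + width]
        outputs.append({name: fn(patch) for name, fn in ANALYZERS.items()})
    return outputs


# A dark region followed by a bright region.
receptors = [0, 0, 0, 1, 1, 1]
signals = retina_output(receptors)

for location, conclusions in enumerate(signals):
    print(location, conclusions)
```

What leaves this toy retina is a spatially ordered list of conclusions ("it is bright here", "there is an edge here"), not a copy of the receptor pattern.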

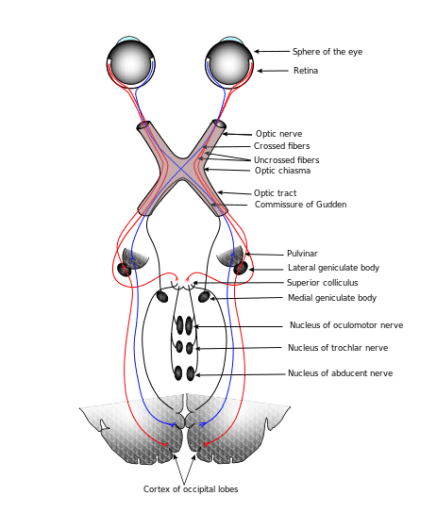

The axons of the ganglion cells run up the optic nerve to the LGN region of the thalamus, a region in the central floor of the cerebrum. It’s not well understood how the signals might be modified there, although it’s thought that attention levels are the primary factor. The ganglion axons synapse to LGN neurons which have axons that run to the visual cortex.

The visual cortex is divided into many regions, with V1 feeding into V2, which feeds into V3, then V4, etc. That’s the feed forward flow, but it’s worth noting that all these cortical regions interconnect and feedback with each other. The neurons in V1 tend to get excited by edges in various orientations, allowing shapes at various spots to be detected. V2 becomes more selective, with the cells gaining some “positional independence”. V3 and V4 start caring about things like colors, movement, or depth.

There are broadly two “streams” in the visual system, the dorsal (top) one, which is the “where” stream, and the ventral (lower) one, which is the “what” stream. The “what” stream flows into the temporal lobe, with regions which light up for various types of objects. For example, a number of regions light up for faces. Moving anterior (forward) in the temporal lobe, the regions gradually become more abstract, more likely to light up for a particular face or recognized object, no matter which angle it’s being viewed at.

I’m obviously vastly oversimplifying here, and there are wide gaps in current knowledge, although what is known forms an outline of a vast nerve net, or network of interacting nerve nets, reaching conclusions about the visual stimulus. Initially these conclusions are simple ones, like brightness, movement, or color. (See these color illusions to see how our perception of color is a preconscious determination made by the nervous system.) As we move through the system, the conclusions progressively become more complex.

The question is, where in all these layers of processing is the image? As noted above, images are often described as being in the early sensory regions, but these are simply early conclusions about the sensory information. Since our introspective access only reaches so early into the process, it’s tempting to regard the image as being the conclusions on that introspective boundary.

But if you look at your current surroundings, the first thing you might perceive is a gestalt, a “gist” of the scene (office, living room, park, etc). If you then focus on a detail, you might notice the gestalt of that smaller scope, such as a desk, TV, or tree. If you focus on a particular surface, you might notice its color and texture, but this is itself arguably just another gestalt, a conclusion, albeit possibly getting closer to the most basic ones we have access to.

I think normal conscious visual perception flits between these various gestalts so fast that it gives us the impression we’re taking in the entire scene at once, but in reality we’re focusing on different aspects of it at a time. Those aspects are always there when we check. Of course, we can view it as the entire brain taking in the entire scene at a time with the focus of attention moving around.

On the neural image, there might be a few ways to look at it. One is to regard the activations throughout the entire perceptual system for that modality as the neural image. For vision, that would include activity in the occipital lobe and much of the temporal and parietal lobes, a much broader scope than what is commonly understood. Another is to regard just the current focus of attention as the image. So when we’re focused on a high level gestalt, the image might be assemblies firing in the anterior temporal lobe. When focused on a particular color, it might be assemblies in V3 and V4, unless we’re thinking about the concept of the color, in which case we might be back in the temporal lobe, or some combination.

The key point is there is no sharp boundary, just a somewhat arbitrary one of convenience for us, a way of thinking about it. The brain itself doesn’t appear to make any distinction. It just continues the processing from lower order perception, to higher order perception, and then to thoughts we might not think of as perception, such as beliefs. So another way of looking at it, perhaps the most productive, is that there are no neural images, just vast distributed assemblies of conclusions, dispositions, which add up to the mental images we perceive. In this view, perception is disposition all the way down.

What does this say about Damasio’s theories, or Feinberg and Mallatt’s? In Damasio’s case, not much beyond the simple distinction he makes being problematic. I don’t recall the rest of his theories hinging much on that particular understanding of images. In Feinberg and Mallatt’s case, they could use one of the interpretations of image I described above, but it makes the simple criteria of images being present… not so simple. Although their conception of affect consciousness should be unaffected.

Overall though, I think it puts pressure on outlooks that insist that phenomenal consciousness is separate from access consciousness. I actually think the motivation to see simple versions of neural images is often driven by these outlooks. But data should trump philosophy.

Unless of course I’m missing something? Are there reasons to draw sharp boundaries between neural images and dispositions that I’m overlooking?

The “visual system” is not plug-and-play? Shocking! 🙂

Glad you liked the book and your summary of vision was pretty good.

“The key point is there is no sharp boundary, just a somewhat arbitrary one of convenience for us, a way of thinking about it. The brain itself doesn’t appear to make any distinction. It just continues the processing from lower order perception, to higher order perception, and then to thoughts we might not think of as perception, such as beliefs. So another way of looking at it, perhaps the most productive, is that there are no neural images, just vast distributed assemblies of conclusions, dispositions, which add up to the mental images we perceive. In this view, perception is disposition all the way down”.

I agree. I’m not even sure it is fair to call one part lower and another part higher since it implies one part is cruder and the other more refined. In reality, I think we could equally say either that there are no neural images or that the neural images are everywhere, or are composites of everything. That also possibly explains why there is so much recurrent processing. Essentially the information from every place needs to be spread out everywhere. In some cases, as much or more goes “down” as comes “up”.

Quoting Masland:

“Amazingly, more axons (80 percent of the total input to the geniculate) run from the visual cortex to the lateral geniculate. Although there have been a number hypotheses, nobody knows for sure what the immense feedback circuit does.”

Didn’t you have a post about a year or so ago on apparent evidence of sensory signals mixed up in brain circuits that didn’t appear to have much to do with sensory processing? I may not be stating that exactly right.

Thanks James.

The “higher” and “lower” nomenclature does tend to give people the wrong impression. All “higher” usually means is further from early sensory sites and closer to action oriented ones. And it’s completely relative. You can have higher order occipital processing which is lower order than parietal or temporal processing.

On the cortical-thalamic feedback circuit, I wonder if this paper came out too late for Masland to include in his book, or if he had issues with it. https://www.pnas.org/content/114/30/E6222

Not sure on a post. I think I remember you, Eric, and I having a conversation about non-sensory signals propagating into sensory regions. If I recall correctly, it was research by Ann Churchland. Masland described how a lot of historical sensory research was done on anesthetized animals. (Mainly for humane reasons since it was very invasive, and often fatal.) There was an article somewhere showing that motor signals propagate into sensory regions, which modulate the sensory processing. It also mentioned the anesthetized work and how these feedback signals weren’t really detected until brain scanners became usable and pervasive.

From what I’ve read in general, a lot of it appears to be so the sensory prediction processing can take into account sensory events resulting from the animal’s own movement decisions.

Perhaps I’m remembering incorrectly.

I do know that we have to compensate for eye blinks and there is also special handling to keep an object stable as either we or the object moves. I think Masland may even talk about that. Actually when we think of movement, it is quite amazing that we can maintain a stable image of a bird flying or a football player running down the field.

Again, really nice post. I really appreciate your explanation of the visual system. And I think your conclusions are likely correct. So let me frame this in my current understanding:

“ So another way of looking at it, perhaps the most productive, is that there are no neural images, just vast distributed assemblies of conclusions, dispositions,” unitrackers. The early unitrackers in V1 track edges, etc. These unitrackers feed forward to subsequent unitrackers for shapes, objects. But feedback to prior unitrackers also happens, which would be the predictive part of predictive processing. So the question becomes, how does access consciousness work.

[bumping speculation level up a notch]

Each of these unitrackers sends an axon to the thalamus as well as gets axon(s?) back from the thalamus. Thus the 80% of axons to the geniculate mentioned by James Cross. The thalamus then becomes a central processing hub which potentially reflects/influences all of the processing going on in the cortex. (Cortex is all unitrackers!). Attention could be controlled by influencing which unitrackers (“duck” or “rabbit”) actually fire in the thalamus (40hz?). Imagination could happen by instigating unitrackers from the thalamus in the absence of visual input (“Visualize a green leaf”). Those feedback paths to prior unitrackers would then become “predictive”, making them more likely to fire given ambiguous visual input.

Okay, that was fun.

*

[cmon, get on team unitracker]

Thanks James!

What would you say is the difference between a neuron and a unitracker? For example, I mentioned the 30 different types of ganglion cells in the retina, each triggering on something they care about, such as a region being red/not green or blue/not yellow, brightness change, motion in a particular direction, etc. Wouldn’t these be unitrackers?

It seems like both unitrackers and neurons “care” about one thing due to causal convergence on downstream connections to them, and when they fire, they flag a conclusion to their downstream neurons. For example, ganglion cells trigger after photoreceptors signal (or not) to bipolar cells, whose processing is modulated by horizontal cells influenced by neighboring photoreceptors and bipolar cells, which then signal (or not) to the ganglion cells, which are modulated by amicrine cells influenced by neighboring ganglion cells. Each of these cell types has numerous sub-types, all of which appear to care about specific things. It makes the ganglion cells something of a higher order tracker taking into account all the conclusions upstream of it.

Switching to a different part of the system, the anterior temporal lobe appears to be the site of concept neurons, such as the Jennifer Aniston neuron. Again, it seems like that neuron has the one thing it cares about and fires when it’s detected.

If a unitracker isn’t a neuron, what work does it do beyond the neuron?

Thanks for the considered response.

In a sense, the ganglion cells could be considered unitrackers, but you mention amicrine cells (which I haven’t heard of) modulating the ganglion cells. I think those modulating cells would be included as part of the unitracker, depending on whether those same cells also modulate other ganglion cells. (I would have to consult Millikan’s book to verify). Hunh, new thought: maybe the cortex is a neo-retina? Maybe this kind of arrangement was “canonized” in the cortex?

As for the Aniston neuron, I look at the structure of cortical mini columns, which I believe have in the range of 100-150 neurons, exactly one of which (I think the pyramidal in layer 5?) sends an axon to the thalamus and maybe receives inputs from the thalamus via dendrites in layer 1. All 150 of the column neurons could be involved in determining whether the outgoing neurons fire, including that single corticothalamic neuron as well as others within the group which send their axons to neighboring columns. Any one of those outgoing axons might look like an “Aniston” neuron. Also, you could have some of those outgoing axons firing while others are suppressed, for whatever reason. (“duck!” “No, rabbit!” “No, duck!”)

*

On amacrine cells (sorry misspelled it above), Masland describes them like this:

Frank Amthor describes them as

(As I indicated in the post, I left out a lot of details.)

Michael Graziano characterized local inhibition as widespread in the cortex. It’s how attention is thought to work, with varying content all striving to win a competition biased by higher order directives, and the various assemblies all trying to inhibit each other. So the retinal principle of lateral inhibition is definitely something inherited from the rest of the CNS.

On the minicolumns, do we know that it’s just one pyramidal neuron to the thalamus and one axon coming in from the thalamus, or is that speculative? From what I’ve read, pyramidal neurons also connect with other subcortical regions (amygdala, hippocampus, basal ganglia, etc) as well as other cortical regions. And I think columns and minicolumns have extensive lateral connections with each other. All of which seems to make the picture more complex. (It’s rare the picture gets less complex. 🙂 )

If I had to provide support for my current understanding of microcolumns, I would probably rely on this paper: https://www.biorxiv.org/content/10.1101/2020.09.09.290601v1.full.pdf

If you have time to read it, I’d like to know what you think.

*

I didn’t read the article but what irks me about these sorts of papers is shown in this statement:

“The cortical circuit model is derived by systematically comparing the computational requirements of this model with known anatomical constraints.”

The model begins with a computational assumption, then works backward from anatomy(?) to derive (surprise surprise) a computational model. What if the entire computational approach is wrong? It might be like the Ptolemaic view of the solar system, it works to a high degree of accuracy but is completely wrong.

Computation is basically assumed in computational neuroscience, and in most of neuroscience overall, the same way evolution is assumed in biology. You can argue that the prevailing paradigm is wrong, but it remains an extremely fruitful one, despite longstanding and constant claims it’s failing or is just about to fail anytime now. I think if it ever really starts to fail, it’ll be abandoned pretty quick in favor of whatever might be better. But the increasing collaboration taking place between neuroscience and AI research shows, if anything, the holdouts are coming around.

Much of the same could have been said of the Ptolemaic view.

It isn’t difficult for me to see that the modern obsession with computing and information as explanation might be a little like finding every problem a nail when the only tool is a hammer.

The Ptolemaic comparison can be made to any scientific paradigm, no matter how successful. It’s a constant reminder that all scientific theories are provisional. But while they work, the onus is on opponents to show specifically where they’re failing.

I think there is a little more involved here than that. The problem as I see it is that almost any natural process with some degree of order can be modeled by a computational method or methods. However, there is no guarantee that the process being modeled actually is a computational process or that, if the process is computational or quasi-computational, the model matches the process.

Flip a coin a hundred times and record each result as a bit. Now convert the bits to a series of numbers. Derive an algorithm to generate the numbers or an approximation of the numbers. The original generation of the numbers was not computational, and there is no guarantee we might not find a different algorithm that could generate the same numbers.
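The coin-flip thought experiment can be sketched roughly as follows. Everything here is an illustrative assumption: `secrets.randbits` draws on operating system entropy as a stand-in for a physical coin, and `model_a` and `model_b` are hypothetical "derived algorithms" that simply replay the record before extrapolating in different ways.

```python
import secrets

# Stand-in for 100 physical coin flips, recorded as bits.
observed = [secrets.randbits(1) for _ in range(100)]


def model_a(n):
    """Reproduce the record, then predict 0 for every later flip."""
    return [observed[i] if i < len(observed) else 0 for i in range(n)]


def model_b(n):
    """Reproduce the record, then predict 1 for every later flip."""
    return [observed[i] if i < len(observed) else 1 for i in range(n)]


# Both algorithms fit the observations perfectly...
fits_a = model_a(100) == observed
fits_b = model_b(100) == observed

# ...yet they are different algorithms, as their next prediction shows.
diverge = model_a(101) != model_b(101)

print(fits_a, fits_b, diverge)
```

Both models generate the recorded numbers exactly, neither resembles how the numbers were actually produced, and the data alone cannot choose between them.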

Again, the criticism you’re leveling here applies to all of science. Theories are formulated on past observations, but there is no guarantee they represent the underlying causal reality. Future data could always require revision or throwing the whole model away. That’s science.

Perhaps. But it is especially an issue in neuroscience in my view because it plays implicitly into the assumption that consciousness and brain activity are just information processing, that neurons and circuits are like logic gates, which carries with it a digital bias.

There are a few papers around that reject a computational paradigm.

https://www.tandfonline.com/doi/full/10.1080/10407413.2019.1615213?casa_token=_uHoY-itsdIAAAAA%3A-JT40fvTTe5c54-LQHpWO6hPQTvyKklmITJEUhIELwJySNiyDDQYU3y348-WnMkLU9ZS2AmI3iKI

https://www.ingentaconnect.com/contentone/imp/jcs/2017/00000024/f0020001/art00010

This article makes an interesting argument that distinguishes between computers and emulators or simulators. That is approximately what I am getting at. It specifically talks about how the brain probably can’t scale to handle complexities of the real world if it is only computing and that simulation would be the alternative.

“We will argue that machines implementing non-classical logic might be better suited for simulation rather than computation (a la Turing). It is thus reasonable to pit simulation as an alternative to computation and ask whether the brain, rather than computing, is simulating a model of the world in order to make predictions and guide behavior. If so, this suggests a hardware supporting dynamics more akin to a quantum many-body field theory”.

“Beyond this example of the motor system, if the brain is indeed tasked with estimating the dynamics of a complex world filled with uncertainties, including hidden psychological states of other agents (for a game-theoretic discussion on this see [1, 2, 3, 4, 9]), then in order to act and achieve its goals, relying on pure computational inference would arguably be extremely costly and slow, whereas implementing simulations of world models as described above, on its cellular and molecular hardware would be a more viable alternative”.

https://link.springer.com/chapter/10.1007/978-3-319-95972-6_3

In this case, the jump from computing to simulation corresponds to the jump from neurons alone firing to neurons firing + some wave-like capability. It follows naturally from an evolutionary standpoint that additional adaptation became blocked because of energy and resource limitations of the neuron circuits alone, so an additional mechanism needed to be added.

If you dig, you can find papers for just about anything, particularly by philosophers. Even with scientific papers, they are part of an overall conversation in the field. Trying to argue by paper citation is plucking out fragments of that conversation. It’s why I prefer write ups by experienced experts, like Masland, who know what to take seriously in the field and what’s just noise. The info isn’t as current, but it’s a lot less likely to be misleading.

Actually Xerxes D. Arsiwalla apparently is a physicist who has done a lot of work in complexity in networks, neuroscience, but has some papers on stars and black holes.

For sure if we’re looking at neurons, brains, and vision, I would go with Masland too.

When we get to issues like consciousness, there isn’t really any consensus and the issue branches into many different areas. I think you have written a good deal on Chalmers and Dennett among others so you don’t seem to limit yourself to consensus neuroscientists.

Certainly expertise is domain specific, something we should keep in mind when someone is proposing a radical theory in another field, particularly when virtually no one in the actual field takes it seriously. Chalmers and Dennett are good examples of people who avoid that trap. They both leave neuroscience to the neuroscientists. In Dennett’s case, he’s basically interpreting neuroscientific findings; in Chalmers’, he’s positing things beyond the science, but in a way that’s compatible with it.

Thanks. I’ll try to parse it this week.

Interesting post Mike.

Just a nit about a common language usage: We don’t perceive color because there is no color in the world. Instead, we perceive wavelengths of light which are represented/simulated in conscious images as colors.

Thanks Stephen.

On the nit, that seems like it’s in the territory of: “If a tree falls in the forest with no one around, does it make a noise?” It depends on how we define “noise” or “color”.

Actually I would say we do perceive color.

I would probably also say there is color in the world – it’s in our perception which is not only about the world but is of the world. There likely is not color in the world external to our perception but we can’t be completely sure there are wavelengths either since that is an hypothesis about the world.

“I think normal conscious visual perception flits between these various gestalts so fast that it gives us the impression we’re taking in the entire scene at once, but in reality we’re focusing on different aspects of it at a time.”

This may be a little off topic, but this part of your post made me think of something. When I started learning to draw based on direct observation, it was sort of a reality-bending experience, and I think what you said explains why. In my daily life, if I walk into a room, it does feel like I just take in the entire scene all at once. But if I sit down with a sketch pad intending to draw a picture of the room, I’m sort of forcing my visual perception to slow down and focus on one “gestalt” at a time. It really feels like a completely different experience of reality.

That’s interesting. I’d imagine artists are much more aware than the rest of us how little we really take in during a quick glance of a scene. That you’d be confronted with it as soon as you try to draw it. And I can see the need to slow down, explicitly look at each part of the scene at a time, a much more active type of observation.

It reminds me of a conversation I had a year or two ago about the colors of the walls in the men’s bathroom at work. I was absolutely convinced they were some shade of white or grey, something colorless. The next time I went in, to a room I’d been going in daily for decades, I was shocked to discover they were maroon and blue. I had just never paid attention to the colors in there before, and wasn’t the least conscious of the omission.

Makes sense to me. There are a lot of things in the world that just go unnoticed, unless you’re given some reason to notice them.

I’m actually planning to do a post on color perception at some point. That’s another thing that gets weird for me, depending on the color palette I’m using in my current WIP. For example, a while back I was working on a drawing that used a lot of turquoise. Then, when I stepped outside for a few moments, my brain conceptualized the sky and the trees as two different shades of turquoise, rather than as blue and green.

On a thread a while back, we got into a conversation about red-green color blind monkeys who had had their genetics changed to make their vision trichromatic instead of the dichromatic vision they were born with. We got into a long conversation about whether they would actually have been seeing a new color or just now be able to distinguish two different shades of an existing color. Since then, I’ve come to realize, there’s no fact of the matter, just what their nervous system eventually decided warranted making different conclusions about.

It’s a very counter-intuitive conclusion, but it matches up with your experience, and what appears to be the case in the neuroscience.

Anyway, looking forward to your color perception post!

Just a question Mike; I’ve never been clear on what is meant by the term ‘disposition’. In the context of neural networks including the human brain I’m guessing it means something like “a particular neuron or neuronal assembly is disposed (or tends?) to prefer certain “conclusions” (resultant states?) over others.” But that’s just my guess, based on readings in cognitive science, including your excellent blog. I’d appreciate a brief description of your understanding of the term.

Regards,

Jim

That’s a good question Jim. You made me go back to Damasio’s book to see how he discusses it. He largely equates them with reflexes, survival programs, or automatic actions. Although with the distinction he ends up making, labeling everything in the brain as either an image or disposition, they effectively become any thought or belief that isn’t sensory in nature.

Here he is discussing it.

As I indicated in the post, I’ve become suspicious of how productive this distinction is. It seems like all we have are increasingly sophisticated assemblies of dispositions. Even if we do keep the image map category, we have to admit that the distinction is far blurrier and messier than he describes.

Ah, thanks Mike. If that’s what’s meant by ‘disposition’ (I read the Damasio excerpt describing disposition as roughly analogous to a software conditional statement) then I’m inclined to agree that it’s “dispositions all the way down”. It might be useful to bracket a special class of dispositions for those that are taking input from the map or model of one’s self.

Thanks Jim. I definitely think it’s productive to categorize the processing into classes like sensory (modal and multimodal), motor, planning, etc. I even think words like “representation” or “model” are appropriate. But “image” feels like it conveys the wrong idea unless we’re speaking from the inner mental perspective. It’s often more carefully put as “image map” which is better, but “map” gets dropped far too often.
