Last week, Sabine Hossenfelder published a video and post that were interesting (if at times a bit of a rant against strawmen), in which she argued for a little-considered possibility in quantum mechanics: superdeterminism.

In 1935, Einstein and collaborators published the famous EPR paradox paper, in which they pointed out that particles that were entangled would collapse into compatible states when measured simultaneously, even if separated by vast distances. This appeared to violate locality, a bedrock principle of relativity. It seemed to require faster than light communication between the particles. Einstein argued that there had to be hidden variables that determined the states from the time the particles were entangled. In other words, quantum mechanics was incomplete.

Three decades later, John Stewart Bell discovered a way to test this proposition. Bell noted that when measuring spin states along various axes, the statistics of the results would be different if Einstein was right instead of quantum mechanics. In the years that followed, the tests were conducted. The results were compatible with quantum mechanics. Einstein was wrong. This result is usually taken to indicate that no local hidden variable theory can reproduce the predictions of quantum mechanics. (Non-local hidden variable theories weren’t impacted, although they have other issues.)
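To make the difference in statistics concrete, here is a minimal sketch using the CHSH form of Bell's inequality, which is one common way the test is framed rather than necessarily the form used in any particular experiment. It assumes the textbook singlet correlation E(a, b) = -cos(a - b). The function names and the choice of angles are mine, for illustration only.

```python
import itertools
import math


def quantum_correlation(angle_a, angle_b):
    """Quantum prediction for the spin correlation of a singlet pair
    measured along axes at angle_a and angle_b (radians)."""
    return -math.cos(angle_a - angle_b)


def chsh_quantum(a, a_prime, b, b_prime):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    E = quantum_correlation
    return E(a, b) - E(a, b_prime) + E(a_prime, b) + E(a_prime, b_prime)


def chsh_local_max():
    """Largest |S| when each particle carries predetermined answers
    (+1 or -1) for both of its possible settings, i.e. a deterministic
    local hidden variable assignment. Found by brute force."""
    best = 0
    for A, A_p, B, B_p in itertools.product((1, -1), repeat=4):
        s = A * B - A * B_p + A_p * B + A_p * B_p
        best = max(best, abs(s))
    return best


# Illustrative settings (an assumption): the standard angles that maximize |S|.
s_quantum = chsh_quantum(0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
s_local = chsh_local_max()

print(f"local hidden variable bound: |S| <= {s_local}")
print(f"quantum prediction:          |S| =  {abs(s_quantum):.3f}")
```

Any probabilistic mix of the predetermined assignments is an average of these cases, so it cannot exceed the same bound. The quantum prediction does exceed it, and that gap is what the experiments look for.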

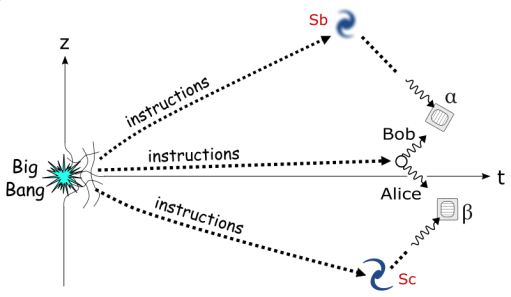

However, Bell admitted there was still a loophole for his theorem. If the decisions the experimenter made to measure the particle and the state of the particle itself were somehow forced to be correlated (violating statistical independence), it was still possible to produce a local hidden variable theory. Einstein couldn’t be right, but perhaps some prior common cause in the history of the experimental setup and the particle was keeping things in sync.

Over the decades, various experimenters have pushed the boundary on when these prior causes might exist. One experiment a few years ago used light from quasars billions of light years away to ensure the causes of the experimental setup were as far into the past as possible. If a prior cause is determining the correlation, it now has to be found in the early universe.

I think this is why most physicists consider superdeterminism unlikely to bear fruit. It’s not an issue of determinism, but of unlikely correlations the entire universe has to enforce.

However, Hossenfelder argues that the causal history isn’t relevant. The only thing that matters is the state of the measuring device at the time of measurement. What the particle does, she asserts, is dependent on how it’s measured. At first glance, this doesn’t seem that different from conventional views of quantum mechanics. Except for the assertion that it’s all deterministic.

How can this be? Going through her preprint paper on this, it appears that Hossenfelder is relying on a type of retrocausation. Although she objects to the term “retrocausation” because it implies that information is somehow going backward in time, which isn’t what’s being argued. She prefers the term “future-input dependence”.

If I’m understanding correctly, the point is that all physical theories are time symmetric. They don’t prescribe the order of cause and effect. All theories, that is, except for the second law of thermodynamics, which states that entropy always increases, which is what usually gives the classical order between cause and effect. But the second law is emergent from the particle physics of large populations of particles. It doesn’t exist in fundamental interactions between particles. The idea, I think, is that the future state of these particles could impinge on their past states.

It’s an interesting proposition, but my initial take is that the experimental setup used to measure a particle is a macroscopic system with a vast population of particles, one where the second law, and with it the normal directionality of cause and effect, should definitely apply. There’s a discussion in the paper on causality that may address it, but if so it was over my head. I suppose it’s conceivable that the future-input dependence could funnel through one or a small number of particles, perhaps the particles that initially interact with the one to be measured. These initial particles might be in their state due to the state of the overall measuring apparatus.

I can’t say I find this line of reasoning too convincing, but I’m not a physicist and so could be missing important facts. I’d be curious to see what other physicists think.

I think a bigger issue for me is the fork Hossenfelder takes earlier in her reasoning, although it’s a common one, to deny wave function realism. I’ve always had an issue with that move, because it seems to ignore the main reason we have the wave function in the first place, the observed interference effects. If the wave function isn’t modeling something real, where are those effects coming from? What is interfering with what? Or what is giving us the impression of that interference?

I can understand why many make this move, because accepting wave function realism puts you in a quandary. It means the wave function collapse, if it happens, has to be a physical event, which seems to bring in a huge pile of mysteries and the paradoxes Einstein objected to. Or we have to accept that the collapse itself isn’t real, which puts us in either pilot-wave or many-worlds territory. And once there we have to choose between explicitly non-local dynamics or an ontology many consider hopelessly extravagant.

But as Hossenfelder herself states, it’s a mistake to reject a proposition just because we don’t like the philosophical implications. In her view, that means we accept what “all those experiments have been screaming us into the face for half a century” that superdeterminism is reality. Of course, others will contend that she herself is only tempted to take the hidden variable path to avoid the other philosophical implications she dislikes: indeterminacy, non-locality, many-worlds, etc.

Still, superdeterminism doesn’t seem like an option we can completely rule out. Hossenfelder is calling for more measurements of the very small and very cold to stress the regular quantum formalism. Even if all it does is confirm quantum mechanics, that seems worth it. And if it does find cracks, well, who knows what they might reveal. Although I suspect the hope that it will make things less weird is likely to be forlorn.

As with all discussions about quantum mechanics, if it doesn’t leave your conception of reality shaken, you haven’t understood what’s at stake.

What do you think about superdeterminism? A promising path, or a misguided attempt to wrench things back into classical physics?

“And if it does find cracks, well, who knows what they might reveal. Although I suspect the hope that it will make things less weird is likely to be forlorn.”

Are you saying that if a new theory comes along to replace quantum mechanics, it will be even weirder than quantum mechanics? Because that’s a really interesting thought. I could totally see that being the case.


It does seem like the history of science is that when old theories are replaced by newer ones, the newer ones are almost never less bizarre. The Ptolemaic view had to give way to the Newtonian one, which eventually had to give way to the Einsteinian and quantum ones, each a shift that challenged our preconceptions. It was arguably those preconceptions which prevented us from even considering those newer theories earlier.

It makes sense if you think about it. We wouldn’t have needed 500 years of science if reality matched our old intuitions.


I viewed her presentation about the same time you did. Being a chemist, this is way above my pay grade, but I have a few thoughts.

Like scriptures supposedly being inspired or even written by an all-powerful god, if they need interpretation, they were poorly written, and hence not god-sourced. Similarly, if a foundational theory like quantum mechanics, the most accurate theory ever devised, requires interpretation, then this universe wasn’t created by an all-powerful god. (Why make it so difficult if there are many easier structures? We could live quite well in a Newtonian universe, couldn’t we?)

I keep reading, and occasionally viewing such presentations, in the hope that someone will explain it well enough that a chemist with a 140+ IQ score can make some sense of QM. (A little sense, it is all I ask! :o)


Yeah, if there is a God, QM might be slightly beyond the edge of what he intended us to see. Either that or it might mean he’s more Loki than kindly father and set things up to piss us off.

Although it’s worth remembering that Newton and Darwin solved puzzles that were every bit as perplexing to people of their time as QM is to us. Newton’s contemporaries struggled to understand what determined how the heavenly bodies moved, until Newton showed that it was the same force that made apples fall. And Darwin (and Mendel) basically explained life.

But definitely, all we can do is keep learning, and be cautious when we think we’ve arrived at the answer.


FWIW, I’m trying to do that very thing with my QM-101 series. It’s meant for people who’ve followed QM at the pop science level but would like to peek under the mathematical hood a little. It’s been said that no intuition can grasp QM and understanding the math is necessary to move beyond what we can vaguely intuit. I’ve been on a mission to try to learn that math, and it turns out they’re right. It kinda changes your view.


I’m not sure we could live in a Newtonian universe, given that so much of chemistry etc. depends on quantum mechanics. Open to correction, as you’re a chemist with a 140+ IQ and I’m not!


You’ll have to put me down for a misguided path. I don’t believe the universe is deterministic, let alone superdeterministic. I can only repeat views I’m sure you know I have: my guess is we live in a single universe (no multiverses) and the wavefunction is obviously real (at least in some sense) at the quantum level but becomes literally incoherent at the classical level. I don’t see collapse as the bugaboo many do, but see superposition, interference, and entanglement, as the deep quantum mysteries we need to solve. Within that, I believe quantum measurement is indeed random, so the universe cannot be deterministic. (On top of that I think brains might be the one non-deterministic classical mechanism reality has produced.)

So,… thumbs down on superdeterminism. 😉


Definitely that assessment matches your previous stances. Hossenfelder is calling for tests of the very small and cold. The tests for physical collapse seem more aimed at the increasingly large and cold. If there is a physical collapse, hopefully we’ll eventually be able to see it in the data. Although I suppose it depends on just how far into the process it happens. If it takes 10^20 particles, it might be a long time.

I’m sure you’ve seen the reports of someone claiming to have entangled a tardigrade. (Of course, the tardigrade survived it.) Still waiting to see how seriously to take that. But there are more substantiated reports of other macroscopic objects: https://physicsworld.com/a/quantum-entanglement-of-two-macroscopic-objects-is-the-physics-world-2021-breakthrough-of-the-year/

Although the temperature itself could be a confound.

If we do discover a physical collapse, the thing to do then would be to see if it’s fundamental, or can be reduced in any way.


Unlike Doctor Who, I am consistent! 😀

In her usual talk, Chiara Marletto has a slide about how far up the size scale we’ve come in terms of superposition. I can’t find her slide online, but I think it’s between a virus and a bacterium. The slide is about how far we have to go to demonstrate true macroscopic quantum behavior — to illustrate macro-objects have wavefunctions. (I don’t dispute they do, but I think talking about their quantum behavior is like talking about the conversation being held in a packed stadium.)

One test has been getting interference by sending the object through a grating, but the larger the object the smaller its wavelength, and at some point, there’s no physical way to make a grating small enough. Another (such as in the article you linked to) is superposition, getting a physical object such as a cantilevered beam or drumhead to vibrate and be in two places at once. As you say, cold is a big part of this. (So is vacuum and serious EMF shielding.)
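The wavelength point can be put in numbers with the de Broglie relation, wavelength = h / (mass × speed). This is only a rough sketch: it assumes non-relativistic speeds, the virus mass is an order-of-magnitude placeholder, and the shared speed is arbitrary, chosen so that only the mass differs.

```python
PLANCK_CONSTANT = 6.62607015e-34  # J*s (exact SI value)


def de_broglie_wavelength(mass_kg, speed_m_per_s):
    """Non-relativistic de Broglie wavelength in meters: h / (m * v)."""
    return PLANCK_CONSTANT / (mass_kg * speed_m_per_s)


ELECTRON_MASS = 9.109e-31  # kg
VIRUS_MASS = 1e-18         # kg, order-of-magnitude placeholder (assumption)
SPEED = 100.0              # m/s, arbitrary illustrative speed (assumption)

electron_wavelength = de_broglie_wavelength(ELECTRON_MASS, SPEED)
virus_wavelength = de_broglie_wavelength(VIRUS_MASS, SPEED)

print(f"electron: {electron_wavelength:.2e} m")
print(f"virus:    {virus_wavelength:.2e} m")
print(f"ratio:    {electron_wavelength / virus_wavelength:.2e}")
```

At the same speed the wavelength shrinks in direct proportion to the mass, which is why the grating spacing needed for visible interference becomes impractical as the objects get heavier.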

The tardigrade was frozen to 0.01 Kelvin and used as the dielectric in a capacitive qubit (something called a transmon). Near vacuum, too: 0.000006 mbar. Then that qubit was entangled with another (normal?) qubit. As a component of the first qubit, presumably the tardigrade was part of that entanglement. Later, the tardigrade was retrieved, warmed up, and was alive. I wouldn’t attach much meaning to the experiment. Some believe entanglement exists in wet messy environments anyway. A question I have is whether the tardigrade became something like a Bose-Einstein condensate and the whole qubit system has a single quantum state, or do the particles of the tardigrade have their own, but are able to participate in the entanglement of the qubit? Or, in being just part of the qubit, is the question moot? Bottom line, I’ll get excited when they have actual quantum cats.

Without getting too far into the weeds, I’ve come to think “collapse” is a misleading term. “Interaction” might be better. In the mathematics we use, interaction causes the state vector to suddenly jump to an eigenstate (if it wasn’t already there). This isn’t magic; it’s accounted for by the application of an operator. The classical math describing the parabola of a thrown ball likewise suddenly jumps when the ball hits the ground. The problem isn’t mathematical, it’s what Einstein really meant when he said “spooky action at a distance” — that an apparent wave suddenly vanishes into a point of interaction. That is still a mystery, and I think it’s outside QM — we need QFT to solve what happens during interaction.


I’ve seen a couple of Marletto talks but I don’t recall that slide. I might have to dig up that presentation. I wonder if she did it for the On the Shoulders of Everett conference. I’ve watched a few of those so far, but not hers. Those talks spend a lot of time over my head. But I didn’t know we’d gotten to virus size yet, although I suppose that might apply to those drums.

I like the packed stadium analogy.

One thing reading about the history of science has done is make me leery about saying anything is impossible to measure. There may eventually be innovations, ways to measure we haven’t thought of yet.

Yeah, I have no idea on the tardigrade. I’ll let the physicists duke that out. On entanglement in warm wet biology, it seems like there’s a lot of noise around quantum biology, with lots of breathless stories in the press, but the details never seem to include conclusive evidence. And I always wonder how superpositions can remain coherent for long enough to make a difference in biology. But a lot of people are looking; maybe we’ll have evidence soon.

Interaction is the term Rovelli uses in his relational quantum mechanics interpretation. Although he takes an anti-real stance on the wave function, so for him it’s more of an epistemic collapse or update. Of course, for him any interaction involves that update, but only for the interactees.


I believe Marletto does use that slide in her Everett talk (which isn’t really about Everett at all but about the Constructor Theory she and Deutsch have been working on for nine years). Those Everett talks have quite a range. Some are very good, but that guy with the long white beard painted a picture that put consciousness center stage. I stopped watching that one after a while.

Of the three QM aspects I mentioned, entanglement is kind of the oddball. Despite what Feynman said about the two-slit experiment embodying everything weird about QM, entanglement isn’t part of that picture. We see QM entanglement weirdness in Bell’s experiments, though. And I sometimes hear about large-scale entanglement, lots of particles somehow all entangled. It somehow feels like a separate aspect of QM, and it might be that, like “decoherence,” the term gets used somewhat loosely or broadly.

I think with biological systems it’s good to remember that chemistry is a quantum effect. At the molecular level there are clear quantum effects, but we don’t see them large-scale. The question might be to what extent chaos magnifies those small-scale effects. With photosynthesis, for example, some believe small-scale quantum effects make energy exchanges more efficient than classical mechanics accounts for.

Careful you don’t take that stadium analogy too much to heart. You’ll end up being as skeptical of the MWI as I am! 😀


On the conference, so far I’ve only watched the David Wallace and Scott Aaronson ones. I somewhat followed Wallace but remember being quickly lost by Aaronson a while back. Perusing the channel, looks like a lot of interesting ones. Something to maybe do during the break this week. I’ve seen other versions of the constructor talk, so maybe I’ll prioritize the others. Thanks for the warning on the Don Page one.

At this point, I think of entanglement as just being a larger system in superposition. Reportedly atoms and molecules sent through the double slit show the same effects, and those seem like basically collections of entangled elementary particles. Hossenfelder makes a good point that the spatially separated entangled particles just shine a light on an issue that exists with wave function collapse in general. But you’re right that the double slit experiment doesn’t flush out that particular issue, at least not obviously.

We might be taking away different things from the stadium analogy. I saw it as being about the difficulty of accounting for decoherence.


I haven’t watched the Wallace one. The title is certainly provocative. 🙂 I watched the ones by Aaronson, Marletto, Vaidman, Saunders, Hemmo, and Zurek. And Don Page, but I only got partway through that one.

“I think of entanglement as just being a larger system in superposition.”

They’re quite different. Both have different formal mathematical definitions. A superposition is multiple quantum states added together, and they retain their individuality (they can be individually affected). In entanglement, the wavefunction is “inseparable”. The system cannot be described in terms of separate wavefunctions, and anything that affects part either affects the whole or breaks the entanglement.

Large-scale two-slit experiments (multi-slit gratings, actually) are looking for the same thing photon and electron versions do: interference due to superposition of multiple paths.

The stadium analogy, yes, involves the decoherence of all the particles of a macro-system, but it goes on to compare the idea of a measuring instrument going into superposition with the idea of stadium conversations being affected by a single conversation. (The other analogy is throwing ping-pong balls at an ocean liner.)


I’ve had far less exposure to the math, but based on what I have seen, it didn’t seem as categorically different to me as it does to you. My impression was that every allowable permutation of the individual states of the particles are multiplied together, and then all those composite states are added together for the composite superposition, just as they are for a single particle. Except for particles that are anti-correlated, then the composite states are subtracted. But I’m at the limits of my understanding and may well be missing something.

I think the point Hossenfelder makes in this video, is that entanglement really just highlights issues with superposition and the putative collapse: http://backreaction.blogspot.com/2021/05/what-did-einstein-mean-by-spooky-action.html

With the stadium analogy, due to its organization, it seems like not everyone in the stadium is an equal player. People on the field have an outsize effect on the rest of the stadium. The entire stadium could be viewed as a measuring device for what happens on the field, taking a crowd of undifferentiated average sentiments and splitting them into cheering and booing branches. (Don’t hit me! 🙃)


I’m sorry Mike, but they are categorically different. Superpositions are separable, entanglements are inseparable. For details, I recommend chapter 5.3.2 Entanglement in Scott Aaronson’s Quantum Computing Lecture Notes PDF, but simply put, given two quantum states, we take the tensor product:

Which is fine until we have an entangled state like this:

Which is called a Singlet or Bell Pair. But there is no way to decompose this into separate states, because we require a×c=1/2 and b×d=1/2 (so none of a, b, c, or d, can be zero), but also require a×d=0 and b×c=0 (which obviously requires some zeros somewhere).
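The factoring argument can be checked mechanically. A minimal sketch, under my own assumptions: write a two-qubit state with amplitudes (c00, c01, c10, c11) for |00>, |01>, |10>, |11>. A product state (a|0> + b|1>)(c|0> + d|1>) has c00 = a×c, c01 = a×d, c10 = b×c, c11 = b×d, so c00×c11 - c01×c10 must be zero, and for two qubits a zero value also guarantees the state factors. The function name, the amplitude labels, and the 1/√2 normalization of the example states are illustrative choices; the conclusion does not depend on the particular nonzero values.

```python
import math


def is_product_state(amplitudes, tol=1e-12):
    """Return True if a two-qubit pure state factors into two
    single-qubit states. amplitudes = (c00, c01, c10, c11)."""
    c00, c01, c10, c11 = amplitudes
    # For a product state c00*c11 == (a*c)*(b*d) == (a*d)*(b*c) == c01*c10.
    return abs(c00 * c11 - c01 * c10) < tol


r = 1 / math.sqrt(2)

bell_pair = (r, 0.0, 0.0, r)             # (|00> + |11>) / sqrt(2)
singlet = (0.0, r, -r, 0.0)              # (|01> - |10>) / sqrt(2)
both_superposed = (0.5, 0.5, 0.5, 0.5)   # each qubit is (|0> + |1>) / sqrt(2)

for name, state in [("Bell pair", bell_pair),
                    ("singlet", singlet),
                    ("both superposed", both_superposed)]:
    verdict = "separable" if is_product_state(state) else "entangled"
    print(f"{name}: {verdict}")
```

The last example is the interesting one: both qubits are individually in superposition, yet the joint state still factors, so superposition alone does not imply entanglement.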

Meanwhile, a superposition is just:

In her Spooky Action blog post, Hossenfelder is saying the same thing I am. We’re in complete agreement on this one.

The stadium analogy… you’re such a literalist! The point is a huge collection of people (aka particles) each contributing to the whole. The MWI effectively asserts that one person walking into that crowd can take over all those conversations in an instant.


I’m starting to wonder if maybe we’re talking past each other.

Reviewing Aaronson’s discussion in 5.3.1 and 5.3.2, he’s comparing qubits that are not yet entangled with ones that are. So we’re talking about operations on separate superpositions vs operations on a composite superposition. Those are different, but they’re still both superpositions. It’s just one has multiple particles in it with correlations from their interaction(s). In both cases, taking a measurement collapses the superposition. I’m sure there are differences as well in which QC operations you can perform on them, but it doesn’t seem to change the fact that they’re both superpositions.

Hossenfelder’s point in the video was that Einstein’s issue was with collapsing superpositions in general. He’s just as concerned with one particle whose wave function has grown across vast distances instantly collapsing as he is with an entangled pair in superposition that have been separated by the same distances. It’s just that the entangled one served to illustrate the issue more vividly in the EPR paper.


Well, all I can say is I stand by what I said.

And, yes, I agree with Hossenfelder.


Unless we can prove retrocausation happens, the theory is worthless. Can it be proven in any way?


Good question. Under time symmetry, anything we could interpret as being retrocausal we could also interpret as being simply causal. So (entropy aside) evidence for one is evidence for the other. Hossenfelder seems to be arguing that there can be time sequenced correlations we could interpret as retrocausal that can’t be interpreted the other way. That does seem unlikely to me.


“Under time symmetry, anything we could interpret as being retrocausal we could also interpret as being simply causal. So (entropy aside) evidence for one is evidence for the other.”

Only if you are willing to accept irrealism about the wavefunction, or Everettianism (absence of a single definite outcome), or Bohmian non-locality, or the conspiracy-theory kind of superdeterminism. If you’re looking for a Get Out Of Bell’s Theorem Cheap card, and those other options are too expensive, future-input dependence to the rescue!

BUT. Such future-input dependence has to be stronger than merely viewing causal connections from the future looking back, if it’s to solve the Bell problem. This has a cost. To wit, you can no longer pick a single foliation(? – is that the right word?), or time-according-to-some-inertial-reference-frame, and explain the whole history of the universe with that plus the laws of nature. No, now you need an initial and final time, to explain whatever comes in between. And if time is open-ended at least in one direction (the future), now you have an infinite set of states in your minimum-sized theory of everything.


Not sure if I follow all the logic here, but Hossenfelder takes an epistemic stance toward the wave function. She sees it as emergent from something else. It’s not really clear to me why it being emergent should cause us to take an anti-real stance. Thermodynamics is emergent yet real. Heck, anything above quantum fields is really emergent.

My understanding is that Everettian and Bohmian physics are both time symmetric, at least in principle. Of course in practice, decoherence is a one-way process, but that’s an entropic irreversibility. (And if not allowed to go too far, there have been experiments that succeeded in recohering a system.)

I think I follow what you’re saying about needing to have both the initial and final states to be able to calculate the intermediate states. But it’s not clear to me such a regime is only depending on time symmetry anymore. That said, it’s very possible I’m missing something here.


FWIW, WRT the unreality of the wavefunction, the problem is that the wavefunction lives in Hilbert space, which has way more dimensions than three (in some cases an infinite number), and each of those uses complex numbers.

So, the question is how that complex mathematical object can be physically made real. Interference tells us something is there, but wavefunction interference is weird. It’s not like the normal superposition of waves (like water or sound). It’s a quantum beast.


My bad, my explanation was too fast. I agree that if you need both the initial and final states to calculate the intermediates, then you’ve gone beyond time symmetry. Like you say, interpretations like Everett are time symmetric but you only need one time, the specification of the wavefunction at that time. From there you can calculate the wavefunction at any other time.

Vaidman’s take on the Two State Vector Formalism seems to be that it’s *a* way, a very natural one, to do QM, so he might not quibble with an Everettian who wants to just derive everything from one time-slice. But I think Hossenfelder would? I have only skimmed her paper so far.


I thought wrong about what Hossenfelder wants; she DOES want to be able to derive everything from any one time-slice:

She then goes on to resist charges of finetuning by constructing what I think is an overly narrow definition of finetuning. One which requires you to already know a frequentist based probability distribution before you can call something improbable.


Hossenfelder:

So, translating into my own words, I think she is saying that Psi is an abstraction in roughly the way “the average American wage” is an abstraction; there need not be anyone who makes exactly 56,123.45 dollars per year (or whatever).


Isn’t she just saying that superdeterminism can be based upon time sequenced correlations like any other theory, and that simply because the detector settings are a variable in the underlying theory doesn’t mean they cause events to occur in the past any more than running Newton’s laws backwards means the Knicks winning the game means Charles Oakley makes the shot?

Also, I kind of understood the really weird thing about superdeterminism to be that the correlations are a property of the universe, and not necessarily something that comes into being through specific interactions between certain particles. But she does kind of talk on both sides of this point, saying in one place that “this is just how it is” (an everywhere correlation) and then later suggesting certain correlations only emerge in time… But maybe they only emerge practically in time? I don’t know…


I don’t either. She does at times seem to hint that the future-input dependency thing is really just a convenience to recognize the vast collection of causal effects leading to the correlations. But if so, then it seems like we’re back to the most common complaint about superdeterminism, that the entire universe conspires to enforce these correlations.

I struggle to see how she can have it both ways. Either the causal history matters or it doesn’t. If it does, then the Bell loophole tests seem to show that superdeterminism is pretty unlikely. If it doesn’t, then it means future conditions must impinge on past ones in a manner not readily apparent when we reverse the sequence.

If there’s a path through these two problematic options, I’m currently missing it.


“Either the causal history matters or it doesn’t.” In the option where it does matter, tell me if this is a good paraphrase: from the history alone, and ignoring the future-input, one could in principle use the hidden variables to predict the outcome.

And also whether this is a good paraphrase: given all the Bell’s-Theorem-inspired tests that physicists have performed, and given the assumptions at play in Bell’s Theorem, for superdeterminism, only a conspiratorial/finetuned initial condition can give the observed results if future inputs play no part.

Assuming I’ve paraphrased correctly, I think you have a good point.


Thanks Paul. Good summation. And I appreciate you pointing out that it depends on Bell’s assumptions, since there is another assumption other than statistical independence to question, that measurements have single outcomes.


As a mathematician, I understand the elegance of deterministic theories. But, apart from that, I cannot see any reason to suppose that the cosmos is deterministic.

From my study of human cognition, it seems evident (at least to me) that scientific theories are human constructs. We do not read these theories from nature. We construct them as tools to study nature. So we should expect them to be an imperfect fit.


How do you feel about mathematical abstractions? Are you a platonist, nominalist, empiricist, or something else? I ask because I’ve read that most mathematicians are mathematical platonists.

On scientific theories, a lot depends on when you’re ready to stop and declare victory. If you buy into the mechanistic philosophy, or something like it, then your assumption is that we don’t truly understand something until we have a deterministic theory. On the other hand, if you reject the mechanistic philosophy and see it as a mistaken concept, then you’re more likely to be satisfied with an indeterministic theory.

I have to admit I lean toward the mechanistic philosophy. But I’m also aware that we can never be sure that we’re really done. Whatever our current paradigm, it could be replaced in the future with something else. Although the new outlook will have to replicate all the successes of the current one. Which implies we’re getting closer and closer to some truth “out there”, although we may never reach it completely. In that sense, my biggest criticism of Bohr and Heisenberg isn’t their interpretation, but the fact that they declared quantum mechanics complete and resisted further exploration.


No, I am not a platonist. I’m a fictionalist, and I guess that’s just a fancy term for a nominalist.

I’m fine with mechanism. Yes, science is mainly mechanical. But at best we get approximations. When our mechanical approximations don’t quite work, we look at the residuals and attempt to mechanize those, too. And science thereby encompasses more and more.

But what if reality is not actually mechanizable? In that case the process must eventually break down. And it looks to me as if quantum weirdness is what you should expect when you reach that point where it breaks down.

Could be. I’ve often wondered if QM isn’t just beyond the edge of what we can employ any meaningful metaphors to understand. From here on, we might only be able to understand things mathematically, if at all. If so, then interpretations in general are just misguided attempts to cram those mechanics into something our primate brains can deal with. Maybe we’re like the characters in a video game trying to understand the hardware in terms of the game’s rules.

But it doesn’t seem productive to just assume that. We won’t know if we don’t try to understand. If we stay stuck, I hope there are always people trying to push that boundary.

Thanks for pointing out that her paper uses retrocausality! (That she doesn’t like the word retrocausal is understandable.) Did she say something similar in the video? I stopped watching about halfway through. Usually, “superdeterminism” is just a name for a grand coincidence, almost a conspiracy theory, where the universe just makes sure we don’t do the experiments that would reveal the (to use Hossenfelder’s concept) statistical non-independence. Like the near-conspiracy in your little diagram from Wikipedia. That would be, um, crazy.

But retrocausation, or to use her better term, future-input dependence, that’s not crazy at all. It’s just weird, and weird has to be taken as a given in this business.

There are other issues, depending where one goes with the future-input dependence idea. I’ll try to raise them in comments on some other comments, where they already seem to be coming up.

Oh, that first “comment” was actually Mike’s post:

That’s exactly how it’s supposed to work. Check out the Wikipedia articles on the Transactional Interpretation of QM and on the Two-State Vector Formalism.

I don’t think she discussed the retrocausality in the video. She does mention that only the state of the measuring device at the time of measurement is important, but left out the reasons why that would be so. Honestly, so much of it was a rant against men and a strawman version of their views that I almost dismissed the whole thing. But she shared the preprint link on Twitter and I was curious.

I thought about the retrocausal interpretations. But the transactional one is reportedly explicitly non-local, which isn’t what Hossenfelder is aiming for. I’m less familiar with the two state vector one. It’s interesting that Lev Vaidman is a proponent since he’s also a proponent of many-worlds. He’s actually the author of the SEP article on many-worlds. He seems to see the two as compatible. Interesting.

Hi Mike,

Happy Holidays, first of all!

I much enjoyed the topic here as well as the article by Hossenfelder. To your point about “retrocausation” and “future-time dependence,” I understood her to be saying that a) experimental outcomes depend on the preparation and the detector settings, and that b) the correlation between the two does not need to exist at the time of the experimental preparation, but can come into being dynamically at some point in the intervening time. She also notes that if the deeper superdeterministic theory underlying quantum mechanics was understood, one could in principle take an initial state billions of years ago, and discover when the necessary correlations come into being. I didn’t understand her to be saying any information is traveling backwards in time to create the correlations.

On causality issues, I think she is simply pointing out that physicists speak of causality as a relationship between correlated events along the arrow of time, while those who study “causal discovery algorithms” ignore the light cone or underlying physics of their models and just make changes to see what the impacts are. When a given change impacts a result, then it could be considered causal, regardless of its position in the light cone relative to the outcome. But I think her point is that this type of analysis often ignores the constraints of doing physics, and that various authors have mixed and matched the sort of causality described in the causal discovery algorithms with the sort used by physicists, and the results are physically meaningless statements that sound really good on paper. The point with respect to superdeterminism as I see it is that changing a detector setting changes the outcome of an experiment, and so it appears that one is doing something in the present that is causing an effect in the past, because presumably the choice of detector setting in the present would “select” for particular hidden variables that must have been determined in the past. But correlation isn’t always causation, and Hossenfelder’s point is that a) physically this is nonsense, and b) the correlations between hidden variables and detector settings can arise in time and are simply not required to exist at all until the time of measurement.

On the denial of wave function realism, it seems a reasonable approach to make for superdeterminism because isn’t the very essence of superdeterminism (as Hossenfelder describes it) the notion that there are hidden variables that will explain the aggregate interference patterns of classical quantum experiments as well as accurately describe the trajectory of each individual photon?

Lastly, I have no idea what to make of superdeterminism, but I think it’s quite fascinating to think about! I am certainly drawn to the notion of an underlying connectivity between all the elements of the universe, and I do tend to think in some fashion something like that exists. But I don’t know if it’s ultimately useful or not. Because if the variables are forever hidden then what sort of predictions can be made? My personal thought-sense-intuition-fantasy is that the universe has some sort of very deep correlations akin to those described in this paper, but at the same time is not deterministic.

Michael

Hi Michael,

Happy Holidays!

I actually take Hossenfelder to be saying that the preparation itself doesn’t matter, just the states at the time of measurement (she emphasizes that last part). So she seems to be saying that the causal history isn’t the issue, and the paper (which it sounds like you read) asserts that the Bell loophole tests, while interesting, aren’t relevant. Of course, this only works if future-input dependence is an operationally meaningful concept.

I have some sympathy with the idea of people conflating different types of causality. But I struggled to see the distinctions Hossenfelder identified. Interventionist causes seem like causes with an agent in their light cone making deliberate decisions. But at the level of physics, it’s still, as you noted, isolated consistent time sequenced correlations. But I’m open to the possibility that I’m missing something here.

If the hidden variables do explain the interference pattern, it would be an answer to the question I asked in the post. But too often when people take an anti-real stance toward the wave function, they go on to simply ignore those interference effects. The trick, it seems to me, is identifying those hidden variables in a way that reproduces all the predictions of the wave function (except the ones we find objectionable, naturally). The theories that have managed to do it so far (like pilot-wave) have been explicitly non-local, and as a result don’t seem to reconcile with quantum field theory. Adding to quantum mechanics seems pretty fraught.

I agree with much of what you say in your final paragraph, except that my intuition is that reality is ultimately deterministic, although it may not be a determinism we can ever cash out. Operationally we may always have to live with indeterminism. But I definitely think someone should push on this and see where it leads. We might just get more confirmation of quantum mechanics, but it’s always possible we don’t.

I’m pretty sure she thinks the preparation history matters, because it is one of the essential variables in her simplified superdeterminism equation. What she explicitly says doesn’t matter is whether or not the decisive correlations with detector settings exist at the time of the preparation. As long as those correlations arise at some point in time between the preparation and the measurement, and exist at the time of measurement itself, then the notion of superdeterminism is satisfied.

Agreed on the hidden variables and interference pattern paragraph you wrote. I may be giving Hossenfelder too much credit on this one. When she wrote that current QM is a theory of ensembles that gives correct average values, and that a superdeterministic hidden variable theory would accurately explain individual particle interactions, that must mean things like the double-slit experiment would be handled as well…

On your other comment, that those who do not like superdeterminism complain that the entire universe “conspires” to enforce the correlations: I think that’s using more words than necessary to just say they don’t like it. I mean, that’s the essence of it: that everything is fundamentally interrelated in some way such that statistical independence doesn’t apply. I can see why it wouldn’t be a likable idea, but that framing of the dislike is like saying “I don’t like peanut butter because it tastes too damn much like peanuts.” I think the one thing to Hossenfelder’s credit in the paper on this point is that she is not just arguing philosophical points, but pushing for experimentation. She says clearly that another point of detraction is that superdeterminism likely doesn’t reproduce QM exactly, only in a theoretical limit. And this means there should be means of testing whether or not this gap exists. She gave, as you noted, a few examples in her paper. To your own position, why not just test it if we can do so?

I hate to quote this paper, because as you noted above, there’s a lot of ambiguity and she seems to be taking both tacks at times. Still, this seems to reflect the sentiment she expresses in the video.

https://arxiv.org/abs/2010.01324

From page 4 (emphasis added):

Of course, that has to be balanced by statements like this later in the paper. From page 11:

I disagree that simply saying we don’t like the consequences is equivalent to concern about improbable correlations. I think that omits key facts, facts that pertain to the conclusion. It ignores the logic. Hossenfelder does that in both the video (with all the ranting about free will) and in the paper. If she disagrees about the correlations being improbable, then she should provide her own logic. Of course, her answer is to simply say there are hidden variables, but until those are actually demonstrated, it’s just an IOU, a statement of faith that somewhere somehow there is a theory better than the one we have in hand that’s more friendly to her intuitions.

All that said, improbable is not impossible. We’re agreed the key thing is to test the very small and cold and see if, despite the conventional logic, there are deterministic mechanics that just haven’t been isolated yet.

Mike, I think you misinterpreted my remarks about the preparation mattering. You added emphasis to this statement from the paper: “Because the setting at preparation was always irrelevant, and so was the exact way in which these settings came about.” What Hossenfelder is saying is the detector settings at the time of preparation are not relevant. (That’s in part because she’s acknowledging they could be changed later.) She’s not saying, to my mind, the experimental preparation itself and the associated history is irrelevant. The detector settings are only relevant when the measurement is made, but the history contained in the experimental preparation obviously matters, because it is this history that produces the experimental conditions being studied… In fact she notes that very small differences in the preparation could have large impacts on the result. The preparation and the hidden variables contained therein are the variable lambda in the paper. The variable theta is the detector settings.

As to improbable correlations, I was simply responding to this statement you made: “…then it seems like we’re back to the most common complaint about superdeterminism, that the entire universe conspires to enforce these correlations.” All I was saying is that if the complaint is simply that the universe contains these correlations, that’s not a reason to not like the idea. That is the idea. I see now you meant the word “conspire” to be a stand-in for an improbability argument, which I didn’t catch. I can appreciate an improbability argument.

That said, I don’t have a strong opinion on how probable statistical independence is. I view many probability arguments as simply rhetorical devices. I mean, in other branches of science very unlikely things are deemed plausible because if there are enough other universes or enough eons of time, then lots of unlikely things can happen. But as you said yourself, and as (I hope) Hossenfelder intended it: the rubber hits the road in experiments, if they can be conceptualized and implemented.

Michael,

Sorry for misinterpreting you. Obviously Hossenfelder can’t be saying the history is completely irrelevant. (Although I think she makes it easy to interpret her that way.) She’s a determinist, which means the history should always be relevant. But she does seem to be saying the Bell loophole tests are irrelevant. I don’t know.

I can see how my use of “conspire” might lead you to think it was a rhetorical statement rather than a descriptive one. When talking science or philosophy, I often try to use words to convey a lot of information quickly, which can be misinterpreted as emotional rhetoric. I actually suspect the same disconnect might be happening between Hossenfelder and the people she’s criticizing for being pejorative toward superdeterminism.

I think the improbability arises because it’s very difficult to imagine a causal chain that would enforce such correlations. It’s like saying there’s no light sensor in my fridge, that the light goes on and off randomly, but just happens to always be on when I open the door. Once I’ve validated the correlation by taking my cue from the early universe on whether to open the door at a specific time, the idea that such a correlation comes from some prior cause looks profoundly improbable.

But at the end of the day, the universe doesn’t care what we think is improbable, or for that matter even what we think is impossible. If it did, we’d be living on an Earth at the center of the universe and heavier objects would fall faster than lighter ones.

“If the hidden variables do explain the interference pattern, it would be an answer to the question I asked in the post.”

To me, the hidden variables do explain the interference pattern. This conclusion is derived from the fact that particles with a mass greater than the infamous buckyball built from 60 carbon atoms do not display wave type characteristics. Theories have to hold universally because that is the “standard” of a necessary truth; and if they do not, they have to be rejected.

The ridiculous rebuttal is: “Well, if it isn’t a wave function causing the interference pattern, what else could it be? And furthermore, since we do not know what else it could be, then it ‘has’ to be a wave function.” This rationale is equivalent to a jurist coming to the conclusion that, since they do not know who else could have committed the murder, it must be the accused. I just don’t get this type of rationale…

The 60 atom molecule was once the largest object with quantum effects. But that ceiling is constantly being raised:

https://www.quantamagazine.org/how-big-can-the-quantum-world-be-physicists-probe-the-limits-20210818/

…as well as:

https://physicsworld.com/a/quantum-entanglement-of-two-macroscopic-objects-is-the-physics-world-2021-breakthrough-of-the-year/

The thing about the wave function, is it has been tested now for almost a century. Anyone can say it’s not the real answer, that there’s a better one we just haven’t found yet. But it’s the answer we currently do have, one that has passed innumerable empirical tests to several decimal places. Any alternate answer has to pass the same tests. That means that whatever the wave function is modeling, there’s at least some emergent reality it’s capturing. Doesn’t mean we won’t discover the limits of its applicability tomorrow, but like Newton’s laws, it’ll remain useful for the domain in which it has been validated.

The rationale you or anyone else is relying upon to justify a position on wave function is the same rationale used to justify an innocent person rotting in a jail cell.

“Let’s not forget that the case brought forth by the prosecutor is the best one that was available at the time, therefore we should accept it. And furthermore, an innocent person rotting in a jail cell is equally useful because it appeases the angst of the victim’s families.”

There is an alternate explanation for the interference pattern of the double-slit, one that is grounded in the empirical evidence of science, an explanation that even a child could understand. That alternate explanation is based upon the sound, empirical evidence that has already been discovered, then catalogued and filed by the physical sciences. It’s all a matter of connecting the dots, but somehow the impervious wall of an original assumption always gets in the way… Go figure, eh?

I spent last night catching up on YouTube videos including Sabine Hossenfelder’s superdeterminism video. Usually, her videos make complete sense to me, but this one had a lot more speculation and hand-waving than usual. Superdeterminism is something she’s been working on and talking about for quite a while — I think it’s a hook she’s hung hopes of fame on. (Something I’ve seen theoretical physicists do fairly often.)

Frankly, I think it’s a load of dingo’s kidneys and she’s ironically gotten lost in the math. Her evangelism and negativity towards criticism is a huge red flag for me. The correct stance, I think, is, “Yes, this is a crazy idea that argues against all of human history and experience, but it’s not ruled out, so there’s some chance it might be right. Let’s see if we can test it.” She did get that last part right, so time will tell.

Yeah, she’s having the, “My conclusions are obvious and anyone who disagrees is an idiot,” impulse. Of course, we all have that impulse, but it pays to keep it in check, to remember that what seems obvious is often more about our biases than reality. And implying people are idiots is usually not a good way to get them to engage with the idea. I agree she’d be on firmer ground with a, “This is a possibility that does seem bizarre, but we should still explore it,” vibe.

I do agree with her that we should do the exploration. My own suspicion is that it won’t validate the idea. Most likely it’ll just be more confirmation of quantum mechanics. But maybe it’ll point to something completely unexpected.

Yep. 🤞

I just read a blog post about lepton pair production at ATLAS — an experiment that might have turned up a deviation from the SM, but the paper they released shows none. The SM wins again.

Meanwhile, observations of stars orbiting the BH in our galaxy show that GR has no deviation in the extreme regimes of speed and gravity. So, GR wins again, too.

Quite the conundrum!

Hi Mike,

I watched the video when it came out and was a bit nonplussed because she didn’t seem to really explain why superdeterminism is weird. She presented it as just basically the same idea as determinism, and implied that people didn’t like it only because they didn’t like determinism.

She did not explain that superdeterminism means that the universe has been ordained since the Big Bang as if by coincidence to guarantee that these correlations appear billions of years later.

This may be because she doesn’t see it that way, because she conceives of something more akin to retrocausality. But as far as I can see bringing retrocausality into it means it’s no longer a local theory. Retrocausality is more or less the same thing as superluminal communication — if you can send signals faster than light you can send signals back in time, and vice versa. But the idea of a local hidden variable is just that you don’t need to communicate faster than light — no spooky action at a distance. The idea of superdeterminism was that if you had these correlations from the time of the Big Bang then you could do without spooky action at a distance. But retrocausality just is spooky action at a distance. Retrocausality therefore isn’t really superdeterminism as I understand it.

That doesn’t mean either idea is ruled out. But I really think she underplayed how weird both ideas are, especially superdeterminism. If you allow the theory to be non-local then I suspect you end up with Bohmian mechanics. So I don’t really understand how her interpretation is different. But then I haven’t read the paper.

Hi DM,

I agree she doesn’t do a good job describing what superdeterminism actually entails, and why most physicists don’t see it as likely. I tried to make clear in my post why they don’t. It wasn’t always like that. Obviously the reason all those Bell loophole experiments happened is that physicists wanted to be sure.

She’s actually very clear in the paper that she’s not talking about the type of retrocausality that involves information going backward in time. I agree if we go there, it’s just another type of non-locality. I think that’s why the explicitly retrocausal interpretations are usually labeled as non-local. She’s trying for something more grounded. But as I discussed with others above, it’s not clear what that is. If it’s just an instrumental way to deal with the effects of a complex and disparate causal history, then we’re back to the widely recognized difficulties.

Honestly I had pretty much the same reaction as you when I first watched the video. But she tweeted a link to her preprint, and the idea that there might actually be a rationale for a local single world deterministic theory piqued my curiosity. Unfortunately the paper seems to be filled with a lot of ambiguity. (Although as a non-physicist, I’m open to the possibility that it’s just over my head.) At this point, I don’t know that we can completely close the door on it, but my credence in it is pretty low.

So you think maybe she’s just agnostic on where the correlations come from, and saying that we can just work with the correlations and not need to ask where they came from?

Isn’t this basically just “shut up and calculate”? Or is there more to it, do you think?

Hi Mike,

Reading the paper now. Some reactions…

“It means that fundamentally everything in the universe is connected with everything else, if subtly so”

It’s clear she wants the interpretation to be local, but this does not seem local to me. But perhaps I’m failing to interpret it correctly.

“Superdeterminism is presently the only known consistent description of nature that is local, deterministic, and can give rise to the observed correlations of quantum mechanics”

Where does MWI fail to meet this description, I wonder? Does she think it’s inconsistent? Even if so, this is surely a point of contention, so needs some justification. Sean Carroll would surely disagree with this, as MWI is local and deterministic and explains the observed correlations of quantum mechanics.

“In a superdeterministic theory, the evolution of the prepared state depends on what the detector setting is at the time of measurement.

I want to stress that this is not an interpretation on my behalf, this is simply what the mathematics behind Bell’s inequality really says.”

No it isn’t. The mathematics she explains just says that the hidden variables are correlated with the detector settings at the time of measurement. This doesn’t have to mean that the evolution of the prepared state depends on what the detector setting is at the time of measurement. That’s one way to get them correlated, sure. But another way would be for the hidden variables to be correlated with the prepared state at the time of preparation, as superdeterminism is usually interpreted. Her interpretation may be the more reasonable (that remains to be seen) but it’s still an interpretation and not just what the mathematics says.
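
To make the statistical independence point concrete, here is a minimal sketch in Python. It is a toy illustration only, not the model from Hossenfelder’s paper, and every name in it (`E_independent`, `E_dependent`, `chsh`, the choice of angles) is a hypothetical label picked for this example. It compares three correlation functions in a CHSH test: a local hidden variable whose distribution ignores the detector settings, the quantum prediction for a spin singlet, and a hidden variable whose distribution is allowed to depend on the settings. The sketch says nothing about how such a dependence would come about, which is exactly the open question.

```python
import math


def sign(x):
    return 1 if x >= 0 else -1


def chsh(E, a, a2, b, b2):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return E(a, b) - E(a, b2) + E(a2, b) + E(a2, b2)


def E_independent(a, b, n=100_000):
    """Statistical independence holds: the hidden variable lam is an angle
    spread uniformly over the circle, whatever the settings a and b are."""
    total = 0
    for k in range(n):
        lam = 2 * math.pi * (k + 0.5) / n   # uniform grid over lam
        A = sign(math.cos(lam - a))          # outcome at detector 1, set by lam and a only
        B = -sign(math.cos(lam - b))         # outcome at detector 2, set by lam and b only
        total += A * B
    return total / n


def E_quantum(a, b):
    """Quantum mechanical prediction for the spin singlet state."""
    return -math.cos(a - b)


def E_dependent(a, b):
    """Statistical independence violated (toy assumption): the hidden variable
    is a pair of predetermined outcomes (A, B), and its distribution
    rho(lam | a, b) is simply chosen to depend on the settings."""
    p_opposite = (1 + math.cos(a - b)) / 2   # total weight on pairs with A * B = -1
    rho = {
        (+1, -1): p_opposite / 2,
        (-1, +1): p_opposite / 2,
        (+1, +1): (1 - p_opposite) / 2,
        (-1, -1): (1 - p_opposite) / 2,
    }
    return sum(p * A * B for (A, B), p in rho.items())


# Settings a, a', b, b' that maximise the quantum CHSH value.
angles = (0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
S_independent = chsh(E_independent, *angles)
S_quantum = chsh(E_quantum, *angles)
S_dependent = chsh(E_dependent, *angles)
print(abs(S_independent), abs(S_quantum), abs(S_dependent))
```

With these angles the independent model sits at the Bell bound of 2, while the quantum prediction and the setting-dependent model both reach 2√2 ≈ 2.83. The code only shows that dropping statistical independence is enough to match the quantum correlations. Whether the dependence comes from the evolution of the prepared state or from correlations fixed far in the past is not something this arithmetic can decide.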

Hi DM,

On shut up and calculate, she’s talking a bit too much to tell anyone else to just shut up. 🙂 But she is a professed instrumentalist.

On everything being connected, yeah, there are two ways to interpret that. One is pretty much tautological, that everything impinges on and is impinged on by its environment. But the other is the stronger sense that does sort of get us into pilot-wave like territory.

Hossenfelder did a video criticizing many-worlds a while back. I watched and read it numerous times without ever being able to understand her point. (I actually find this to be so with a substantial portion of her material.) http://backreaction.blogspot.com/2019/09/the-trouble-with-many-worlds.html

On many-worlds being local, it depends on our criteria for “local”. If we mean local dynamics, then many-worlds is local. But if we mean separability, one of Einstein’s criteria, then it isn’t. So it doesn’t have any action at a distance, but it does lean heavily on the inseparability of entanglement. It’s worth noting that not everyone agrees on this. David Deutsch, using the Heisenberg picture, claims to demonstrate separability, but other many-worlds advocates, like David Wallace and Lev Vaidman, seem skeptical.

Myself, separability strikes me as more of an accounting issue than anything else, so I remain puzzled why, strictly speaking, inseparability counts as non-locality. But when discussions of spatially separated entangled particles and world split timelines come up, it’s simpler to just concede that point.

Yeah, when someone says something along the lines of, “This is just what the math really says,” they’re invariably sharing their opinion with sanctimony, typically in an attempt to stifle disagreement.

Hi Mike,

I haven’t got through the whole paper yet, partly because I’ve been spending too much time binge-watching the anime Parasyte on Netflix.

But mulling it over, I think I kind of see her argument, but I don’t think it works.

To try to explain it as clearly and charitably as possible:

I think the idea is that the future-input dependence works similarly to past-input dependence but in reverse. This doesn’t mean retro-causality because this is a contradiction in terms. The laws of physics are reversible and don’t have a concept of causality built into them. We can trace a story of the evolution of state either forwards in time or backwards in time. For most processes, it’s easier to trace the evolution forwards in time because we need less information to do so. We don’t need to know the precise state of all the atoms in the universe to see what’s going to happen when an egg falls to the kitchen floor. All you need to know is pretty localised. It’s going to smash. But as the consequences of that smashing disperse throughout the environment (as soundwaves etc), the time-reversal where the egg spontaneously reassembles cannot be predicted without knowing the precise state of atoms over an increasingly wide area.

I think Hossenfelder thinks that future input dependence is the same as this but in reverse. It’s no more a conspiracy that very finely tuned states of particles miraculously come together to ensure the observed Bell correlations than is the time-reversal of the egg where the whole world conspires to nudge the atoms of the egg to reassemble. In both cases there’s a nice, compressed way to describe a state and predict what will happen. In the case of the egg, we see an egg falling and we know it’s going to smash in the future. In the case of Bell correlations, we know the correlation at the time of measurement so we can predict the evolution of the state backwards in time. But even in the latter case there is a story where we can explain what happens by the ordinary forward evolution of a physical system, it’s just that it looks a lot more conspiratorial, like the time reversal of the egg, because we’re used to working forwards rather than backwards in our predictions.

I think this is the gist of her idea, but I’m open to correction.

I don’t think it works because it seems to imply the interaction of two physical systems with opposing arrows of time. I very recently read From Eternity to Here by Sean Carroll, which is a fascinating exploration of exactly these kinds of issues. He doesn’t discuss exactly this idea but he does discuss similar ideas in a cosmological context. Future input dependence where the nice compressed description is in the future rather than the past would seem to imply that we have a system with lower entropy in the future interacting with ordinary physical systems with lower entropies in the past. It’s hard to make this work, because you can only really fix lower entropy at one point. It’s very hard to create a system that will reliably and consistently evolve from low entropy in one context (e.g. a classical description) in the past to low entropy in another context (e.g. Bell correlations) in the future. You can start from the low entropy point in the future and work backwards, but that will tend to mess up the low entropy point in the past. Or you can start from the low entropy point in the past, which will tend to mess up the low entropy point in the future. This is why it’s incoherent to imagine that we could ever interact with another part of space where time’s arrow is moving in another direction, but it’s not incoherent to imagine that there are regions which are completely causally disconnected where time’s arrow is reversed (this may be the case on the other temporal side of the Big Bang, for instance). The only way you can get the kind of balance required by Hossenfelder seems to me to have the astronomically unlikely conspiratorial setup since the Big Bang.

Thanks DM. I think I agree with everything you say here. Overall, Hossenfelder’s view is still the traditional superdeterministic one. Her reasoning might perhaps provide an instrumentalist approach to calculating it, but it still depends on compatible correlations being consistently determined by prior events in the shared causal history of the measured and measuring system, events that appear to have been experimentally isolated to the early universe, but which somehow enforce the correlations through all the perturbations between now and then.

It’s conceivable the universe works this way, but it really seems to need new physics. Which of course is exactly her position with the hidden variables. But saying there’s new physics out there, and identifying it, are two very different things.

I’ve seen a lot of recommendations for Parasyte. Would you describe it as horror? It sounds like it from the description, which is why I haven’t jumped in. Horror not being my thing.

One of these days I do hope to read From Eternity to Here.

Hey Mike. Sorry I’m late to the party. I read your post, watched the video, read (a lot of?) the paper, and then my brain hurt.

As you may know, I’m sympathetic to Rovelli, and apparently Hossenfelder, in that I think quantum mechanics and the wave function are epistemic, not ontic. I’ve read most of the discussions, and here are some thoughts.

When you talk about wave function realism, would you say that sound waves are real in the same way? To me, the mathematics of waves describes something happening, but there is nothing there (no wave thing) separate from molecules doing what they do. Same with water waves, although what the material is doing there is completely different. Same with electrons going thru slits.

Regarding determinism and superdeterminism, I’m Agnostic with a capital A, meaning not only that I don’t know, but I cannot know, and you cannot know, and there can be no proof either way. The only thing we can do is find mechanisms, but any mechanism may have sub-mechanisms. We can dig as far as possible, but no further.

Regarding retrocausation, here’s a thought I had: suppose the preparation is letting some fish into a tank. Determinism says what kind of fish, say bass or bluegill in some combination, is determined at time 0, but hidden. At time 1, you’re going to choose a lure. You don’t get to see the lure you choose. You only get to count how many fish you catch. The lure you choose will make a difference, but you don’t know what the difference will be.

Okay, my brain hurts again.

*

Hey James,

Yeah, I’m with you on brain hurting.

I do think sound and water waves are real. But I accept the reality of emergent phenomena. (Emergence in a weak sense.) Temperature is emergent, but real. As is entropy. Or the chair I’m sitting in. Heck, we’re emergent, and I certainly think we’re real. Something doesn’t have to be fundamental to be real, otherwise nothing is real but quantum fields, and maybe not even those.

The question, of course, is what does the wave function emerge from? I don’t think anyone knows. It’s possible the wave, as an excitation in the quantum field, is fundamental. David Deutsch thinks that the fundamental reality is point particles, with the wave composed of different versions of the particle in parallel worlds interfering with each other. Or we could view the particle as a fragment of the wave: the same ontology as the particle one, with a different description. Or we could privilege one of those fragments as the real particle, and the rest as ghostly potentials, which puts us in pilot-wave territory.

But we don’t have to answer these questions with any kind of finality to see the wave function as capturing something, emergent or otherwise, that’s real. We only have to accept that its predictions are accurate and that it would be unlikely for something so accurate to not reflect reality at some level.

I’d be more prepared to accept the epistemic view if someone could provide alternate explanations for the interference effects, or at least what appear to be interference effects. Saying there is an explanation, but we just don’t know it, strikes me as a vague IOU that something somewhere somehow will rescue us from implications we dislike.

Given the history of science, I’m pretty leery of conclusions about what we will never know. There are too many examples of things that started out as metaphysical speculation (atoms, the composition of the stars, entanglement, etc) that eventually became measurable phenomena.

On the fish and lure analogy, consider if someone had provided evidence that the fish-lure correlation could not have been determined during the preparation (either of the tank or your gear). We might imagine that maybe the cause of the correlation is before the preparation. But suppose you made your choice of lure based on signals from the early universe. Now we’re saying that the fish lure correlations began then. That’s superdeterminism.

The good news is that, with QM, if your brain isn’t hurting, then you likely didn’t understand the problem. 🙂

1. I think the trouble you’re having with explaining the interfering effects is that you’re still thinking of particles as really small, approximately spherical, solid things. So you’re thinking the electron must be going through one slit or the other, at least when it gets observed on the way. This (I’m pretty sure) is wrong. The electron always goes through both slits, and interacts with the wall in an interference pattern. It’s just that if something interacts with (observes) the electron before it hits the wall, the interference pattern is shifted randomly. This is what the “quantum eraser” experiment showed.

2. I’m not saying we will never know more about QM. I’m hopeful someone will come up with a better description of what underlies “particles”. How is string theory doing, anyway? And what I am saying is not that we can never get to the bottom. I’m saying that we can never know if we are at the bottom. Whatever is the current bottom, we identify by what it does, but we don’t know how it does what it does. If we learn how it does what it does, we have a new, lower, bottom. Whatever is at the current bottom is multiply realizable. It could be an angel flipping a coin, or reading a script, so, undetermined or determined.

*

1. The view you’re describing and criticizing isn’t mine. It’s actually much closer to those who take an epistemic view of the wave function, including Bohr, Heisenberg, and Rovelli. I agree that holding to a particle-only ontology is very problematic. I described my view above, which is a wave ontology that I’m open to being something emergent. I’m also open to alternatives, but they need to be concrete.

2. I agree. We can never know whether we’ve reached the bottom. But whatever layer we are at, we can model the dynamics, and if validated by testing, that model will supervene on, constrain, the possibilities of those lower layers. Whatever we learn about those lower layers, it will have to be compatible with what we’ve learned about the layers above it. In other words, the emergent reality will remain.

1. Given that every current description of waves that I know of is not a description of a wave-thing, but a description of material objects doing wave-like things, I don’t know what a “wave ontology” is. Is there a motion ontology, or a spin ontology?

2. I agree with what you say here. My point is: whatever is at (current) bottom, you can’t know if it is determined or stochastic.

*

1. Maybe the issue here is that you’re the one stuck, not on a particle ontology, but on the idea of some distinct object rather than a diffuse wave. A wave is a wave, following the dynamics of the wave function. I understand we can think of it more generically in quantum field theory as a wave of excitation of a quantum field. Your next question should be, “What is a field?” I don’t know. We’re at that boundary we’ve been discussing in 2.

In terms of motion, the central mystery of QM is that quantum systems move like ever spreading waves but hit like localized points. I think decoherence and entanglement are at least part of the answer. I’ll fully admit to not having a spin ontology. Everyone acknowledges the pictures of little balls spinning is cartoonish. I’ve read that gyrating field lines may be the best way to visualize it, but won’t claim to understand it. Some physicists like Max Tegmark seem to regard any visualization as misleading.

2. In terms of determinism or randomness, it comes down to when we’re prepared to declare victory. A determinist like Einstein would refuse to do so until we have a deterministic model. A non-determinist would be fine declaring a random process as fundamental. As you noted, we never know whether we’ve hit the bottom. But I think there’s more involved than just determinism vs indeterminism.

“Wave ontology” would be what’s known as wave mechanics, which is studied at both the classical level (sound and water waves) and the quantum level (wavefunction). What’s different is that QM uses complex-valued mathematics. As Mike says, a central mystery in QM with regard to wave mechanics is what in the hell is waving???

FWIW: A point not often mentioned wrt two-slit experiments is that, in similar experiments with water or sound waves, the null points are the result of opposing wave energy cancelling each other out. A positive wave crest meets a negative wave crest, and the result is flatness. But wave energy is very much present. And the water or air the waves move through is present all along the detector surface.

But in particle versions, the null points are the result of probability cancelling such that the particles never go there. Photons aren’t cancelled out, they avoid. And they’re especially prone to go where probabilities add. Another difference is that we don’t, at the detector, see the positive and negative crests we do in mechanical waves. There are only light areas and dark areas.
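
As a rough illustration of the difference described above, here is a minimal sketch, assuming a toy setup with two equal-weight paths and a hypothetical helper named `detection_probabilities`. It is not anyone's model of a real experiment. It only contrasts adding complex amplitudes before squaring (the quantum rule) with adding the two probabilities directly.

```python
import cmath
import math


def detection_probabilities(phase_difference):
    """Return (quantum, classical) relative detection probabilities
    at a point on the screen for two equal-weight paths.

    Toy assumption: each path alone contributes an amplitude of
    magnitude 0.5, i.e. a probability of 0.25 on its own.
    """
    a1 = 0.5
    a2 = 0.5 * cmath.exp(1j * phase_difference)
    quantum = abs(a1 + a2) ** 2                 # add amplitudes, then square
    classical = abs(a1) ** 2 + abs(a2) ** 2     # add probabilities directly
    return quantum, classical


if __name__ == "__main__":
    for label, phi in [("in phase", 0.0), ("quarter turn", math.pi / 2), ("out of phase", math.pi)]:
        q, c = detection_probabilities(phi)
        print(f"{label:>12}: quantum={q:.3f}  classical={c:.3f}")
```

In this sketch the "classical" sum never changes with the phase, while the amplitude sum swings between a maximum where the paths agree and essentially zero where they oppose, which is the pattern of places the particles favor and places they avoid.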

I think saying they’re probabilities, and only probabilities, is essentially the epistemic view of the wave function. But this would involve probabilities interfering with each other, a strange concept that’s never made sense to me. One of the things I’ve learned is that very few physicists can describe this without putting their finger on the epistemic vs ontic scale.

Indeed! As I said, (quantum) interference is, I feel, one of the big mysteries. What is interfering? Mathematically, it’s the phase of the complex numbers used to describe things, not their amplitudes (as in regular wave mechanics). With complex numbers, the amplitude is always 1.0. It is all, as you say, epistemological.

Obviously there has to be something ontological, but no one has a clue what it is. How do we reify complex numbers?

Happy New Year, Mike! In 2022 may all your pains be sham-pains! 🍾🍾🍾

And I’m sure you saw it’s now been determined the complex numbers aren’t optional. The real number versions apparently don’t hold water.

Happy New Year to you too Wyrd!

Yep, did see that (although I’ve also seen that some still aren’t entirely convinced, but par for the course).

Hi Mike,

I think there’s part of Hossenfelder’s account we haven’t touched on enough (or at all), which is her reliance on the idea of attractors (at least in the paper).

As you’re probably aware, an attractor can be thought of as a predictable macroscopic state which is reached from a wide variety of different earlier microstates, and where the system seems to stay once it gets there. What’s more, it can be a bit like what I said we couldn’t have in the future: a nice compressed description. So, I wasn’t quite right in what I said earlier. In Hossenfelder’s view, attractors explain how future states can drive the evolution of a system, i.e. the system evolves towards the future attractor state.

I still think her idea doesn’t work, but I need to be more precise.

I think for Hossenfelder to rely on attractors, we need more than one attractor state, one for each way the detector could be set up. It’s ok for a system to have many attractors, but we usually expect that which attractor wins depends on the initial state of the system. But for Hossenfelder, the hidden variables will evolve towards one or the other, depending on which detector settings are chosen in future. But now I don’t think we can really think of the attractor as determining the evolution of the system any more, because the choice of which attractor is activated by the detector settings is what matters, and this is not itself determined by the attractor.
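
To make the ordinary expectation concrete, here is a minimal sketch, assuming a textbook one-dimensional toy system, dx/dt = x - x³, which has two attractors at x = +1 and x = -1. The function name `settle` and the step sizes are illustrative choices, and nothing here comes from Hossenfelder's paper. It only shows the usual case, in which the attractor that wins is fixed by the initial state.

```python
def settle(x0, dt=0.01, steps=5000):
    """Euler-integrate dx/dt = x - x**3 starting from x0 and return the final x.

    The toy system has stable fixed points (attractors) at x = +1 and x = -1,
    and an unstable fixed point at x = 0 separating their basins.
    """
    x = x0
    for _ in range(steps):
        x += dt * (x - x ** 3)
    return x


if __name__ == "__main__":
    for start in (0.1, 2.0, -0.1, -3.0):
        print(f"start={start:+.1f} -> settles near {settle(start):+.4f}")
```

Every positive start ends near +1 and every negative start ends near -1, however far apart the starting points are. The selection is made by the past state, which is why a scheme where the selection is made by a future detector setting sits oddly with the usual picture.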

I’m not really sure I’ve been precise enough even now. I guess I’m not comfortable enough on either the mathematics or the QM of this, but Hossenfelder’s account seems off to me in any case.

Parasyte is certainly technically horror, but it didn’t particularly feel like it to me. It’s grotesque and gory, with monsters a little like those in John Carpenter’s The Thing, but it’s a cartoon so who cares. I didn’t find it scary or disgusting at all personally and it never made me want to wince or cringe, but maybe that’s just me. It felt more like a thriller to me. I’m not into horror either, but I do watch the occasional horror if I think it has good dramatic elements (for example the excellent Midnight Mass on Netflix).

Hi DM,

I have heard of attractors; mostly in connection with biology, and most notably with Karl Friston’s free energy principle. I remember Hossenfelder’s mention of them but had forgotten about it. I agree that we expect the attractor to be encoded in the initial state of the system. But what’s the system in this context? Are we saying valid measurement correlations are an attractor state for the whole universe? That just seems a bizarrely specific and anthropocentric thing if so.

I think the reality here is that Hossenfelder is fishing for a rationale. She’s convinced of the conclusion and is looking for logic to get her there. And under her assumptions (determinism, locality, one world), it might be seen as a rational place to start. I prefer to start with what’s reliably known and reason forward from there, although that inevitably leaves us having to question one or more of her assumptions.

On horror, my aversion to it isn’t about the gore or scary parts. There are elements of that in a lot of fiction I enjoy. What I dislike is the overall message that horrible things are going to happen and there’s nothing anyone can do about it. I know a lot of people get some kind of catharsis from that. I don’t. For example, I love Attack on Titan, despite its horrific elements, primarily because the characters in the story fight for their survival. (I am a little worried about how it will end. If it ends with the horror message, I won’t be happy.) The problem with something described as horror, is I never know whether it’s constructed in the typical horror template, or transcends it. For example, Dracula is horror, but people ultimately fight back effectively in it, so it becomes a story I can get into. Not that I necessarily insist on a happy ending, but I don’t like ignominious ones.

Hi Mike,

I think you can get attractors in all sorts of systems. Even generically.