The journal Trends in Cognitive Sciences has an interesting paper up: Dimensions of Animal Consciousness. After noting the current consensus that some form of consciousness is present in at least mammals, birds, and cephalopods, it looks at how to evaluate it in various species. The authors take the position that consciousness can be present in varying degrees, but argue that it can’t be assessed on any single scale without missing important dimensions of variation. So, they argue, a multidimensional approach is needed.

Their proposed dimensions are:

- P-richness

- E-richness

- Unity

- Temporality

- Selfhood

P-richness describes the perceptual capabilities of the animal: the level of detail it can perceive in its environment in any specific modality (such as sight, hearing, etc.). That richness can be resolved into different components. For example, visual experience depends on bandwidth (how much visual content can be experienced at one time), acuity (the number of differences the animal is sensitive to), and categorization power (the ability to sort perceptual properties into high-level categories). These components can complicate comparisons between species, unless all or most of them are higher in one species than in the other.

To probe p-richness rigorously, conscious and unconscious perception must be distinguished. The paper discusses experimental scenarios associated with the presence or absence of consciousness in humans, such as blindsight, discrimination learning, and other techniques.

E-richness stands for evaluative richness. This is about affects, the conscious feeling of emotions, including valence (feeling good or bad). Similar to p-richness, it can have components, including its own versions of bandwidth and acuity. The authors express doubt about attributing emotions like anxiety or grief to animals, but note that more primal ones like hunger, thirst, or pain are expected to be widespread. (Although they caution against making assumptions without evidence.)

E-richness can be tested with experiments probing motivational trade-offs (such as rats having to decide whether to enter a cold chamber to get at a sugar solution). A crossmodal trade-off (somatosensory vs taste for the rats) is stronger evidence for consciousness, since it requires crossmodal integration. (Although apparently there is evidence that such integration is not a guaranteed indicator.)

Unity involves integration at a specific point in time. Conscious experience in healthy human adults is highly integrated and unified.

However, various pathologies can undermine that unity. One example is split-brain patients, whose cortical hemispheres have had the connections between them severed. Careful experiments show that the two hemispheres no longer communicate with each other, leading to what appear to be two conscious experiences.

Apparently birds are natural split brains. They don’t have the corpus callosum connecting the hemispheres that mammals have, implying that a bird may be a couple of conscious entities intimately cooperating with each other. And the nervous systems of cephalopods include central cerebral ganglia, but also local nerve rings controlling each arm that appear to be at least somewhat independent.

Experiments to test unity in animals are inspired by the experiments on split-brain patients, and involve testing whether information sensed on one side of the body can affect behavior on the other side, or in the case of cephalopods, how much coordination exists between the central senses and the various arms. Other indicators may include whether the animal can have one hemisphere asleep while the other is active, a trait observed in some birds, dolphins, and seals.

Temporality is integration across time, essentially experiencing episodes rather than just snapshots. In its more sophisticated form, the authors argue, this includes mental time travel.

Evidence for this type of capability includes perception of apparent motion, particularly in visual illusions such as the color phi illusion (two differently colored dots flashed in rapid succession at different positions, producing the illusion of a single dot moving from one position to the other and changing color halfway). It also includes tests for episodic memory and future planning.

Selfhood, or self-consciousness, includes awareness of oneself as distinct from the world. In its simplest form, this is awareness of one’s own body. The more sophisticated versions include awareness of one’s own stream of experiences, and mindreading (theory of mind), and at the most sophisticated level, mindreading turned inward. Humans possess all of these. There is evidence that apes and corvids possess some mindreading capabilities, but little evidence that it’s turned inward.

The authors present a table summarizing possible experiments to test and measure these dimensions.

Toward the end, the authors discuss some challenges, one of which is an admission that the dimensions could easily get much more numerous and complicated than what’s presented here. But they cite the need for pragmatism to keep the count down to a reasonable number. Another challenge is the need to keep the dimensions distinct from each other, and also to keep them usable for species comparisons.

A few quick observations. The paper struck me as too stingy about which species it credits with episodic memory. I’ve read studies implying it’s more widespread in mammals and birds, albeit with widely varying sophistication. Then again, maybe more evidence is needed to solidify this conclusion.

I initially thought the authors were too accepting of the mirror test. But they only take it as evidence for self-body awareness rather than self-mind awareness, which is probably the right way to see that test.

Overall, I found a lot to like in this paper. Assessing consciousness across multiple dimensions is, I think, a good approach. It has me wondering if I should find a way to work it into my usual hierarchy, although the goals of that hierarchy are different from those of this framework.

What do you think of the dimensions? Or the overall approach?

Ha, yes! Configuration spaces — exactly what I’ve been posting about. So handy!

?? WordPress allows images, but not IMG tags?

I thought you might like this one. It seems to resonate with your recent post.

Of course, the authors here aren’t trying for a full and comprehensive accounting of consciousness, just a way to compare it across species. That allows them to cherry-pick the dimensions and keep them manageable. Although, as they admit, it makes them vulnerable to debate about whether they chose the right ones.

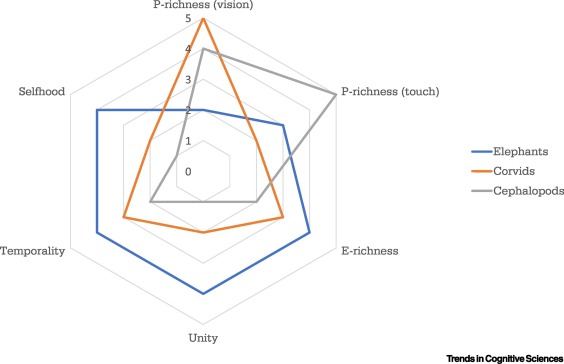

Sure, but even if they’ve picked the “wrong” ones the concept is still a powerful tool for analysis and communication. Here it nicely illustrates graphically how consciousness can have different “shapes” — somewhat like different regions in the space. Like how stouts and IPAs are both beers, but with very different shapes in config space.

True. And just talking about them, I think, reveals some important things about consciousness, and how whether a particular animal is conscious will not have a simple answer. How many things can you change about a beer and still consider it a beer?

“How many things can you change about a beer and still consider it a beer?”

Depends on how you define beer. 😀 😉

The difference with beer is there are objective definitions one can reference. The most restrictive one is the ancient German purity law, Reinheitsgebot, which defines beer as a fermented beverage made from malted barley and hops (and water and yeast). More, or less, and it couldn’t be called beer.

I tend to favor Reinheitsgebot beers, but for most people “beer” is any fermented malted grain beverage with a hops modifier, although most beers center on malted barley. In practice, other ingredients are often added to the mix (e.g., chocolate, coffee, fruit).

It’s generally pretty clear what’s a beer, but there are some borderline cases that people see different ways. If something other than hops is used as a bittering agent, many would question calling it beer (I would), and it’s possible to brew malted grain with no bittering at all. (I forget which was which, but in England long ago, “beer” and “ale” referred to hopped and unhopped fermented malt beverages. I think (paralleling the German law) “beer” was hopped.)

I’ve always thought of ale as a type of beer. But I’m pretty much the opposite of a beer connoisseur. So I guess an alternate bittering agent doesn’t count?

These days, yes, beers are broadly classified as ales or lagers depending on the yeast used (there are ale yeasts and lager yeasts, and they behave slightly differently).

Using bittering agents other than hops is one place where many think we’re not really talking about “beer” anymore. There’s probably a lot more latitude in the malted grain used than in the bittering. Very few doubt wheat beers are really beer, and rye and rice are very common. But not using hops? That seems more like some other kind of alcoholic beverage.

With no bittering, one ends up with something sweet and wine-like. “Barley wine” is a beer style with so much malt the alcohol content gets up to 12% or more, and there is almost no hops presence, it being overwhelmed by the malt and alcohol.

I think that dimensionality is the correct approach. But then, that’s because I think I understand the core features which will be found to be common to every dimension, and that different dimensions are simply different ways to make use of those core features.

Having said that, I will note that the biggest hurdle to overcome will be the notion of the dichotomy between conscious and unconscious perception. From my understanding, there is no such thing as unconscious perceptions. There are only conscious perceptions which can be accessed by a given system and conscious perceptions which are not accessible by that system.

*

On the core features, are you saying they all make use of the same neural substrate? Or just that they all work in a similar manner?

On unconscious perceptions, it comes down to how you define “consciousness”. Your definition is focused on representations, so I can see why you’d conclude there are no unconscious perceptions. However we label them, they can’t be reported or deliberated on. Their effects seem limited to automatic reactions.

On the core features, I’m saying they work in a similar manner, making use of mutual information via representations, possibly including information integrators (unitrackers) and/or multi-representational mechanisms (semantic pointers), but possibly some other mechanisms as well.

On unconscious perceptions, I think you’re falling into the trap of assuming a priori that there is one conscious system in your brain, and that’s the one that can make verbal reports. The split-brain experiments show there are at least two: one that can make verbal reports and one that can report via gestures, including drawing. What is the case when the halves are not split? The non-verbal one can still make reports, but it probably mostly makes those reports directly to the verbal one via the corpus callosum. And if you accept these two as separate but cooperating consciousnesses, there’s no good reason to assume a priori that there are not similarly cooperating, inter-reporting subsystems within each of those two. It’s even possible that these subsystems can deliberate, presumably to a significantly lesser degree, but still. First you have to decide what a “deliberating mechanism” is doing, and then look at the subsystems to see if they’re doing it.

*

On core features, cool. Thanks. That’s what I thought.

As I noted to someone else in this thread, I’m not as sure of the unity of human consciousness as the paper authors are. So I’m not necessarily averse to the points you make. And as I keep saying, it depends on how you define “consciousness”.

But for a lot of people, context matters, the context of why it feels like something to us to have a certain perception. I don’t think you get that context in any one sensory or motor system. You need integration across modalities and across the sensorium and motorium to get it. Of course, we do get that in each hemisphere, which is why it makes sense for many of us to say each is conscious. But I can’t see that we get it with, say, just my visual cortex.

I define deliberation as simulating alternative action-sensory scenarios, judging each by an affective reaction, working toward selecting a course of action. That seems to require access to integrated sensory and categorization functionality in the sensory regions, as well as motor and affective systems. It’s hard to imagine it happening in a meaningful way in an isolated specialty system.

Birds don’t have a corpus callosum?? Wow that’s wild. I think unity isn’t a dimension I’d have considered separately from p-richness, but I’ll have to read the paper and see their thoughts in more detail on this. Otherwise, I see a lot of overlap with the cognitive capacities I’ve placed in my hierarchy and think something like this is “just” a very nice way of presenting a multi-dimensional “thing” in one picture. I think I’d want to chart several examples using my own hierarchy to see if there’s truly any helpful difference. Thanks for the summary and share!

The birds not having connected hemispheres was my biggest what?!? in this paper. It was definitely…consciousness raising.

Something I forgot to mention about unity in the post: I actually think unity in human consciousness is overstated. The fact is, split-brain patients are able to lead relatively normal lives. There are some exceptions, but they generally don’t perceive themselves as two separate consciousnesses. That, to me, seems to signal our consciousness may not be nearly as unified as we often assume it is. The thing is, we’re only conscious of what we’re conscious of, and we’re not directly conscious of what we’re not conscious of, such as the hole in our visual field. If our conscious experience were fragmented, I don’t think we’d be conscious of the gaps.

The dimensions fit in my hierarchy pretty well, although it reminds me of something I don’t track with it, the degrees of sophistication involved with each capability.

Glad you found it useful!

Hello, what do you guys mean when you talk about cognitive hierarchies? I’ve read it more than twice in the comments section.

One of the things we’ve done is arrange the subcomponents of consciousness in hierarchies. Here’s a post on mine: https://selfawarepatterns.com/2019/09/08/layers-of-consciousness-september-2019-edition/

…although I need to do an updated version sometime soon. It was originally developed to point out what’s missing from very liberal definitions of consciousness.

Ed has one too: https://www.evphil.com/blog/consciousness-19-the-functions-of-consciousness

Ta, thanks. Yes these are hierarchies of consciousness and I incorporate 13 aspects of cognition in mine from a good paper I found on the evolution of cognition.

Hello Fred,

One thing to mention about these cognitive hierarchies (which I think Mike has stated before, and perhaps Ed agrees with as well) is that they’re essentially ways to characterize the vast collections of beliefs which reside in these fields. So their purpose is not to directly move the ball forward, but rather to help observers keep score. P-richness, E-richness, Unity, Temporality, and Selfhood, the factors we’re now considering, seem very much in this vein. And while Mike’s hierarchy essentially begins from the non-restrictive position of panpsychism and goes up to highly restrictive higher-order theories of consciousness, this account seems to take various popular characteristics associated with “human consciousness” and then tries to assess how much of that sort of thing a given animal seems to display. And as mentioned, are these emergent factors effective ones, or would others work better? How many should be included? So the point is essentially to conclude “Maybe [this animal] can effectively be quantified to have [this level of consciousness].”

Given the extreme softness associated with these fields, for many it’s not about moving the ball forward, but rather about trying to effectively tread water. But observe that Newton provided no hierarchies when he defined force to exist as the product of accelerated mass. Unfortunately our mental and behavioral sciences seem to have attained few such effective definitions, and mainly in quite specific fields off the center, such as economics. Effective reductions should some day exist regarding “consciousness” however, and I’d love to help right this ship!

Birds may coordinate hemispheres through the brainstem, and I would guess humans also do to some degree, which would account for the ability of split-brain patients to lead relatively normal lives. The corpus callosum may have developed to fill the need for coordination in larger brains, and it took over a lot of responsibilities from the brainstem.

Do you have any authoritative references for the connections between the brainstem halves? I occasionally read an assertion that there are connections there, and it seems plausible, but I haven’t been able to find anatomical details.

In any case, I think the information transfer at that level would be minimal compared to what we normally get with a functioning corpus callosum. Even the claims in Yair Pinto’s study, if you read the results carefully, show very limited communication between the hemispheres. And Michael Gazzaniga has argued Pinto and colleagues didn’t do enough to isolate sensory signalling between the hemispheres.

“When hemispheres contribute differentially to behaviors that recruit muscles bilaterally, such as for bimanual movement or vocal production, it is sometimes assumed that hemispheric coordination is achieved exclusively by the corpus callosum, a massive fiber bundle that connects the left and right cerebral hemispheres. This commissural system is evolutionarily recent and is only observed in placental mammals, yet hemispheric switching is observed in animals such as fish and birds that do not have a corpus callosum. A possible neural substrate for hemispheric coordination and switching might therefore include brainstem neuromodulatory systems. These are interconnected across the midline and project diffusely throughout the hemispheres, and could therefore differentially influence each hemisphere.”

https://journals.plos.org/plosbiology/article?id=10.1371/journal.pbio.0060269

Makes a lot of sense to me, since hemispheric coordination would be required even for such simple things as flying or walking.

Probably more evidence that the brainstem is the sine qua non for consciousness and almost everything else is elaboration. Possibly the gamma activity modulated by the brainstem is associated with it.

Thanks! I’m going to have to go through that paper.

Based on what the Dimensions paper authors said, it sounds like there’s still a lot of work to do here to figure out exactly what kind of information makes it across the hemispheres in various species and how. My own suspicion is that the cerebral connections in mammals evolved for a reason. I suspect we’ll find out that object detection can cross at the brainstem level, but object discrimination and planning, at least in mammals, require those cerebral ones. Birds may have evolved alternate mechanisms, although there’s no guarantee they involve unity.

What’s not to like? It certainly fits in with your ways of listing the various functions of consciousness, even if your series is more hierarchical (you seem to be looking for dependence relations among the functions?). Dirty little secret: basically all concepts are high-dimensional, not just “consciousness”. That’s what I was getting at in my comment about ordinary people vs philosophers on the “Hard Problem”. It’s the underestimation of the complexity of concepts, and the overestimation of our reflective access to their content, that leads philosophers to invent many “problems” that aren’t.

Definitely my hierarchy concerns the fact that the abilities build on each other. You can’t have e-richness (affects) without p-richness and reflexes. And you can’t have temporality (imagination) without p-richness and e-richness. And high selfhood requires introspection, which is only meaningful on top of all the others.

I agree that oversimplification creates pseudo-mysteries. Jennifer Corns, in relation to the philosophy of pain, calls it “the orthodoxy of simplicity”, which gives an impression that something magical is happening. If we admit that these are enormously complex phenomena, there’s still a lot of work needed to understand them, but they are revealed as things we can study and understand.

For what it’s worth, I would suggest what you call reflex and affect are actually different dimensions at the same level of hierarchy. They are both responses to perception. I would suggest you *can* have temporality/imagination without e-richness/affect. And you could likewise have selfhood without affect. Do you think otherwise?

*

I do think otherwise. For me, a reflex is an automatic motor response to either a simple stimulus, or to a perception.

But consider a reflex where, while the reflex is firing, higher order systems are signaled, giving those systems the option to allow or inhibit the motor output of the reflex. The reflex signal triggers a galaxy of associations, all of which together make up the affect, which serve as input into the higher order systems’ decision.

Now, it is true that a reflex alone can trigger an affect-display, which many, particularly many animal researchers, conflate with the affect. But there are many affect-displays which can happen unconsciously in humans, such as unguarded facial expressions.

Let’s just talk about responses to perceptions. I think we need to diagram the parts.

So let’s start with perception P. Here’s a “reflex” response:

P -> R, where R is a chain that ends in motor function M, so, P -> R(M1->->->M)

Now here’s an affect response:

P -> A, where A results in a “galaxy” of effects, so, P -> A( A1,A2,A3, …, An).

It’s possible that these are combined. So for example, within the reflex chain there might be a signal which activates both the motor chain and the affect set (say, M1 -> A and also M1 ->->->M). Likewise, some member of the affect galaxy could start the motor chain, say, A3 -> M1. But it’s also possible that they are completely separate.

So I’m saying temporality comes in when the system can reference a process, either P -> R or P -> A, in the absence of that actual response. But I don’t see why the system could not reference a reflex response (P -> R) in the complete absence of any affect response (P -> A). What am I missing?

*

I think the question to ask is, why does the temporality evolve? If it just comes into being and references reflexes, what’s its role? Affects give it a role in the sequence, a purpose for being, an adaptive benefit that causes it to be selected for.

Um, … temporality, knowing this happened and then that happened, is how you get to understanding causality, “that” happened because “this” happened.

I believe I am missing your point. How is affect necessary for giving temporality a role?

*

What adaptive benefit does understanding causality provide? Crucially, how are predicted and remembered outcomes judged?

(Hint: a consequence of bilateral amygdala damage can be an inability to make decisions.)

[Feeling Socratic, are we?]

Understanding causality provides the capability of achieving goals. Predicted outcomes are judged with respect to their proximity to the goal state.

I expect you want to say that goals are chosen based on anticipated affect, or something like that. While that is sometimes the case, I don’t think it is necessarily the case, unless you are going to broaden the meaning of “affect” to the point of abuse. Suppose I see a letter has arrived and is sitting on the table near the door. I decide on (I generate) the goal of seeing who the letter is addressed to. Are you going to say that affect is a necessary part of either my generating that goal or my attempting to achieve it?

*

[Sometimes it’s the best approach.]

What motivates you to generate that goal? For that matter, what motivates you to do anything?

Ok. I dunno. What motivates you to do anything?

*

Though there are many terms for it, I’m hoping that Mike provides an answer which begins with the letter “Q”.

In the end, the only thing that motivates anything in us are instincts. In a simple organism, that manifests as reflexes. In a more complex organism, the reflexive reaction serves as an impetus for reasoning (ranging from very simple to complex depending on the species). The name we give to the impetus from the reflexive reaction, along with its automatic associations, is “affect”, or emotional feeling.

This is what’s behind Hume’s famous observation:

If you can think of something else that motivates us, I’m interested to hear it.

[anticipating the suggestion of a necessary role for affect in goal creation]

A question: can the creation of a goal be a reflexive response?

*

Didn’t see this until after my response above, but I think I addressed it.

“The name we give to the impetus from the reflexive reaction, along with its automatic associations, is ‘affect’, or emotional feeling.”

I see. It’s a nomenclature issue. I do not give the name “affect” to instinct, although I may apply “affect” to the automatic associations, if those associations are of a certain type (specifically not bearing mutual information with respect to a specific incident so much as to a very broad range of possible incidents). Thus, I see it as possible to have instinct without affect-type associations.

*

I’ll acknowledge that the word “affect” is problematic, since it’s supposed to refer to a conscious feeling.

For example, in habits, there is typically an innate reflex, or combination of reflexes, which the habit, essentially a learned reflex, allows or inhibits in a particular way. The communication from the reflex to the habit circuitry can be just as complex as the conscious feeling, but we often aren’t conscious of it.

There are also people like Lisa Feldman Barrett, who would use the word “affect” to refer to just the feeling of the instinct, or collection of instincts, without the cognitive associations, and reserve the word “emotion” for the affect with those associations. Unfortunately, the terminology is a mess, because you have people like Antonio Damasio, who reserve the word “emotion” for the reflex, or what he calls “action programs.”

This may be why the paper authors went for “evaluative richness.”

All that said, you’re not going to get temporality without some sort of evaluative process already in place for it to build on. And except maybe in a newborn, I don’t think you ever get just the instinct without those learned associations, at least in any organism capable of associative learning.

I regularly feed crows in my backyard and like to observe them. I wonder how P-rich or E-rich their experiences are. Do they have two selves or one? Just wondering.

My take would be that they have one self, and their impulses and desires will be centered on that one self, but with two interacting engines of decision making.

I should note that the paper discusses evidence for “interocular transfer” between the eyes in the lower field of vision, but not in the upper field, except (bizarrely) in specific individuals. I wonder if some of that comes from an avian version of an optic chiasm.

Nice blog

As you know, my goal is to identify the parts and their roles. So when you say “I don’t think you ever get just the instinct without those learned associations, at least in any organism capable of associative learning”, I would say you’re right, but only with respect to extant organisms. That is natural selection’s method of generating evaluation, but it’s not the only way. Robots/computers can have a more direct method, but it’s still evaluation, without affect. It’s still instinctual, as generated by the designer of the evaluation mechanism, but possibly without resort to or result of affect.

*

Definitely there’s no particular reason an AI has to work the same way in its evaluation as a naturally evolved system. But it would likely still be a punishment / reward dynamic. We already see this in existing ANNs. So it would be affect like, although the system could be much more precise about when and if the affect needs to fire. Whether the result is what we would call an affect or emotion, I’m not sure is a fact of the matter. But then neither is whether such a system is conscious.

Odd that I see very little consideration in most papers such as this to sociality.

Excluding insects with colonies of organisms adopting very fixed relationships, most of the more apparently conscious species are social: primates, elephants, dolphins, corvids, wolves. Sociality is probably an indicator for selfhood, since it usually requires locating oneself in a social structure, understanding the motives of others, and managing relationships. I think this would likely put corvids higher on the selfhood scale than the diagram shows.

Social species in general are usually more intelligent than non-social ones. And it’s plausible that they have a more developed sense of selfhood than non-social ones. The trick is getting scientific evidence for it. My understanding is that, so far, the evidence is limited and controversial. An example is a paper I linked to a while back on dog metacognition. The evidence it presented seemed vulnerable to simpler explanations than actual metacognition.

But it’s worth noting that the diagram is hypothetical, a first guess by the authors, presented more as a type of model we should be aiming for than anything established by data.

The trick is getting the evidence for any of the five dimensions. It might be more useful to try to measure with strictly observable behaviors instead of dimensions that must be inferred indirectly.

Careful. Someone will accuse you of being a behaviorist. 🙂

But seriously, behavior, including report, is all we get. Some will say we also get things like brain scans, but those are only useful once we’ve already established correlations with experience.

But careful tests of behavior can tell us a lot. For example, the degree to which a species can discriminate between different colors, or patterns, with a food reward on the line, tells us about its p-richness. E-richness and temporality can be tested by a species’ ability to make value trade-off decisions, enduring a short-term negative affect for a positive one in the future.

Unity can be tested by whether information from a sense organ affects actions by parts of the body on the other side, or, in the case of cephalopods, by the degree to which the eyes can guide an isolated arm.

But establishing introspective selfhood is tough in any species that can’t talk. Metacognition has been established in some monkeys but the tests are complex and it’s possible limited intelligence is a confound in other species. Of course, it’s also possible that metacognition simply requires intelligence.

Shouldn’t p-richness be easily evaluated from investigations of their sensory apparatus? Dragonflies apparently have very wide-spectrum vision, seeing colors well into the UV, for example. Those sensory organs wouldn’t have developed without the mental machinery to process the information being fed in from their eyes, no?

Yes, we might think so. I think they have five different types of cones in their eyes, so their p-richness might even exceed that of humans in some sense. That’s where I think most of this attempt at ratings tends to fall apart. Are dragonflies more or less p-rich than humans? They can see a wider spectrum, but perhaps humans can pick up patterns better. Which is superior? Patterns? Spectrum? Movement? These characteristics evolved to meet the requirements of each species and its biological niche.

I find the way crows act regarding their dead very interesting and suggestive (of self-hood).

An alternative theory for crow funerals is that they are learning situations for understanding predators and dangers. But there are some weird accounts that would lead one to suspect more.

About four years ago, after I got interested in crows, I wrote a bit about them.

https://broadspeculations.com/2016/04/09/of-minds-and-crows/

One of the more impressive things to me was a story, from researchers who monitored groups of crows over many years, of a female leaving her family to live with another group many miles away. After several years, the female returned to her family and was accepted back into it. And then there are Marzluff’s studies of crows apparently passing information about particular faces across generations, and possibly across groups.

What sort of learning situation is thought to occur? I agree, I suspect more is going on.

In one study researchers wore face masks when they harassed crows. The crows learned to avoid people wearing those masks (so the crows were apparently identifying faces). Further, their offspring also avoided people wearing those masks which suggests the knowledge was passed down. Another case of individual identification and teaching.

My understanding is that this is crows observing the circumstances of the crow’s death and possibly showing younger crows about dangers.

One limitation in understanding other species is how little we know about which behaviors have been pre-wired by evolution. In one problem-solving test, a magpie put its head into a container to retrieve a stick. The crow wouldn’t do that, but the explanation might be that the crow, with a different evolutionary history, is pre-wired to avoid putting its head into what it judges to be a risky environment. So some choices that other species make may appear to us to be less than optimal but are logical choices based on genetic history.

Personally, I see incredibly cautious and risk-averse behavior. When I put out food, the crows usually swoop down in stages, from high to low and side to side, flying over the food. Then they wait, sometimes making alert-type calls. My suspicion is that they are trying to make any potential predator in nearby bushes reveal itself. Even while some of the group feed, one or more others are usually in the trees keeping a lookout.

I’m not sure what looking at a dead body would teach. [shrug]

Birds and small animals are very skittish. I’ve been trying to make friends with the crows, squirrels, and rabbits I see on my walks, but they want nothing to do with me.

[If I recall correctly, the crows did not avoid the people in the masks. They actively harassed them. Same as they do to eagles. Pretty sure the study was done on UW campus, which is down the street. Lotsa crows around here.]

Ha, even better! I’ve seen crows harass an eagle. Pretty funny, actually.

I think the harassing serves the function of trying to drive the animal from the vicinity, but also of alerting other crows to potential danger. Crows seem to make an assessment of the threat level. When crows are present, I can usually tell from their behavior when cats or other animals are entering the backyard. When many crows are loudly engaged, it is likely a hawk or, on occasion, a fox or coyote. I think I can usually distinguish the difference, but it would be hard for me to quantify it. By and large, a coyote or fox will generate even louder and faster alert calls than hawks do. It seems to be related to volume and speed. Some cats, the known predators, elicit an alert, but a much more subdued one. Other cats are almost ignored. Small hawks are also mostly ignored. Sometimes, if cats are eating the food that the crows want, the crows will loudly harass them to drive them off it.

Really fascinating. I learned a lot from your blog post, mainly about what has been observed in animals so far. The information about the split brain of birds, for example, is very cool. It will be nice to see how studies on animal consciousness progress, and I am personally wondering where it connects with the rationale of vegans.

Thanks! Glad you found it useful.

In terms of animal welfare, it seems likely that at least all mammals feel pain, and most animals have something at least analogous to it. But most animals don’t have a robust autobiographical self, meaning that when they’re killed, they’re not losing it the way a human would. Many vegans, I think, have a tendency to assume they do.

Re “The authors express doubt about attributing emotions like anxiety or grief to animals …” Apparently neither has had a dog or cat in their lives for very long. I have seen dogs go through what I can only describe as a full-scale anxiety attack. Our dog goes ape shit when a walk is even being hinted at. (So much for “animals live in the present.”) And I have seen dogs and cats become almost catatonic when a significant other dies. When my wife and I were deathly sick from the flu, every time I woke up I would see one of our two cats on guard at the end of the bed. They seemed to be doing shifts watching over us. (They were fed and watered using large self-feeding dispensers at the time, so they were neither hungry nor thirsty, but they may still have been worried about their meal tickets.)

I do not “know” exactly how these states are felt by animals, but I am parsimonious. I don’t think we would have developed one system and they another when we share common DNA, cellular machinery, and so much else.

The intuitions that our pets have human level emotions, such as anxiety or guilt, can be very strong. I’ve felt it myself. But that’s a model we build in our head, a theory of their mind, one we project onto them. Actually demonstrating the existence of higher level emotions in dogs and cats scientifically has proven pretty elusive.

Parsimony is going with the theory with the fewest number of assumptions. In animal cognition research, that means not attributing complex cognition when simpler cognition is enough to explain the behavior.

If a cat is manifesting behavioral problems and is prescribed an anti-depressant and gets better, wouldn’t the most parsimonious explanation be that the cat was experiencing anxiety?

https://www.medicinenet.com/pets/cat-health/treating_behavior_problems_in_cats.htm

I think you’re making assumptions about what is behind the behavioral problems, that it’s more complex emotions rather than simpler ones. It’s worth noting that anti-depressants work at a low level. They don’t automatically change a person’s emotions, just allow them to be changed.

What makes you think anxiety is complex?

Perhaps if you are thinking of some sort of metaphysical angst… but even most people don’t have that. Anxieties are about simpler things, like the potential loss of loved ones, failure, and insecurities around jobs and money.

I guess it depends on your definition of “anxiety”, but usually it’s understood as distress caused by anticipated events. It’s distinct from a flight reaction, such as the one a dog may go into when it realizes it’s on the way to the vet, as opposed to a general feeling of unease about a possible future vet visit. It’s a complex state that hasn’t been scientifically demonstrated in most animals. I think that’s the one the paper authors were referring to.

Many social animals do seem to experience separation anxiety, but that appears to be a simpler state in response to a more immediate situation.

When I moved into this place back in 2003, during the move lots of doors were open, and the wind flow caused the door into the laundry room to loudly slam shut just before my dog, Sam, was about to go through it. Scared her badly.

After that, on that first day I couldn’t drag her through that doorway if I tried. For several weeks she was anxious about it, but that anxiety faded over time until she finally replaced that memory with enough others where the doorway didn’t scare her.

Earlier today I read an article about what science has learned about training dogs, and it turns out negative reinforcement can damage their confidence, causing them to not try new things. That’s a form of constant low-level anxiety about negative consequences.

Anxiety in humans is, of course, complex, but given the spectrum of consciousness, it doesn’t seem at all unusual to me that animals would experience less complex forms. Further, given that animals are far more emotional beings than intellectual beings, they would be prone to emotional disturbances.

My dog was once in a certain corner of the house when lightning struck a tree right outside the house. Subsequently, anytime there was thunder, she got as far away from that corner as she could, or even scratched to be let outside, anything to get away from that spot.

Yep, there ya go. Sounds like “distress caused by anticipated events” to me!

I think “anticipated” is too strong a word. I’d say “associated.” She associated the sensory combination of that corner and thunder with a threat condition. She was fine with that corner any time there wasn’t thunder, and wasn’t that bothered by thunder when we weren’t home.

So you’re saying you don’t believe she thought it would happen again, she just associated the specific conditions with a past Bad Thing?

Maybe, but I think that does their minds a disservice. When it comes to emotions, animals seem more like us than not, and as I said above, this doesn’t surprise me at all. Animals are like children — who are also very emotional beings.

So let’s pick this apart. I’m curious about your take on this as a functionalist.

A cat has a similar brain to ours and uses the same neurotransmitters. It manifests an abnormal behavior. We give it an antidepressant that alleviates symptoms of anxiety in humans. The behavior ceases or reduces. The cat was or was not experiencing anxiety?

A human simulation in silicon has no brain similar to ours and uses none of the neurotransmitters that we use. It manifests an abnormal behavior. We give it an antidepressant. Is that the same drug we give humans or a simulated one? Let’s assume simulated. The behavior ceases or reduces. The simulation was or was not experiencing anxiety?

I don’t think we can say that the cat was experiencing anxiety. As I noted above, antidepressants work on subcortical reflexive regions. For insight on what I’m talking about here, check out this post from Joseph LeDoux: https://www.psychologytoday.com/us/blog/i-got-mind-tell-you/201508/whats-wrong-antianxiety-drugs

On the human simulation, I suppose it depends on what the “abnormal behavior” is. Assuming we’ve managed to simulate the functional details successfully, and a psychologist diagnoses the simulated person with an anxiety disorder, then there’s a good chance they’re experiencing anxiety. It’s worth noting that if we successfully simulate the cat’s brain, we should have the same result as with the actual cat. Of course, if we know how to do all this simulating, we probably have much better fixes for these issues.

I think Steve Ruis nailed it. If his cats and dogs continually display behavior which mimics human function (and countless corresponding observations exist beyond his), then parsimony suggests that there should often be common behavioral architecture at work. Otherwise we’d need extra models to account for what’s displayed — or the opposite of parsimony. But given that the field of psychology has not yet been able to agree upon effective reductions in this regard, it does make sense that many today would consider the human quite special.

Consider the possibility that all conscious entities are ultimately only concerned about how they presently feel, though contemplating their futures can cause them to feel “hopeful” (which feels good in this regard), and/or “worrisome” (which feels bad in this regard). Thus this feature should provide instant incentive for them to do things which promote hope as well as diminish worry regarding perceptions of what might come. This should effectively bond the present conscious entity with the perceived future conscious entity. (Furthermore “memory” seems to account for the connection of present to past.)

Talk of “anxiety” as if it’s an advanced dynamic reserved only for something as intelligent as the human seems to add unnecessary complexity to what should otherwise be pretty basic. It seems to me that, as social animals, cats and dogs might indeed feel true affection for others. Thus when the Ruises were ill, their cats could have been worried in that capacity, and/or they could have been worried about not being looked after. Note that to me the first actually seems substantially less advanced than the second.

“parsimony suggests that there should often be common behavioral architecture at work.”

Right. It seems to me like the parsimonious argument would be similar feelings are involved when the behavior is similar, not the other way around. I guess parsimony is in the eye of the beholder.

People often take parsimony to mean preferring the explanation they simply feel is most likely. But that’s not parsimony in the sense of Occam’s razor.

The Occam’s razor version, the one that is a useful heuristic in scientific reasoning, is to prefer the explanation with the minimum number of assumptions beyond the data. Each assumption beyond the data is an opportunity to be wrong.

Historically, this has been especially true for the assumptions we feel most strongly just must be right.

So your assumption is that the animal with a brain and nervous system that uses the same neurotransmitters and exhibits the same behavior and same response to various drugs as a human is not having the same feelings as the human.

Whereas, conceivably a simulated human without a brain or nervous system and no neurotransmitters that exhibits the same behavior and same response, albeit to a simulated drug not even the real thing, as a human could be having the same feelings as human.

Applying Occam’s razor, I can’t see how you could ever think a non-organic being could be conscious since any behavior you witness could simply be the actions of an automaton no matter how strongly you feel the being is conscious or how precisely it mimics a human.

I do not assume that, just because the same neurotransmitters are used, the same evaluative abilities are present. Comparing a 16-billion-neuron cortical system to a 0.76-billion-neuron one, it’s a major assumption that they are.

As I stipulated above, my conclusions about the simulated human assume the simulation is successful, which is, of course, an assumption, but assuming a broken simulation seemed unproductive for your question.

Asking whether an automated system is conscious is, I think, a meaningless question. It’s equivalent to asking how much humanness it has.

A better question is what its capabilities are. In assessing those, we should be just as cautious as with animals. Of course, with an engineered system, we have an advantage, since we presumably know what capabilities the engineers intended for it to have.

It is well to keep in mind it is just a heuristic, not a rule, and as you so often point out, nature is under no obligation to fulfill our expectations one way or the other.

Once we move beyond the assumptions necessary to explain the data, we’re indulging in our biases and prejudices. But beyond observation and rigorous extrapolation, the number of possible scenarios is vast. The probability that loose speculation with lots of assumptions just happens to hit on the infinitesimal fraction of scenarios that is reality, isn’t zero, but it’s not a good bet.

I’m not sure we can approach the topic of consciousness without assumptions. Functionalism itself is based on a huge assumption that the substrate doesn’t matter when there is no evidence or probably even any way of generating evidence that something non-biological can be conscious.

We only have evidence for functionality. And we have evidence for lots of biological functions being reproducible with technology (heart pumps, hip replacements, etc). Just in terms of the brain, the devices we’re using right now are doing things that once were only possible with human minds.

The idea that things will be different at some point as we recreate more of mental functionality is itself an assumption many privilege, but an assumption nonetheless.

Get back to me when we replace part of a brain.

Not exactly replacing a part of the brain, but I highlighted technology a while back that communicates directly with it.

https://selfawarepatterns.com/2020/01/24/stephen-mackniks-work-on-prosthetic-vision/

“…we’re indulging in our biases and prejudices.”

We all are, yes?

Do you find no role for logical analysis? Is it all bias and prejudice?

We’re certainly all prone to it. It helps to be aware of the tendency, to be cautious about the assumptions we’re making.

On logical analysis, note the point above: “and rigorous extrapolation”.

Bias and prejudice are an ever present danger. It’s typically easy to see in those we disagree with, but very hard to see in ourselves. The only ways I know to deal with that is the reality checks of empirical tests in science, and being willing to expose reasoning so others can try to find faults with it.

“We’re certainly all prone to it. It helps to be aware of the tendency, to be cautious about the assumptions we’re making.”

Your phrasing to me suggests you think you’re above it.

“On logical analysis, note the point above: ‘and rigorous extrapolation’.”

That part seemed a throw-away given the clause that followed about the vast number of scenarios.

“Bias and prejudice are an ever present danger. It’s typically easy to see in those we disagree with, but very hard to see in ourselves.”

Exactly my point, my friend.

“The only ways I know to deal with that is the reality checks of empirical tests in science, and being willing to expose reasoning so others can try to find faults with it.”

I completely agree. So what is the fault in the logical reasoning here?

We have in question similar brain systems — evolved from the same base — based on the same biological principles and substances. We have external observations of behavior that reasonably matches how we behave under similar circumstances. We have evidence that animals have emotional lives.

It seems overly cautious in the extreme to me to think something else is going on. Or that nothing is going on.

I think we can go too far with anthropomorphizing, but that hardly means the other extreme is correct. I doubt cats have existential crises, but even with humans, so-called angst often arises from the displacement of a real concern, perhaps a disappointment or loss. It does seem realistic to think that another mammal with a relatively large brain, with similar neurons and transmitters, and affected by the same drugs, might experience anxiety when a caregiver is missing, for example. I would have more doubt about whether a spider or crab might experience it.

Absolutely. If we want to apply Occam, the assumption that a simpler, smaller system would operate in a simpler, smaller way than a complex, larger system with the same basic architecture, substances, and principles carries less freight than the assumption that they would be significantly different, because the latter adds the requirement of explaining why they are so different.

“a bird may be a couple of conscious entities intimately cooperating with each other”

I’d heard about this in relation to cephalopods before, but not birds. For Sci-Fi purposes, I could see some interesting extraterrestrial characters being inspired by this idea.

Adrian Tchaikovsky’s Children of Time has an interesting exploration of the psychology and sociology of a civilization of uplifted octopuses. They make a distinction between their perceiving and emotional self and their “reach”, the parts of themselves that control their arms.

The question for birds is, would they even recognize that there’s a separation? (Split-brain patients generally don’t, although some do notice “alien hand” type effects, perhaps after they’ve been told about the experiments.)

I still have to read Children of Time. All sorts of things about it sound interesting to me.

Sorry, I used the wrong title above. Children of Time is excellent, and includes a fascinating exploration of uplifted spiders. It’s the sequel, Children of Ruin, that has the octopuses, as well as an actual alien intelligence. Both books I enjoyed and recommend!

If someone were to tell me that a perfect computer simulation of me would think it’s me exactly as I do, I’d say something like “Sorry but no, you don’t actually believe that”. A computer simulation of something, as the term is quite standardly known, essentially means a “model” of that entity rather than the entity itself. A computer simulation of a coming storm might provide us with useful data about the effects that it could have, but will not produce the sorts of things that the actual storm does. And this does seem true by definition. (I believe I’ve heard Wyrd remark that the only exception to this rule is that a good computer simulation of software function could be said to do exactly what’s simulated, which I guess I can go along with in a practical sense at least.)

My point is that even if people sometimes appear to strawman themselves by saying that a good computer simulation of me would actually think it’s me, I still wouldn’t play along. Instead I’d attempt to steelman their misguided assertion by suggesting that they should actually mean something which does make sense. If they’re naturalists then I’d suggest that they mean my brain uses some kind of physics to produce the qualia which ultimately exists as “me”, so if a machine other than my own body were to produce the same qualia that I experience over a given period of time, then the result would indeed be something else which essentially exists as “me” for that period. Thus our positions would instead align!

What if someone were to tell me that they believe qualia is created when certain information in the form of some medium, whether neuron firing, sheets of paper, or anything else, is properly converted into other information? Though I personally don’t consider this to be a causal process by which anything natural exists, that wouldn’t matter. If something did convert the right information into other information such that the qualia that I know of existence were produced, then there’d be two of us with the same experience. I find such steelmanning not only helpful in discussions with others, but to improve my perspective in general.

(And speaking of both simulated and real storms Mike, didn’t you just get another hurricane over there? Are you doing okay?)

Eric,

I’m noticing an increasing trend of you saying that you don’t think people believe what they tell you they believe. It is so wrong, you seem to think, that they can’t possibly be telling the truth. You seem to be wrapping yourself in layers of denial to avoid any chance of actually considering other views. Unfortunately, it doesn’t leave much room for productive conversation.

On the storm, I’m okay. We only caught the edge of it here. There are some people without power this morning, but that’s about it. Most of the devastation you see on TV happened in the western part of the state. Thanks for checking!

Mike,

I just think it’s wrong to say things are true when they’re false by definition. A computer model of something is, by definition, not the thing that it models, but rather a model of it, or “simulation”. A model of a town will not be a town, but rather a model of it. And a model of a model of a town should merely be a simulated version of the model. If one instead means the same kind of thing, then one should say a “copy” of the model. I guess this is why Wyrd permits that a simulation of a software program could do what an actual program does, though, to be pedantic, I’d say that the term “copy” would mean the same thing, while “simulation” would be somewhat different.

Anyway no one believes that a simulation of the weather will do what the weather does, though may potentially provide a useful model of it. Similarly I don’t think that anyone should say that a computer simulation of me could essentially be like me, which is to say experience what I do. This is false by definition. But if one would like to imply that there is a physics which constitutes “me”, and that a computer armed with that physics could theoretically produce the exact same qualia which constitutes such a conscious entity, then I agree. So here I consider myself to be steelmanning an erroneous statement.

Isn’t it your view that there is a physics by which qualia exist, even if that physics is processed information alone, and thus that a computer armed with that physics could theoretically produce something subjectively indistinguishable from what your brain produces? In that case I not only understand your view, but state it in a way that isn’t false by definition. But if that’s not your view, then please explain.

Eric,

I’m a computationalist. Which means that I see the brain as doing computation, and a successful simulation of it as essentially a re-implementation of that computation. To me, quibbling between “simulation” and “copy”, as long as the simulation is successful in terms of reproducing behavior, is meaningless.

Computation is a 100% physical process, so talking about the physics of qualia or whatever, as though it’s something separate and apart from that of computation, wouldn’t be computationalism.

Now, if you think computationalism is bunk, fair enough. A lot of people are in that camp. But I’m not one of them.

Mike,

I’m not talking about what is or isn’t bunk here. I’m talking about using terms which misrepresent what a given idea effectively means. Furthermore, the chief person who seems to have screwed this up, as far as I can tell, happens to be on my side rather than yours: John Searle himself. As I recall, all he was trying to say back in 1980 with his Chinese room thought experiment was that we should be skeptical of considering consciousness as “brain software”. Thus one appropriate title for the position he opposed would have been “softwarism” at least, if not full “informationism”. But instead your side was gifted with the far more expansive and palatable label of “computationalism”. It’s merely what this term literally implies that I’m challenging. The brain does of course function in the manner that a computer does. It’s scientifically uncontroversial that it accepts input information, processes it through “AND”, “OR” and “NOT” gates, and then provides output information to animate various instruments, such as the heart. As far as I know, Searle never challenged anything of the sort. It’s just that the term “computationalism”, which he naïvely gave his opposition, does imply this.

Then there’s the “functionalism” term, which I have no idea who first coined. I perceive this as “If something functions like something else in some manner, then it can effectively be assessed to be that way in that regard”. It’s pointless to coherently challenge something which is true by definition.

Interestingly I also object to using a term here which is false by definition, or “simulation”. In standard English it means “a model of something else”. I’m sure that you’ve heard things like “A model of rain doesn’t produce wetness” countless times. Why do people assert this? Because to them it’s inherently false to imply that a “simulation” of something could also exist as what’s being modeled, or thus that your side is fundamentally wrong.

I’m not talking about who’s right or who’s wrong here, however. I’m talking about the problem of using terms in ways which are interpreted differently, so that each side seems unable to communicate effectively with the other. Isn’t the theme of your next post that we need people to challenge us so that we might be able to develop more open minds? Using terms like “computationalism”, “functionalism” and “simulation” does not foster open minds, but rather hardens opposing camps. My suggestion would be for us to avoid terms which misrepresent a given idea when suitable alternatives exist, and also to avoid terms which render a given position true or false by definition.

Eric,

Computationalism goes back to the 1940s (at least), long predating Searle’s criticisms of it, although I don’t know when the precise term “computationalism” (or its alias: “computational theory of mind”) came into usage. Often “ism”s start as pejoratives, but Searle didn’t mention it in his 1980 paper. That said, I think the term as commonly understood, fairly describes the broad category of outlooks it refers to.

I seriously doubt that Searle would agree with your assessment of the science. And there are some scientists (Christof Koch comes to mind) who would agree with him.

I also think “functionalism”, again, as commonly understood, is a useful word for what it refers to, that the mind is what the brain does, not a thing that exists in addition to it.

On “simulation”, that’s not the word I usually choose, preferring “copy” or something along those lines, but it was how James asked the question. Usually “simulation” is preferred by anti-computationalists. It highlights the issues you’re bothered by. But as I noted above, if you are a computationalist, then a computational simulation of another computation is a copy or alternate implementation of that original computation. So for me, it’s just not worth making an issue of.

Anyway, I think your angst about this shows how difficult it is to live by your principle that one should always accept the other person’s definitions. Often it means conceding more than we’re comfortable with.

“I also think “functionalism”, again, as commonly understood, is a useful word for what it refers to, that the mind is what the brain does, not a thing that exists in addition to it”.

I’m not sure I’ve quite heard that definition before but I find it fairly problematic without additional qualification.

If mind is literally what the brain does, then only brains will ever have minds. Case closed.

If mind, however, is something the brain does but that could potentially be done by other things, then there are other problems. The problem comes in when we define what it is that the brain does. We could define it as neurons firing in sync. We could define it as sensory and information processing. But those two definitions lead to quite different conclusions about whether mind could be instantiated in something non-biological. The first definition would lead one to say no. The second might lead one to say yes. If we say both are true, then we are still limited to the biological.

If you lean towards the “sensory and information processing” view, in fact, you are not saying mind is what the brain does because the brain, assuming it does process information, processes it in a particular way with neurons, neuron assemblies, synaptic connections, and neurotransmitters. You are actually abstracting some subset of what the brain does and calling it mind.

Obviously that brief reference wasn’t meant to be rigorous or comprehensive. For that:

https://en.wikipedia.org/wiki/Functionalism_(philosophy_of_mind)

https://plato.stanford.edu/entries/functionalism/

The main issue with just focusing on neurons firing in sync is that it’s only a fragment of what’s going on. It’s like analyzing a car motor and focusing only on the firing order of the spark plugs.

We need to ask Dennett’s hard question: And then what happens? What is the causal relationship of the neurons firing in sync to sensory input, motor and hormonal output, and overall adaptive fitness? In other words, what is its functional role? The best explanation I’ve seen is that it represents a binding of a concept, a set of associations, that requires multiple brain regions, with each region exciting the other recurrently, leading to synchronized frequencies. It allows the concept to be held long enough for deliberation.

On abstracting some subset, there’s some truth in that, but it’s not arbitrary. It’s the subset that captures the brain’s functional role in producing adaptive outputs. It’s similar to understanding that the heart is a pump and can be replaced with a pump that works differently from the organic version. If we get the subset wrong, we won’t have a functional equivalent.

“The best explanation I’ve seen is that it represents a binding of a concept, a set of associations, that requires multiple brain regions, with each region exciting the other recurrently, leading to synchronized frequencies”.

That is pretty much what I just wrote about in my latest post.

My problem with the so-called functional approach is that mind/brain is not simply a set of inputs and outputs. It has an evolutionary role. It needed to develop within evolutionary limitations. Mimicking the inputs and outputs is no guarantee of creating a mind. Comparisons to pumps and levers don’t really work for me because subjectivity is by its nature something internal. How it works is just as important as what it does.

Just read and commented on your post.

I think we psyche ourselves out on subjectivity, make it something more than it is, a unique world and self model built from taking in information from a particular perspective and in a certain manner, influenced by past associations and innate dispositions.

Mike,

It does make sense to me that the “computational” term would go back to Alan Turing’s day. As I understand it people back then were quite excited by the emergence of computers to serve as an effective brain analogy. And indeed, the Turing test would serve as a hypothetical consciousness test as well.

I think a great deal of trouble could have been avoided on both sides, however, if Searle had had the foresight to give your side an appropriate name, such as “softwarism” or “informationism”. Then people who claim to be on his side might not think that he thought that “Brains ain’t computers”. In 1990 he wrote an article which at least tried to mend a decade of misperceptions. For one thing it stated: “First, I have not tried to prove that ‘a computer cannot think.’ Since anything that can be simulated computationally can be described as a computer, and since our brains can at some levels be simulated, it follows trivially that our brains are computers and they can certainly think.” That’s not quite my above “AND, OR, and NOT” observation, but it’s at least the right theme. http://beisecker.faculty.unlv.edu/Courses/PHIL%20330/Searle,%20Is%20the%20Brain's%20Mind%20a%20Computer%20Program.pdf

Furthermore, if it were understood that both sides think that brains do compute, then informationism shouldn’t be perceived as the only game in town in this regard. (Beyond appropriate names, better still would have been if he had directly proposed my “thumb pain” version of his thought experiment!)

You won’t be surprised to learn that if my first principle of epistemology were generally agreed upon, then I suspect that this mess would largely be resolved. If it were formally understood that there are no true or false definitions, but rather just more and less useful ones in a given context, then people shouldn’t tend to take a term like “computationalism” at its misleading face value. Here they should ask things like, “So how are you defining that term?” To which one might reply, “We believe that qualia exist when the proper information, given some medium (whether neurons, sheets of paper, or anything else), is properly processed into other information. This is to say, we believe that no dedicated qualia-producing mechanisms exist, but rather just an information-to-information conversion.”

Actually, by that definition I’d be a functionalist, even though I have a problem using a title which, as commonly understood, would be true by definition. I believe that what the brain (sometimes) does is produce mind, through associated mechanisms rather than through information processing alone.

Hi Mr Mike Smith and Eric,

There is so much more to read in the comment section here than there is in the post itself. In any case, this area of research is really still in its infancy, though it is highly commendable, and there will continue to be significant challenges ahead and many methodological issues to iron out.

Mike, given the relevance and quality of your post, I have decided to hyperlink your post to mine under the heading “Related Articles” in my post entitled “SoundEagle in Debating Animal Artistry and Musicality” at http://soundeagle.wordpress.com/2013/07/13/soundeagle-in-debating-animal-artistry-and-musicality/ so that my readers can access your post.

Happy New Year to both of you! May you find 2021 very much to your liking and highly conducive to your learning, thinking and blogging!

Thanks SoundEagle. Hope we all find 2021 a better year!

I had my eye on Laura because I know someone who lives down there and saw that it just clipped you. Glad to hear you “weathered” the storm okay!

Speaking of power outages, had two this summer. First time in many years that’s happened. The first one was due to a very close lightning strike. Bright flash, instant loud crack, and the whole neighborhood went dark. That one took 12 hours for them to fix (blown transformer, I assume). The other one I think was due to air conditioning overload. Happened during our heat wave. That one only lasted a few hours. I’ve come to realize that the power companies are just about the best utility service of them all. Their responsiveness and diligence really shine!

Thanks!

Strangely enough, the outages in Baton Rouge have been happening after the storm. There are a few people saying they saw wind damage. Maybe the utility crews had to cut power to work on something. I actually live right by my own power company so, at least so far, it’s been steady.

There were a ton of utility crews in Baton Rouge Wednesday and yesterday. They’re using BR as a staging area for repairing the other regions.

I agree on power being the most reliable of utility services. In truth, we only notice utilities when they’re either absent or not working correctly.