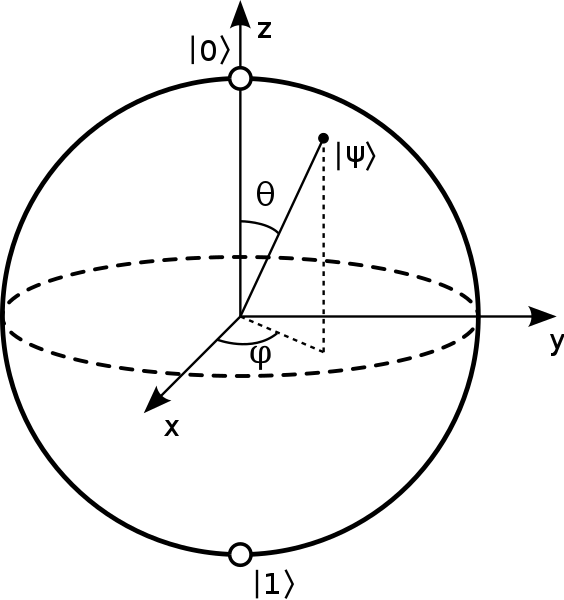

The main difference between a quantum computer and a classical one is the qubit. Qubits are like classical bits, in that they hold binary values of either 1 or 0, on or off, true or false, etc. However, qubits, being quantum objects, can be in a superposition of both states at once. The physical manifestation … Continue reading Thoughts about quantum computing and the wave function

Tag: Copenhagen interpretation

Quantum Reality

I just finished reading Jim Baggott's new book Quantum Reality: The Quest for the Real Meaning of Quantum Mechanics - a Game of Theories. I was attracted to it due to this part of the description: Although the theory quite obviously works, it leaves us chasing ghosts and phantoms; particles that are waves and waves … Continue reading Quantum Reality

Einstein, Schrodinger, and the reluctance to give up hard determinism

Ethan Siegel on his Starts With a Bang blog has an interesting review of Paul Halpern's new book on Einstein and Schrodinger, and their refusal to allow the implications of quantum physics to dissuade them from the idea that the universe is strictly deterministic. It's an interesting post and one that I recommend reading in full. … Continue reading Einstein, Schrodinger, and the reluctance to give up hard determinism

Chaos theory and doubts about determinism

I've mentioned a few times before that I'm not a convinced determinist, at least not of the strict or hard variety. I have three broad reasons for this. The first is that I'm not sure how meaningful it is to say something is deterministic in principle if it has no hope of ever being deterministic … Continue reading Chaos theory and doubts about determinism

Fluid tests and quantum reality

The other day, I mentioned that I had some sympathy for the de Broglie-Bohm interpretation of quantum mechanics, namely an interpretation that there isn't a wave-function collapse as envisioned by the standard Copenhagen interpretation, but a particle that always exists but is guided by a pilot-wave. It turns out that there are some people doing experiments with … Continue reading Fluid tests and quantum reality

Tegmark’s Level III Multiverse: The many worlds interpretation of quantum mechanics

I recently finished reading Max Tegmark’s latest book, ‘Our Mathematical Universe’, about his views on multiverses and the ultimate nature of reality. This is the third in a series of posts on the concepts and views he covers in the book. The previous entries are: Tegmark’s Level I Multiverse: infinite space; Tegmark’s Level II Multiverse: bubble universes; Tegmark … Continue reading Tegmark’s Level III Multiverse: The many worlds interpretation of quantum mechanics

Determinism isn’t as certain as many assume

Conversation on yesterday's post on free will has me thinking about determinism. First, what is determinism? According to Merriam-Webster, my favorite dictionary because they seem to be extremely good at cutting to the chase, determinism is defined as: a theory or doctrine that acts of the will, occurrences in nature, or social or psychological phenomena … Continue reading Determinism isn’t as certain as many assume