The last post on feelings generated some excellent conversations. In a couple of them, it was pointed out that my description of feelings put a lot of weight on the concept of imagination, and that maybe I should expand on that topic a bit.

In their excellent book on animal consciousness, The Ancient Origins of Consciousness, Todd Feinberg and Jon Mallatt identify important behavioral markers of what they call “affect consciousness”, which is consciousness of one’s primal reactions to sensory stimuli (aka feelings). The top two markers were non-reflexive operant learning based on valenced results, and showing indications of value based cost/benefit trade-off decision making.

In thinking about what kind of information processing is necessary to meet these markers, I realized that a crucial component is the ability to engage in action-sensory scenario simulations, to essentially predict probable results based on decisions that might be taken.

In their book, Feinberg and Mallatt had pointed out that the rise of high resolution eyes was pointless without the concurrent rise of mental image maps. Having one without the other would have consumed valuable energy with no benefit, something unlikely to be naturally selected for. Along these same lines, it seemed clear to me that the rise of conscious affects was pointless without this action-scenario simulation capability. Affects, essentially feelings, are pointless from an evolutionary perspective unless they provide some value, and the value they provide seems to be as a crucial input into these action-scenario simulations.

I did a post or two on these simulations before realizing that I was talking about imagination, the ability to form images and scenarios in the mind that are not currently present. We usually use the word “imagination” in an expansive manner, such as trying to imagine what the world might be like in 100 years. But this type of imagination seems like the same capability I use to decide what my next word might be while typing, or what the best route to the movie theater might be, or in the case of a fish, what might happen if it attempts to steal a piece of food from a predator.

Of course, fish imagination is far more limited than mammalian imagination. The aquatic environment often only selects for being able to predict things a few seconds into the future, whereas for a land animal, being able to foresee minutes into the future is a very useful advantage. And for a primate swinging between the trees and navigating the dynamics of a social group, being able to see substantially farther into the future is an even stronger advantage.

But imagination, in this context, is more than just trying to predict the future. Memory can be divided into a number of broad types. One of the most ancient is semantic memory, that is memory of individual facts. But we are often vitally concerned with a far more sophisticated type, narrative memory, memory of a sequence of events.

However, extensive psychological research demonstrates that narrative memory is not a recording that we recall. It’s a re-creation using individual semantic facts, a reconstruction, a simulation, of what might have happened. It’s why human memory is so unreliable, particularly for events long since past.

But if narrative memory is a simulation, then it’s basically the same capability as the one used for simulation of possible futures. In other words, when we remember past events, we’re essentially imagining those past events. Imagination is our ability to mentally time travel both into the past and future.

As I described in the feelings post, the need for the simulations seems to arise when our reflexive reactions aren’t consistent. (I didn’t discuss it in the post, but there’s also a middle ground for habitual reactions, which unlike the more hard coded lower level reflexes, are learned but generally automatic responses, such as what we do when driving to work while daydreaming.)

But each individual simulation itself needs to be judged. How are the simulations judged? I think the result of each one is sent down to the reflexive regions, where it is reacted to. Generally these reactions aren’t as strong as real-time ones, but they are forceful enough for us to make decisions.
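The loop described above (imagine a few candidate actions, let the reflexes react to each imagined outcome, then pick the action with the best reaction) can be caricatured in a few lines of Python. This is only a toy sketch, not a model of real neural circuitry: the outcome names, probabilities, valence numbers, and the `damping` factor (standing in for imagined reactions being weaker than real-time ones) are all made-up assumptions for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical "reflex" table: a fixed gut reaction (valence) to each outcome.
REFLEX_VALENCE: Dict[str, float] = {
    "food": 1.0,
    "nothing": 0.0,
    "predator": -2.0,
}


def simulate(action: str) -> List[Tuple[str, float]]:
    """Toy world model: (predicted outcome, probability) pairs for an action."""
    scenarios = {
        "steal_food": [("food", 0.6), ("predator", 0.4)],
        "wait": [("nothing", 0.9), ("food", 0.1)],
    }
    return scenarios[action]


def judge(action: str, damping: float = 0.5) -> float:
    """Average reflex reaction to the imagined outcomes of an action.

    The damping factor (an assumption) makes imagined reactions weaker
    than a reaction to the real event would be.
    """
    return damping * sum(p * REFLEX_VALENCE[outcome] for outcome, p in simulate(action))


def choose(actions: List[str]) -> str:
    """Pick the action whose imagined outcomes provoke the best reaction."""
    return max(actions, key=judge)


if __name__ == "__main__":
    print(choose(["steal_food", "wait"]))
```

With these invented numbers the imagined risk of the predator outweighs the imagined food, so the sketch chooses to wait. Change the probabilities or valences and the decision changes, which is the sense in which the reflexes remain in charge of the outcome.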

So, as someone pointed out to me in a conversation on another blog, everything above the mid-brain region could be seen as an elaboration of what happens there. The reflexes are in charge. Imagination, which essentially is also reasoning, is an elaboration on instinctual reflexes. David Hume was right when he said:

Reason is, and ought only to be the slave of the passions, and can never pretend to any other office than to serve and obey them.

When Hume said this, he wasn’t arguing that we are hopelessly emotional creatures unable to succeed at reasoning. He was saying that the reason we engage in logical thinking is due to some emotional impetus. Without emotion, reason is simply an empty logic engine without purpose and no care as to what that logic reveals.

Okay, so all this abstract discussion is interesting, but I’m a guy who likes to get to the nuts and bolts. How and where in the brain does this take place? Unfortunately the “how” of it isn’t really understood, except perhaps in the broadest strokes. (AI researchers are attempting to add imagination to their systems, but it’s reportedly a long slog.) So, if this seems lacking in detail, it’s because, as far as I’ve been able to determine in my reading, those details aren’t known yet. (Or at least not widely known.)

What I refer to as “reflexes” tend to happen in the lower brain regions such as the mid-brain, lower brainstem, and surrounding circuitry. Many neurobiologists often refer to these as “survival circuits” to make a distinction between the reflexes on the spinal cord, which cannot be overridden, and the ones in the brain, which can, albeit sometimes only with great effort. (I use the word “reflex” to emphasize that these are not conscious impulses.)

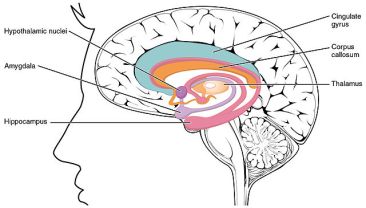

Going higher up, we have a number of structures which link cortical regions to the lower level circuitry. These are sometimes referred to as the limbic system, including the notorious amygdala, which is often identified in the popular press as the originator of fear, but in reality is more of a linkage system linking memories to particular fears. Since there are multiple pathways, when the amygdala is damaged, the number of fears that a person feels is diminished, but not eliminated.

Of note at this level are the basal ganglia, a region involved in habitual movement decisions. This can be viewed as an in-between state between the (relatively) hard coded reflexes and considered actions. Habitual actions are learned, but within the moment are generally automatic, unless overridden.

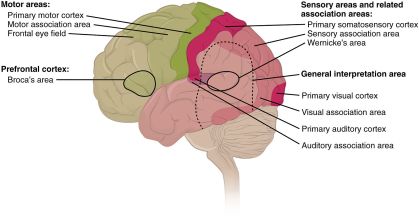

And then we get to the neocortex. (Some of you may notice I’m skipping some other structures. Nothing judgmental, just trying to keep this relatively simple.) Broadly speaking, the back of the cerebrum processes sensory input, and the front handles movement and planning.

The sensory regions, such as the visual cortex, receive signals and form neural firing patterns. As the patterns rise through regional layers, the neurons become progressively more selective in what triggers them. The lowest are triggered by basic visual properties, but the higher ones are only triggered on certain shapes, movements, or other higher level qualities. Eventually we get to layers that are only triggered on faces, or even a particular face, or other very specific concepts. (Google “Jennifer Aniston neuron” for an interesting example.)

Importantly, when the signals trigger associative concepts, the firing of these concepts causes retro-activations back down through the layers. The predictive processing theory of perception holds that what we perceive is actually more about the retro-activation than the initial activation. Put another way, it’s more about what we expect to see than what is coming in, although what is coming in provides an ongoing error correction. (Hold on to the retro-activation concept for a bit. It’s important.)

Imagination is orchestrated in the prefrontal cortex at the very front of the brain. Note that “orchestrated” doesn’t mean that imagination wholly happens there, only that the prefrontal cortex coordinates it. Remember that sensory imagery is processed in the perceiving regions at the back of the brain.

So, if I ask you to imagine a black bear climbing a tree, the image that forms in your mind is coordinated by your prefrontal cortex. But it outsources the actual imagery to the back of the brain. The images of the black bear, the tree, and of the bear using its claws to scale the tree, are formed in your visual cortex regions, through the retro-activation mechanism.

Of course, a retro-activated image isn’t as vivid as actually seeing a bear climbing a tree since you don’t have the incoming sensory signal to error correct against. You’re forming that image based on your semantic memories, your existing associations about trees, bears, and climbing. (If you need help imagining this, and don’t know not to try to escape from a black bear by climbing a tree, check out this article on black bears.)

What the pfc (prefrontal cortex) does have are pointers, essentially the neural addresses of these images and concepts. This is the “working memory” area that is often discussed in the popular press. And the pfc is where the causal linkages between the images are processed. But the hard work of the images themselves remains where they’re processed when we perceive them.

So imagination is something that takes place throughout the neocortex and, well, throughout most of the brain since the simulations are judged by the reflexive survival circuits in the sub-cortical regions. Given how difficult this capability is to engineer, I don’t think we should be too surprised.

So that’s my understanding of imagination and how it ties in with perception and affective feelings. Have a question? Or think I’m hopelessly off base? As always, I’d love to find out what I might be missing.

Thank you. That was fascinating.

Interestingly, the prof (Paul Bloom) in my “Moralities of Everyday Life” online philosophy course used the same Hume quote this week. Given the tenor of his lectures, I doubt that he agrees with it, though.


Thanks!

I once listened to Paul Bloom in an interview and read one of his articles. I recall it being a push-back against claims that we can’t be rational. I actually don’t think that’s what Hume was claiming, but others, such as Jonathan Haidt and Robert Kurzban, do make the case that it’s very difficult.

My own view is that being rational by ourselves is very hard. It’s extremely easy to fall prey to our own biases without realizing it. It’s only by talking with people who will challenge those biases that we really test them. (Which is one of the reasons I blog.) Even then, we’re in danger of biases that exist throughout our culture, or throughout all humanity.


Hi Mike,

Always reading, only commented a couple of times through the years. I share your general outlook on the brain/mind and have some belief that the world is coalescing around some kind of similar story, but I guess we’ll see.

I like your schematization but I’ve struggled with your imagination part of this, and like some of the others, think feelings are too loosely described and conceptualized. Not that I could possibly explicate any better.

The animal imagination part confounds me on the future level. The idea that animals imagine the future can be hedged. I like the idea that the squirrel is not imagining anything of the future when it is running around burying nuts. Similarly, there is talk about birds reburying objects if another bird is watching them. It, again, seems like we do not necessarily need some imaging of the future idea “that bird is going to steal my food when I leave,” but that it can instead be more of a ready made thought process. Perhaps they have images of another animal stealing their food, and maybe that will necessarily happen in the future, say minutes away, but that does not necessarily mean they are imagining the future, in the way that you and I have an understanding that the train will arrive in ten minutes. The squirrel nevertheless affects her future.

That may be an unnecessary distinction, but there may be some truth that animals always imagine events but do not imagine future events. Their imagined events may often be warding off something in the future, but in the end, they lack that recursive understanding that their imagined event is something that is going to happen in the future. Perhaps apes start doing that, I don’t know, but I kind of think not.

Similarly, we re-imagine past events, but we also know they are “in the past.” Other mammals have memories and images from their past, but it seems strange to say they understand that these are memories. The penguin does not return to her mate because she remembers it was her mate last breeding season. Again, you’re in that tricky area where she may have an indelible image of “this is my mate” because of her past, but she does not know that this was her mate in the past.

In the end, I would say that such an idea presents once again the way that human consciousness reaches a higher level than animal consciousness. Although, it is surely a continuum and our early ancestors and our potential humans go through various stages of capacities to imagine the future and understand that they have a past.

Lyndon

P.S. Always enjoy your twitter links. The building of artificial cells is awesome.


Hi Lyndon,

Good hearing from you. Your comments are always welcome!

On animal imagination, I agree that we have to be very careful about anthropomorphizing them. I think all vertebrates do have imagination, but it’s generally far more limited than human imagination.

What is the squirrel doing that it’s actually thinking through, and what is it doing because it just feels good or right to it to do without any forethought? Squirrels don’t have a master plan that leads them to save nuts. They do it because it feels good to do it (for them). Of course, evolutionarily it feels good because it enables them to survive, but the squirrel itself has no comprehension of that. In Dennett’s phrasing, they have competence without comprehension.

Nevertheless, many animal species with similar instinct bound behavior, when tested, do show signs of an imagination. But that imagination is far more limited than what we have, or even than what most primates have. It amounts to being able to plan how to get a tasty treat when presented with a couple of pathways to do it. Imagination requires a prefrontal cortex (at least in mammals). Primates have a far larger pfc than most other mammals, and humans have a far larger pfc than any other primate.

I totally agree that the vast majority of species show no signs of recursive metacognitive capabilities. They have what some philosophers might call first order consciousness, what neurobiologists call primary or sensory consciousness, but not the “high” order consciousness of humans. I vacillate over whether sensory or primary consciousness deserves the label “consciousness”, but what typically brings me down from outright rejecting it is that, while introspection may be absent, a lot of what we introspect is still there, albeit in diminished quantity. But I can understand if someone does reject it. It’s a matter of definition.

Thanks for the feedback on Twitter! I periodically wonder if anyone is watching that feed.


On the imagination of animals:

If you’ve ever house trained a dog, you have to assume they have some sort of imagination of future events. When they are sitting at the door whining, you know their intention, and you know they are exercising control over bodily functions. If you’ve ever left a dog alone in the house too long and returned to find them acting “guilty”, even before you’ve found the “present” they could no longer keep in, you know they are anticipating punishment.

When talking about crows I usually compare them with chickens because I can. If you put out a kind of food which they need to bite off pieces rather than swallowing whole, like a clump of lettuce, (my) chickens will bite down on a piece and then shake their heads a little to try to tear a piece off. This works sometimes, maybe 50% or so. Crows will always stand on the thing and pull off pieces, which works every time. I have seen the chickens accidentally stand on the piece, in which case they have an easy time, but inevitably they move off the piece and go back to the old, less-effective method without learning anything.

But even the chickens seem to show some anticipation. When they are still in the coop and we open the back door of the house (making a distinctive sound) and approach the coop, they crowd around the door and make particular noises. Clearly they anticipate being let out.

I think what sets human imagination apart is the arbitrariness, and so novelty, of things we can imagine. I can see various animals being able to anticipate a bear climbing a tree, but I doubt any could imagine a bear jumping 50 feet to the top of a tree.

As a side comment, imagine a bear trying to climb a smooth steel wall. Take a moment. I would lay odds that if you actually tried to imagine it, at some point you imagined yourself trying to climb a smooth steel wall, imagined yourself trying to find some purchase, imagined your own hand or foot slipping down the wall.

*


I’ve had the same strong intuition about dogs showing guilt, but the science supposedly doesn’t back it. The animal behaviorists who’ve looked at this can’t find any evidence beyond the dogs reacting to their owner’s reactions. We project our own mental states onto them.

I think all vertebrates have imagination to some degree. As I mentioned in the post, for many species that degree is minuscule by our standards, but it appears to be there. So I think chickens definitely have it, albeit to a far lesser degree than crows.

Things get a little more difficult with invertebrates. I recall a study showing that fruit flies engage in trade-off behavior, which indicates some glimmer of imagination. But it’s hard to find it in ant behavior; E. O. Wilson using pheromone trails to have the ants march in a pattern that spells his name doesn’t seem to leave much room for imagination. Cephalopods definitely have it though, and to Jim’s point, they have it with a radically different brain architecture.

I actually think what sets human imagination apart is symbolic thought. It takes symbolic thought to plan days, weeks, months, or years into the future. Or to conceive of vast distances, or very small ones. Or to organize into societies of thousands or millions of people.

On the bear climbing a steel wall, before I got to your next sentence, I actually just pictured a super bear sinking its claws into the steel. I think I missed the “try” though. If I hadn’t, my thoughts might have gone along the lines you described.


I think some of the more complex behavior of crows is pretty darn hard to explain without supposing they imagine the consequences of their actions.


Agree. I spend a lot of time feeding and observing crows in my backyard.

It turns out parrots and corvids have as many neurons as some primates but they are packed more densely and in different structures.

It might be a mistake to become too obsessed with particular brain structures being required for certain capabilities; nature can find more than one solution to a problem.


I’m fascinated by crows. I have them in my garden and they treat me like an equal, or perhaps a rather inferior and irritating neighbour. But it’s hard to tell whether they really regard me with contempt, or if I am projecting human emotions onto them. It raises interesting questions about AIs and how they might be able to simulate complex human social behaviour without actually having theory of mind.


They may have a theory of mind.

https://www.huffingtonpost.com/2013/01/11/crow-intelligence-mind_n_2457181.html


Ditto on the twitter links.


SelfAware,

Your idea that imagination must be paired with affect dovetails nicely with Morsella 2005, except that he talks about phenomenal states. Sorry, the link within the link doesn’t work, but maybe you can ask the author.

Do you distinguish between “affect” and “emotions”? Or other closely related terms? Because emotions can involve a kind of ready-state for the organism. For example, an animal that is afraid will run from a stimulus that would otherwise not impress it. And this ready-to-run state doesn’t require any imagination to work its magic.


Thanks Paul! Appreciate the link. I’ll check it out.

One of the problems with this stuff is that words like “affect” and “emotion” have varying meanings in the literature. I’ve seen “affect” used to refer to what I call the reflex, but I’ve also seen it used to refer to conscious feeling states. William James also used “emotion” to refer to the reflex, but it’s almost always used for those conscious states today. Common usage of “affect” does seem broader than “emotion” though. Affect can refer to things like hunger or pain, which usually are not included in the category of emotions. All of this is why I usually go for “reflex” and “feeling”. Even those are subject to misinterpretation but perhaps less so.

I do think the feeling of the emotion is a complex thing. Similar to what Lisa Feldman Barrett theorized, I think feelings are mental concepts, constructions, predictions in their own right, and heavily influenced by a lot of things the reflexes aren’t necessarily aware of, such as social norms.

On the ready state, I disagree that imagination isn’t involved. It certainly isn’t the leisurely type of imagination we usually associate with that word. I think its primal response to want to run away is the reflex. The fact that it feels this primal response serves a purpose, to provide an input into its decision making, to give it a chance to override that reflex. I do agree though that if it’s already in a fearful state, extra energy is required to do something other than follow its primal responses.


On the ready state: I was thinking of flies. I’m not at all convinced that flies can imagine different future events, or weigh trade-offs. But they definitely have agitated and non-agitated states. In the case of animals that do have imaginations, I agree that the ready state interacts with imagination. Decision making still happens in mammals and birds when they’re scared, with a bias in favor of fleeing. But in flies I suspect you have the behavioral bias without any comparison of imagined scenarios.


I can understand your skepticism on fruit fly imagination. With only 250,000 neurons, it doesn’t seem like they have much to work with. My statement about them having it comes from this study: https://www.nytimes.com/2014/05/23/science/even-fruit-flies-need-a-moment-to-think-it-over.html

Of course, with the size of their brain, any imagination they might have would by necessity be very low resolution and low depth. It might be more appropriate to call it “proto-imagination”.

It’s worth noting that the decisions in the study were made based on odors. That’s interesting because in the most primitive vertebrates, the forebrain seems largely dedicated to processing smell information. Not sure if I buy this, but there are some biologists who think smell may have been the original sense that spurred the development of imaginative capabilities. Smell is unique among the senses in having a direct connection to the forebrain. All the other senses go through the diencephalon (thalamus in humans). It’s hard for us to imagine that given that smell in primates is so atrophied, but for most animals it’s a much more central part of their worldview.


I am in the exact same place as you on these topics. With regard to “AI researchers are attempting to add imagination to their systems, but it’s reportedly a long slog.” I think you may be pessimistic here. All chess playing computers have used a proto-imagination protocol in the form of decision trees. The computer considers potential responses to moves being made by its opponent by considering a suite of possible moves and the consequences of each of those. The computer has to have programmed into it a method of deciding between these “possible future states of the chess board” and then it selects one possible next move and repeats the whole process based upon the response of its opponent. This is not unlike the power of imagination.
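The lookahead described above is commonly implemented as a minimax search over a game tree. Below is a minimal sketch, assuming a toy Nim-style game instead of chess (players alternately take 1 to 3 stones, and whoever takes the last stone wins). The game and the function names `minimax` and `best_move` are hypothetical choices for illustration; real chess engines add depth limits, heuristic evaluation functions, and pruning that this sketch leaves out.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def minimax(stones: int, maximizing: bool) -> int:
    """Return +1 if the maximizing player wins with best play, else -1.

    `maximizing` says whose turn it is in the position being evaluated.
    """
    if stones == 0:
        # The previous mover took the last stone, so the side to move has lost.
        return -1 if maximizing else 1
    # "Imagine" every legal move and the position that would follow it.
    scores = [
        minimax(stones - take, not maximizing)
        for take in (1, 2, 3)
        if take <= stones
    ]
    # Each side is assumed to pick the outcome that is best for itself.
    return max(scores) if maximizing else min(scores)


def best_move(stones: int) -> int:
    """Choose how many stones the maximizing player should take (stones >= 1)."""
    moves = [take for take in (1, 2, 3) if take <= stones]
    return max(moves, key=lambda take: minimax(stones - take, False))


if __name__ == "__main__":
    print(best_move(7))
```

The method of deciding between possible future states that the comment mentions is, in this sketch, just the win or loss value returned at the end of the game. In chess the tree is far too large to search to the end, so that judgment has to be supplied by a hand-written or learned evaluation function.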


Thanks Steve.

I’m actually not pessimistic that they’ll eventually succeed in adding imagination. But the accounts I’ve read indicate that it’s much harder than it looks. The problem with using games is that they provide a false sense of confidence. The simplified rules the games operate under are far less complex than what a bee does to navigate through its world and find food, much less a mammal or bird.


Can I use this beautiful piece of text for my own purposes?

Have you a question? Or maybe you think I’m hopelessly off base? So I’d love to find out what I might be missing.


Hey Stan,

Good hearing from you! Absolutely on using the text. Take it!


I came back, and for a very simple reason.

Because where else can I find such a wonderful mind

and so good a man?

But I have to admit that I doubted a little.

But I must also admit that nowhere else can I find such a wonderful and wise man.

So it is only my fault that I wasn’t able to use this potential, no matter how cruel that sounds.


It’s good to have you back Stan.


I’ll first go through the comments so far and then get into the actual post.

Ann,

We certainly don’t see enough women on these blogs so I do hope to hear more from you! Regarding Hume, yes I suppose I’m far more happy with Mike’s interpretation of the guy than your teacher’s (but then again, I hate it when the ideas of people from the past are used as “expert validation” for what remains uncertain today. So whatever).

Lyndon,

As I see it, all conscious forms of life construct scenarios about the future regarding what might happen in order to make themselves feel more happy in the present. Thus a bird may become worried about another stealing its nuts when it perceives being watched. So yes, I am talking about the revered “theory of mind” idea, or something which some scientists like to place as a sacred trait of the human! I think we need to remember however that anthropocentrism does not just concern attributing human perspectives to non-humans, but also deciding that the human is fundamentally different from (perhaps read “is superior to”) the sorts of life that it evolved from. That’s also a problematic position as I see it.

I presume that you’ve seen a bit of me in the past and I hope we see more of you in the future.

JamesOfSeattle,

Oh man did you nail it on the trained dog that poops in the house scenario! How could the dog act guilty because of the owner’s reactions, before the owner can possibly even react? Who’s really guilty here? Might it be an anthropocentric science which wants to believe that humans are special?

Lisa Feldman Barrett seems to currently be capitalizing on this bias with her theory of constructed emotion, and which Mike did a post on. My own criticism is found here, though as noted it did begin earlier. https://selfawarepatterns.com/2017/08/12/the-layers-of-emotion-creation/comment-page-1/#comment-17460

Paultorek, James Cross, and Steve Morris,

Good stuff on crows! But didn’t you know that this sort of talk happens to be inconvenient…

Steve Ruise,

Yes computation apparently functions like imagination often enough, though I theorize two fundamentally separate forms of computer for each sort of function. For the standard computer existence is personally inconsequential, though the conscious form is motivated by a teleological goal, or to be “happy”. Apparently this simplifies evolution’s programming demands.

s7hummel,

Yea I like Mike too.


Well Mike, it seems that you’re trying pretty hard here to nail down some basic nuts and bolts, or to reverse engineer the human. And yet ultimately you realize that it’s not quite working yet. Perhaps this is like driving somewhere when you don’t have a map to show you the way? Here you can study all sorts of places that your travels take you through the brain, and yet without an effective map you simply may not fathom how this sort of machine functions. Good architecture seems essential to your quest, even if it turns out that this would teach you that your quest happens to be beyond human engineering. But even if so an effective map might still yield some useful things that can indeed be achieved. People obviously suffer today given the failure of our mental and behavioral sciences. Conversely they do not suffer because we’re unable to build conscious machines or to download ourselves to other mediums. (Well maybe Ray Kurzweil suffers, that is until he looks at what his failed predictions have done to his bank account!)

I have architecture for you to consider, and which I’ve developed without knowing a whit of neuroscience. Furthermore you might notice that I seem able to effectively attack opposing architectures, and yet my own models seem to receive little damage from outside attacks. Though you may have a general grasp of how my ideas work, the devil does remain in the details. You’ll prove your mastery of them once you’re able to effectively predict what I would say about a number of associated issues.

I’ll now continue on from our last discussion found here: https://selfawarepatterns.com/2018/10/28/the-construction-of-feelings/comment-page-1/#comment-24610

I agree with you that it would have been far less contentious if F&M would have theorized exteroceptive “perception” rather than “consciousness”. The term they chose, while provocative ($), seems to get us going down an unfortunate “panpsychism” sort of rabbit hole.

A standard pocket calculator conceptually does exactly the same thing that a self driving car does. It accepts input information, processes it algorithmically, and so provides output function. The only true difference here is a matter of quantity. I’m saying that the “image map” of a self driving car will be far more extensive than that of a calculator, though they do each provide such a thing. Even if the calculator uses points on a map, points on a map are what maps are made of.

Regarding higher level wording, providing that sort of thing is indeed the role of the architect. It’s not the architect’s job to decide how to build something. This person’s role is only to decide what needs to be built. I’m not saying that good architecture exists out there in general today, but rather that we shouldn’t inherently blame the architect if the engineer can’t do a given job. We humans may not be able to do what you personally want to be done, and even with good architecture. But I suspect that there’s a great deal that could be done regarding our soft sciences in general with good architecture.

Yes architects do speak in metaphor (as we all do), though engineers that do not grasp an architect’s language will most certainly fail to grasp the nature of a given architect’s vision. So let me suggest here that your phone does not “feel” in the sense that you do, or in the metaphorical sense that I know you understand, and regardless of what’s displayed on its screen.

On whether or not you exist, I define you as a product of experienced value. If you’re perfectly sedated then “you” do not thus exist. (Well your body should, though essentially the way that your phone does, or with no value component during that period.) If you’re being tortured then you will exist very strongly in a negative sense here. That’s the sort of thing I mean by “you” — something that feels positive to negative.

I do distinguish between more and less primitive forms of life, but not more and less primitive varieties of value. I theorize value as a universal element of reality, not that I have effective ways to measure this stuff. If something feels horrible to a lizard however, then theoretically feeling that way would be no less and no more horrible for a human to experience.

Eric,

Sorry for the delay in responding, but I’ve struggled to come up with points that I haven’t already made many times. Usually when I reach this stage I end the conversation, but your post in this new thread deserves a reply. (Although for most of the topics from the last thread, I’ll let my remarks there stand.)

On your architecture, I think I do have a pretty good understanding of it, and I see at least two serious flaws which I don’t think you’ve adequately addressed.

The first is the ontology of the tiny computer. The initial concept is plausible, but the details simply don’t align with the neuroscience I’ve read. I’ve asked you before what computations or information processing the tiny computer actually does. It superficially sounds similar to imagination, but when we get into the details, more and more of the actual computation involved in imaginative scenarios gets consigned to the non-conscious computer, eventually leaving me wondering what the tiny computer actually does. In short, I don’t think it exists in the manner you conceive, or perhaps it needs to be described in another manner.

The second is that the overall level of detail you’re willing to get into is simply not adequate for my purposes. Given your remarks above about architects, I think we simply disagree about the desirability of those details. But if you’re looking for a theory that you can sell to the cognitive sciences or the philosophy of mind, I think that lack of detail will frustrate your ambitions.

This is an area where everyone has their own pet theory. Everyone, including people who’ve never cracked a book on the mind before, thinks they’re an expert on it. Many read about one theory that sounds right to them and champion it against everything else they hear. It stands to reason that most are wrong. Standing out from the pack in any enduring manner requires novelty, detail, and rigor.

Hi Mike,

People get upset with me from time to time, and when I go over what’s happened I generally realize why. Now reading through the situation again, I think I can see things more clearly. Even though my actual perspective is quite the contrary, I now see that I was dismissive of both you and your post. I certainly apologize! But what might I do to help fix my mistake? My wife tells me that when I find myself in a hole, stop digging! But I don’t like being trapped in holes, and so struggle to get out despite her advice.

Let me say that I very much support this post and all of the neuroscientific understandings found within. Good stuff! Perhaps some neuroscience would help me improve my models? Or indeed, where my models are pretty good, perhaps some neuroscience would help me relate this to others? Right now I just can’t say. But one thing that is clear to me, is that I must somehow end my cocky attitude. That’s something which seems to transform friends and potential friends, into adversaries.

Thanks Eric. No apology necessary. One of the reasons I put these posts out is to get feedback, and I know some of it will be spirited. Our interest in this stuff is what brings us together, and often we’re passionate about it. But I do appreciate your clarification on the post.

I enjoy our conversations and very much hope they continue.

Hi Mike,

Once again I think I understand everything you’ve said but fail to see the connection between your use of ‘imagination’ and perception.

It seems to me when you asked us to imagine a bear climbing a tree you are already visualising a bear which you or I can perceive as an image or form. What I would like to know is how we arrived at the perception in the first place. The visual information of a bear gets stored into neuronal maps in the cortex. This is similar to how a computer stores similar information by mapping it into transistors. So at that point it’s only a neuronal map akin to a transistor map or a binary digit code etc. Something has to ‘perceive’ this organised data as a bear but what and where is that something and how is it done?

Your description of imagination certainly doesn’t fit the bill of that ‘something’. My understanding is that you say action-scenario simulations of the future are played out, and that these are associated with ‘affective consciousness’, which guides which action to take versus inhibit?

The action-scenario simulation can be called imagination but how is that different from what a self-driving car is doing for example? Any purposeful or effective action needs some degree of future simulation that is better than random noise.

However, you added the association with ‘affective consciousness’ but again this is a perception itself without an explanation of how it came to be.

If I were to say the decisions a self driving car makes are guided by affective consciousness or that the potential decisions a self-driving car can take are affective states in themselves why would that be wrong in comparison?

Hi Fizan,

“Something has to ‘perceive’ this organised data as a bear but what and where is that something and how is it done?”

This is an interesting question. Answering it gets into the very meaning of experience. Based on the neuroscience I’ve read, I can give an account that I feel somewhat confident resembles the reality, but no one can currently be authoritative on the details.

Consider that the image of the bear exists in your visual cortex and the posterior temporal lobe. It’s in the posterior temporal lobe that the bear shape is recognized. But the more general category of “bear” appears to be resolved in what is sometimes called the posterior association cortex in the middle parietal lobe.

So, let’s say you actually were seeing a bear. The patterns would form in the visual cortex, cascade to the temporal lobe, and eventually to the posterior association cortex. A signal of “bearness” would be sent from there to regions throughout the brain. This may trigger a visceral reaction from the midbrain regions, particularly if the concurrent signals from the hippocampus seem to indicate that maybe we’re a little too close to the bear.

But I think the region you’re looking for here is the prefrontal cortex. The pfc is where the planner lives. It receives the “bearness” signal, as well as the visceral reaction, and a number of other signals.

To be clear, the pfc doesn’t receive the whole image of the bear, just an impression of bearness. It may then request details about the bear from various regions, or maybe it chooses to integrate signals coming in from other regions. The color of the bear is retrieved from the relevant portions of the visual cortex. Maybe the texture of its fur. The size of its claws. The growling noise it’s making comes in from the auditory regions of the temporal lobe.

All of these interactions happen quickly (milliseconds) and in rapid succession. It gives the impression of a Cartesian theater. But the theater is an illusion, created by the pfc retrieving information from various perceptual regions, as well as the reflexive reactions from the midbrain region, often via the amygdala.

You can check this by looking around your current surroundings. If you think carefully about it, you can only think about one thing in your visual field at a time. You just flit from one thing to another so rapidly that the impression of taking in the entire field continuously is present.

There are more aspects to this. Your ability to pay attention to your experience at all seems to happen in another part of the pfc (medial and lateral anterior regions) as well as the anterior cingulate cortex. We couldn’t have this discussion without these regions.

This isn’t to say that a homunculus, a little person, lives in the pfc. Again, the pfc doesn’t have sensory images, nor a sense of self, nor primal reactions. It receives information on these things from other regions and utilizes them to plan for action. What it brings to the table is the ability to orchestrate and coordinate action-sensory scenarios (imagination) as part of that planning.

I could go on for thousands of words, but hopefully this gives a sketch of how I at least see it.

Thanks Mike, that certainly is interesting. Being a psychiatrist I tend to read bits of neuroscience as well (though I’m planning to delve into it more seriously later) and your explanation seems on point.

However, I don’t think you’ve answered my question. I see you are trying to capture the wholeness of the experience of a bear by presenting the multiple parts of it. On the other hand I’m not satisfied by your single part explanation yet. So to make it simple let’s keep it to a single point such as “It’s in the posterior temporal lobe that the bear shape is recognized.” If you start adding in colour and the ‘visceral’ response etc. then we’ll be having 3 discussions of the same problem.

So coming back to the recognition of the bear shape. Do you think this is the same as the ‘perception’ of the bear’s shape?

For example, many software programs are able to recognise shapes as well; do you think they actually perceive the shape when they recognise it?

I do think that’s where the perception of the bear shape is. Of course, this can get into exactly what we mean by “perception”, but if we’re talking about where the bear shape is registered, then the posterior temporal lobe (or thereabouts) is where it is. In truth, I think the whole perception is the overall network of firing patterns that span the sensory and posterior association cortex. But if we talk about the experience of the perception, then that’s more complicated, and we have to expand the network to the frontal lobes.

In the case of software, my laptop logs me in by recognizing my face. (Well, usually, assuming lighting or other conditions don’t throw it.) Does that mean my laptop is “perceiving” my face? I don’t know that we have grounds to say it isn’t. It’s an extremely narrow, shallow, and limited form of perception, but that seems like more a matter of degree than sharp division. I don’t know whether the Windows software uses an ANN (artificial neural network) for facial recognition, but this kind of recognition increasingly depends on them. But I wouldn’t say that my laptop is “experiencing” its perception in anything along the lines of what we typically mean by the word “experience”.

On psychiatry, I’m currently reading John E. Dowling’s ‘Understanding the Brain: From Cells to Behavior to Cognition’, which includes some discussion on the effects various drugs have on synaptic function, including neurotransmitters and neuromodulators. It’s pretty interesting. I finally understand (hazily) what the phrase “SSRI (selective serotonin reuptake inhibitor)” actually means.

Thanks Mike for a very clear response.

From your answer I’m assuming you believe whenever a pattern is being recognised by a system there is a shallow, limited unit of subjective perception happening (and the subject here is that system or is it the pattern+ system whole?)

From this it seems you either have a panpsychist view that qualia is a fundamental property of the universe which every material thing possesses to some extent. Or you believe in Emergentism i.e. that qualia is a property which emerges out of the neuronal firing pattern and that this is a fundamental property of the universe that whenever such a functional organisation is achieved this specific new property (of subjective experience) emerges. I think we’ve discussed panpsychism on my blog before and you don’t agree with it. Do you believe in a form of ‘emergence’ then?

If it is the latter I have a few issues; firstly emergence does not seem to be a scientific explanation. It is a post-hoc imposition onto a known correlation due to the lack of finding a material causation.

Secondly, all other cases of emergence (such as the ‘wetness’ of H2O molecules) fall under subjective experience and are NOT properties which exist in the real world. On the other hand ‘subjective experience’ is something that does exist in the real world. It’s a false analogy.

Lastly, (expanding on your example of your laptop’s face recognition) what if we replace the face recognition with some other recognition system? For example, if we place a photodiode which unlocks the laptop when there is sufficient light, does the photodiode have a shallow subjective perception of a photon?

To me it seems that be it a ‘face recognition system’ or a photodiode or even a rock falling down a mountain slope, they are all doing the same thing, which is to obey physical law. They aren’t actually recognising or perceiving the face or light or the slope. Subjects are what give those processes any meaning.

Fizan, I want to address your issues with emergence. For a good hard scientist’s view of emergence, you might want to read Sean Carroll’s The Big Picture. It’s all about levels. So at a very low level you see only atoms and molecules, whereas at a higher level you can see a chair. The “chair” is emergent. Similarly, you can see a man on a mound of dirt throw a small sphere toward a group of other men. It’s all just atoms and physics, but at an emergent level it’s a baseball pitch. So it is for perception and experience. Under specific circumstances (being worked out by neuroscience) we call a bunch of specific neural activity a subjective perception or experience. Personally, I think of “experience” as perception + the sequelae (further subsequent perceptions) of that perception. In any case, it exists as something that happens in the real world in just the same way that baseball pitches happen.

The key difference between a computer recognizing a face (or a photodiode recognizing a photon) and a rock rolling down a hill is that the mechanism which does the recognizing (computer or diode) was created for the purpose. It’s still just physics, but at a higher level of emergence we can talk about purpose. And the key difference between a computer recognizing a face and a human recognizing a face is the source of the purpose. In the former case the purpose comes from an intent whereas in the latter case it comes from natural selection. The other big difference between the computer and the human is what they do with that recognition. The computer doesn’t (usually) do much at all with it. The computer doesn’t call to active state other times when it recognized that face. It usually doesn’t try to differentiate one face from another. It usually just decides if it is the one face it cares about and does one thing in response. That doesn’t mean a computer couldn’t be programmed to do all the things a human does. It would just take a lot more computing power than most (all?) computers have now.

*

Fizan,

Apparently I was not very clear at all since I somehow managed to give you the exact opposite impression of what I was trying to clarify. I still don’t see panpsychism as productive and I don’t see the qualia of the perception happening in the region you asked about, or in my laptop.

For subjective experience to be present, I think a hierarchy of capabilities has to be in place. To recap, this hierarchy includes:

1. Reflexes: survival circuits adaptive toward genetic preservation (homeostasis, reproduction, etc)

2. Perceptions: image maps, representations, predictive models of the environment, widening the scope of what the reflexes can respond to

3. Attention: prioritization of which perceptions the reflexes are responding to

4. Imagination: action scenario simulations, deciding which of multiple competing reflexes to allow or inhibit

5. Introspective self awareness: metacognition, awareness of our own awareness

I think what we intuitively think of as qualia require at least 1-4. The full human versions may require 1-5. The posterior temporal lobe only has a fragment of 2.

Strictly speaking, my laptop doesn’t even have 1. Oh, it has its own version of reflexes, but they’re generally not adaptive toward its survival, reproduction, etc. The facial recognition feature is a very primitive and somewhat fragmentary version of 2 with glimmers of 3 (since it manages to find my face at various angles). But 4 is absent.

So when I say the perception of the bear shape is in the posterior temporal lobe, I’m only saying that’s where the image map for it is maintained. The experience of that image map requires the prefrontal cortex to access it as part of its action scenario simulations. And the human version may only be there when the anterior prefrontal cortex introspects that access.

(And, of course, we can only talk about it because Broca’s area at the lateral edge of the prefrontal cortex is there to translate this perceptual/imaginative information into language and affect the motor cortices to produce speech, or in this case, typing.)

Hope my clarification actually clarified this time 🙂

James of Seattle, with regards to the examples of ‘emergence’ you give i.e. a chair and a baseball pitch, (as I explained in reply to Mike) these emergent properties of ‘chair’ and ‘baseball pitch’ are only emergent at the level of conscious experience. These properties do not exist otherwise. Let me be clearer: in order for some collection of molecules to be called a chair it has to be perceived by a conscious observer as being a chair.

The whole concept of ‘emergence’ is at the level of conscious experience. To then use this concept to explain conscious experience as being an ‘emergent’ level itself is just a play on words. It’s like saying conscious experience is analogous to conscious experience.

Mike,

“For subjective experience to be present, I think a hierarchy of capabilities has to be in place.”

I’m familiar with your hierarchy. But the problem remains the same for me:

This hierarchy of capabilities produces ‘subjective experience’, so it seems this property has emerged out of this underlying pattern?

I say this because at no point within your hierarchy is the property of ‘subjective experience’ present and it only appears as ‘Qualia’ by steps 1-4 taken as a whole and as ‘human experience’ by steps 1-5 taken as a whole etc.

Or in other words when taken as a whole these patterns of activity produce the experience of consciousness?

– This is exactly what emergentism is (from my understanding most modern neuroscientists do tend to take this view since it was first proposed by Nobel laureate Roger Sperry)

I also have one unrelated issue, why do you think step 1 is necessary to achieve the property of ‘qualia’ or why won’t it happen without it?

Fizan,

On emergence, I think you make an excellent point that it only applies to human consciousness. Emergence is when it becomes productive for us to switch models. We do this solely due to the limitations of human intelligence. At some point, it becomes productive to stop thinking about molecular kinetics and start thinking about thermodynamics, or fluid dynamics instead of molecular chemistry.

The thing about emergence is, we’re stuck with it. If we completely banish it from our epistemology, then nothing exists except spacetime and quantum fields, along with their excitations and interactions (and even they may someday turn out to be patterns of lower level phenomena). But this perspective isn’t productive for understanding most things. (I say this as someone who uses “Self Aware Patterns” as a moniker.)

In computer science, there is another term for this: abstraction levels. When you’re using whatever device you use to comment on this post, it’s not productive to work with it in terms of transistor voltage states and capacitors, or at the higher level of bits, bytes, and instruction sets, or even at the higher level of files, TCP/IP sockets, and processes. You work with it at the user interface level of the application layer. As a product of human engineering, we understand all the layers thoroughly, including how one emerges from the other. But we still find it necessary and productive to switch models as we climb up those layers.

Where I’d agree that emergence as a concept is unsatisfactory is when it’s all we have to explain something. It’s why I don’t reach for that concept when I explore consciousness. It’s simply not answering a question. Of course consciousness is going to be emergent from lower level phenomena, just like everything else (unless you go in for substance dualism). But the question is how it’s emergent. Simply invoking emergence is not an explanation. I personally think we can do better.

On qualia, if I could answer authoritatively on where it is in the brain, I’d be keeping my shelf clear for my eventual Nobel prize. All I can do is speculate. But before speculating, let’s start with what we actually know.

First, we can discuss qualia. (If we can’t, then this is a very strange conversation.) That means that the motor cortices of our brain can manipulate our larynx and tongues, or our fingers, to make statements about it. These motor cortices know what to do because of Broca’s area, a brain region at the edge of the prefrontal cortex involved in speech production (vocal or written). Broca’s area does its thing based on information it gets from the more medial regions of the prefrontal cortex.

The pfc (prefrontal cortex) can formulate what needs to be communicated because it has access to the image maps and associations in the parietal, temporal, and occipital lobes. We can express how we feel because the pfc receives signals from the amygdala, hypothalamus, and other regions, which in turn receive signals from circuitry arising from the midbrain region.

Okay, that’s the neuroscience. You can double check it in any hardcore book on the brain. (I can make suggestions if you’re interested.)

Here’s my speculation. I think qualia are information, communicated information. Not communication in the manner we normally think of it, composed of language or symbols. Qualia evolved long before any of that existed (at least in minds). It’s raw primal communication. Communication from the back parts of the brain about associations or image maps, communications from the amygdala, hypothalamus, and midbrain regions on reflexive reactions. Qualia are the raw stuff of that communication, communication from various regions of the brain to the pfc.

This isn’t to say that the pfc itself is conscious. Without the other regions, it isn’t anything. It’s merely a conductor of an entire orchestra that produces the symphony. It’s a nexus, but a nexus can’t exist if it isn’t a nexus of something.

This gets into your final question about why step 1 is necessary. The most common question I get from people troubled by the hard problem is, “Why does it feel like anything to perceive red?” The information of redness can’t feel like anything if it doesn’t generate some primal response, a response which gets communicated to the pfc and interpreted as a feeling. No reflexive survival circuits, no feelings.

Okay, I think I’ve blathered enough (for now). Let me know if you see anything I’m missing.

Mike, I’m wondering if you have thoughts on what might make a “red” qualia physically different from a “blue” qualia. If qualia is emergent, what’s the next level down? What does it emerge from? And what would the “primal response” to “red” look like?

Also, did you see my recent comment on the Conscious Entities blog re:qualia? It didn’t get any response so I’m wondering if it made sense.

*

James,

The quality of red starts at the retina, where there are photoreceptor cone cells that are red sensitive, along with others that are green or blue sensitive. So there are axons going up the optic nerve that only fire when a bit of red light strikes a certain spot on the retina. This distinction is obviously preserved all the way through the thalamus and occipital lobe, and gets communicated to the frontal lobes since we often make decisions based on the color of things we see.

On a primal response, consider the vividness of red. We perceive this vividness, not because we choose to or learn to, but because it’s inherently vivid to us. This vividness is a primal reaction. Why is it vivid? The theory is that it is adaptive for primates because it makes us more likely to recognize ripe fruit, which tends to have a red / yellow coloring. I’m not sure which structure in the brain fires when there is something red in front of us, but obviously something does. In modern society, we’ve hijacked this original adaptive response to call attention to traffic lights (among other things).

I have to admit that I was confused the first time I read your CE post, but on re-reading it, I think I see the point you’re making. Percepts often come down to which incoming axons are “lit up”. A dog neuron may fire if it is excited by a wolf-dog shape synapse, by another synapse indicating barking, etc. If enough of the attributes come in, the overall dog neuron will fire. But maybe it doesn’t get enough for it to fire, but another neuron nearby, the wolf neuron, does end up firing. Ultimately, in the nervous system, conceptual distinctions come down to which incoming synapse is firing and whether any incoming inhibitory synapses are negating their signal. It’s not the individual synapses/axons which are significant, but the vast neural patterns that converge on their firing.
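
To make the dog/wolf neuron idea concrete, here is a toy sketch. It is only an illustration under my own assumptions, not a model of real neurons: the function name `neuron_fires`, the "howling" input, the weights, and the threshold are all made up for the example. Positive weights stand in for excitatory synapses and negative weights for inhibitory ones.

```python
def neuron_fires(active_inputs, weights, threshold):
    """Return True when the summed weights of the active inputs reach the threshold.

    Positive weights stand in for excitatory synapses, negative for inhibitory ones.
    """
    total = sum(weights[name] for name in active_inputs if name in weights)
    return total >= threshold


# Hypothetical weights, chosen only for illustration.
DOG_WEIGHTS = {"wolf_dog_shape": 1.0, "barking": 1.0, "howling": -1.0}
WOLF_WEIGHTS = {"wolf_dog_shape": 1.0, "howling": 1.0, "barking": -1.0}
THRESHOLD = 2.0

# The same shape arrives, but the accompanying sound is a howl.
seen = {"wolf_dog_shape", "howling"}
dog = neuron_fires(seen, DOG_WEIGHTS, THRESHOLD)    # shape excites, howling inhibits
wolf = neuron_fires(seen, WOLF_WEIGHTS, THRESHOLD)  # both inputs excite
print(dog, wolf)
```

With these made-up numbers the shared shape input alone is not enough for either neuron; it is the second input, excitatory for one and inhibitory for the other, that decides which of them fires.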

Fizan, what emerges are patterns. I agree that only conscious minds can recognize and label these patterns, but that doesn’t mean the patterns don’t exist without minds to recognize them. “Star” is a shorthand label for a complex pattern of physical activity which emerges. Likewise “vortex” and other naturally occurring homeostatic activities. “Life” is another such label for a certain (emergent) pattern of activities. “Consciousness” is just yet another such label. It’s just a curious happenstance that consciousness is needed to recognize the pattern called consciousness, “recognizing” being one of the activities in the greater pattern called “consciousness”.

*

I don’t think it’s as easy as saying that’s just a happenstance. Without getting into semantics, subjectivity is what consciousness is and it is exactly this ability to perceive objects (whether inside or outside objects).

The pattern does not emerge (as a “star” for example) at the quantum level. Neither does it emerge at any number of higher or lower dimensional levels. So it’s not that the data does not exist; it’s that the data does not seem to ‘emerge’ at all without subjective perception.

Fizan, I’m trying to get a handle on what you mean. I translated your last statement as consciousness = subjectivity, but I don’t know what that means. I agree that subjectivity is an important part of the explanation of consciousness.

As I’ve said before here and elsewhere, “Consciousness” refers to certain kinds of processes, namely, recognizing-type processes. Do you agree with this? If so, the question becomes, what is physically going on in a “recognizing” process? If you do not agree that “consciousness” refers to certain processes, then why not?

*

James,

““Consciousness” refers to certain kinds of processes, namely, recognizing-type processes”

If that is the case then anything which can recognise anything should also be conscious.

I’ll give my perspective with some questions:

Does a radar system recognise an airplane?

Or does a stone recognise a slope as it falls?

Or a face recognition camera on your phone recognise your face?

From my perspective in any of these cases it isn’t for example the stone recognising the slope nor is it even a camera which may ‘recognise’ that a stone is falling. The recognition happens only when I or any other conscious entity recognises/ observes / sees this happening.

In order for these words (recognise/ observe/ see etc.) to be used there has to be a subject.

The subject has an inner world of experience and that is what consciousness is.

So in one sense I agree that recognition is what consciousness does but I don’t agree that other ‘recognition-type processes’ in which the ‘recognition’ does not come from the system or process itself but by virtue of a conscious observer (doing the recognition) are equivalent.

I can’t speak to your analysis of brain architecture and neural correlates — not a topic I follow much — but it sounds like we’re “imagining” similar things with regard to imagination.

I’ve been pondering it in the context of free will; is our ability to imagine future paths we might take the seat of our (apparent) free will? Further, is the brain/mind system extremely “noisy” such that mere thoughts can steer it and hence affect the physical world?

I did imagine a bear trying to climb the wall, but I also did resonate with the idea. I recognized the difficulty the bear would have by recognizing it would be hard for me to climb.

The exercise of imagination I’ve used is this: “Imagine a unicorn.”

Which just about anyone can do.

Which is interesting since they don’t exist!

I think this is substantially different than anything we find in the animal kingdom. Our highly expressive art and literature are products of the heights of our imagination.

So are our bridges and spaceships. Far beyond any animals we imagine how our tools can be improved, and we even imagine new tools to meet new needs.

Whatever it is, imagination is pretty impressive!

“The aquatic environment often only selects for being able to predict things a few seconds into the future,”

A minor quibble: I think one could argue that long-term planning is an advantage in any environment. It’s possible whales or dolphins, or especially octopuses, imagine into the future as much as any land or air animal.

With regard to dogs, I’ve read the same science studies claiming a lot less is going on, but I’ve been around dogs all my life, and, boy, it sure seems there is more going on.

Case in point, my dog Sam. Our protocol in those puppyhood days was: I’d come home from work, park in the driveway, open the front door, and she’d come out to do her business and we’d play a little fetch. Then we’d go back, and she’d get her traditional treat, which she loved.

Keep in mind, I haven’t even entered the house at this point.

Some days, after the play, she’d suddenly be reluctant to go back in for her treat. She’d get that “hangdog” look. And it always meant she’d left a present for me.

I honestly don’t see any way I could have communicated anything to her, because I didn’t know anything. Not even a smell. And her reaction always came when it was time to go back in.

So,… [shrug]

Hello Wyrd,

To me this whole “dog” thing is simply ridiculous. Beyond your, and my, and James’ provided anecdotal evidence, millions more can be found, along with the testimony of virtually all professionals who actually work with non-humans every day. If people who are paid to work with animals are unable to understand how they function, then how are they able to effectively do their jobs? Conversely scientists make their money in separate ways, do some random animal testing, and then from this claim “You non-scientists are actually just being anthropocentric. Our tests indicate that only humans feel anything more complex than base affects”.

So perhaps a good scientific investigation right now would be to determine why it is that animal behavior scientists have gained this particular bias? What motivated reasoning leads them to skew their experiments in order to effectively claim that only the human has evolved to feel things like guilt and jealousy? Clearly there must be something going on. And actually as things stand today, I doubt that many of them would be all that surprised to learn of such findings. Unfortunately this would be more of the same.


Eric,

This seems to be a matter of passion for you. I agree, as someone who’s owned several dogs, that they certainly do seem to show guilt.

However, I think we have to remember something I pointed out in the post. We don’t remember the past by retrieving a recording of it. We imagine it based on our current beliefs. I’m open to the possibility that my memories of my dog appearing guilty are tainted by my knowledge of what followed in the seconds or minutes afterward. Of course, I haven’t owned a dog for a few years, so this might be easier for me than for a current dog owner.

To be scientific about this, I think we’d have to record any impression of guilt we saw in our dogs before seeing the thing we later perceive them to be guilty of. That means stopping when you notice the behavior and immediately recording it on your phone or a notepad, and tracking the occurrences and seeing if they correlate to actual “wrongdoing” (peeing on the carpet, leaving pooch bombs, tearing up the furniture, etc).

I think when scientists call dog guilt into question, it’s because they attempted to do that kind of data capture and didn’t get evidence. That said, I haven’t researched this in any great depth, so I could be wrong on the exact science.


Yes Mike, I’d say that each of us is passionate about this stuff. But as you say, extraordinary claims need to be backed up by extraordinary evidence. To me the idea that only the recently evolved human has a rich emotional life, not countless creatures that came earlier, seems bizarre. But my suspicions aren’t science. And yes Wyrd and James of Seattle might have misremembered the behavior of their dogs on those occasions before they found where their dogs couldn’t quite hold it in. And the time that my parents’ sweet lap dog chewed up my nephew’s doll in their bedroom, supposedly for them to find as an indicator of her disapproval of this young competitor, might instead have been a standard coincidence rather than feelings of jealousy. Furthermore professionals might be fooled by such creatures all the time just as other non-scientists are fooled. And I certainly grant you that pet people (which is not me!), can be expected to have associated biases. But what I haven’t yet noticed is any extraordinary evidence from which to overturn these sorts of anecdotes.

I’ll also admit that I do happen to be an interested party here. From my own models it makes sense that sentient life would tend to evolve to feel all sorts of things in order to subtly motivate all sorts of associated behaviors. I consider language to have evolved as an amazing second variety of thought in the human, but not as a means from which to actually feel new things (contra Lisa Feldman Barrett’s theory of constructed emotions as I understand it). As motivation from which to drive the conscious form of function, feelings should occur bottom up rather than top down.

You know that I was not at all impressed by the Alexandra Horowitz study that Barrett cited to support her position, which you left me here https://selfawarepatterns.com/2017/08/05/politics-is-about-self-interest/#comment-17446 . Apparently that six page pdf is no longer working, but googling “dog guilt Alexandra Horowitz” brings up all sorts of articles about her study. Apparently she’s become quite popular. This perplexes me however since clearly wrongly implicated dogs might look to us humans just as guilty as guilty dogs look. Didn’t this occur to anyone else? Where are the studies which take this concern out of the mix? Yes that could be as simple as video of generally obedient dogs that know punishment is coming for what they’ve done, though before the owner knows.

Of course it’s not your job to justify the premise of Barrett’s book to me. But until I come across credible evidence that non-humans aren’t motivated by all sorts of feelings beyond basic affects, I’ll remain skeptical of this position.


It is quite possible dogs can experience some form of cognitive dissonance in situations that interact with their experience in negative ways. But until we can actually talk to them about what they’re feeling, calling it “guilt” may be a mistake, because “guilt” is a complex human emotion based on our strong understandings of moral behavior.

A human can typically express why they’re feeling guilt, but dogs don’t have the symbol-handling for that. If they feel anything akin to guilt, it’s something much simpler than any human might feel.

This may be more a terminology thing — how we define guilt.


Thanks Eric. I had totally forgotten about that study, or Barrett’s summation, which makes it sound like they followed a protocol similar to what I laid out above.

“This perplexes me however since clearly wrongly implicated dogs might look to us humans just as guilty as guilty dogs look. Didn’t this occur to anyone else?”

I’m not sure if I understand your concern here. I think the point of the study was to show that wrongly implicated dogs behave just like rightly implicated ones in that they’re actually responding to the human’s reaction. Although I do agree it would be interesting to video the dogs (guilty or not) before the owner shows up.

I don’t know if you remember, but I had my own issues with Barrett’s ideas, although mine were more definitional. I’m willing to use the word “emotion” for what dogs have where she stipulates that they only have “affects”. To me, the dog’s emotions are simpler than a human’s, which I think most people would agree with, but I would still call them “emotions”.


Wyrd,

I think we’re on the same page with that. I consider “true guilt” as what a person should most purely feel if he or she isn’t actually held accountable by others. For example prisoners are sometimes derisively told “You only feel ‘guilty’ because you were caught!” But this other guilt commonly concerns hurting someone, seeing the associated damage, and then feeling regret given the human capacity for care. This can feel horrible. And might dogs ever feel something like that? Well I’m pretty sure that this social species evolved to sometimes feel strong bonds of care for others. Furthermore I suspect that they can ponder what they’ve done over time and so have the potential for such guilt in some capacity. I’m not going to put that beyond them without evidence.

The mentioned study didn’t come anywhere near testing for something like this however. No one was being hurt for the dogs to potentially be concerned about. And it wasn’t even like pooping in the house, which is never okay. Instead this was a master’s arbitrary decision of whether or not to allow a standard treat to be eaten on a given occasion. So all they were actually “testing” for here were different reactions between dogs implicated of not following directions, whether or not they did follow them. No real crime here. And as I’ll get into below, apparently they found exactly what they were looking to find.

Mike,

I did take another look at that study so thanks for sending it over. On my first reading I guess that I decided that to exonerate the dog guilt scenario they were thinking that the innocent dogs would stand tall rather than become submissive. Okay, so instead their point was that dogs would look guilty with any scolding regardless of their behavior and so this must be about human cues exclusively. Fine. But doesn’t this merely suggest that dogs tend to behave submissively when scolded by their masters? That doesn’t seem like a very interesting conclusion to me.

On this reading I did notice a disclaimer stating that dogs might actually feel guilt, though this study merely suggests that signs of guilt may often not be accurate, which I appreciate. Of course Barrett goes much further with her theory.

And do dogs feel anything regarding what they’ve done in the past? Well I expect that they sometimes worry about such consequences, just as we do. I found it interesting that the innocent dogs looked somewhat more guilty than the guilty dogs did. I suspect this was because such injustice tended to hurt them somewhat more than in the “deserving” case. Complex stuff!

I’ll again mention the biased perspective expressed in this study. If supported I’d think that charges of anthropocentrism would be a potential conclusion to make. Instead these charges were made commonly.

Thanks for restating your view that many animals probably harbor emotions of some sort. Yep, bottom up makes sense to me. Just because we can’t find common neural signatures for things that we feel, this doesn’t mean that the complex things we feel are “constructed”. It could be that we’re simply not yet very good brain explorers.


Eric,

I do think “constructed” could be the right word for what happens. That construction may be prompted by the firing of lower level survival circuits, such as when we feel a spider crawling up our arm, see a large vehicle barreling toward us, or some other physically dangerous scenario.

But it could also be prompted “top down”. I think we once discussed an example of this second kind of activation, such as someone learning that the portfolio of their retirement account has tanked. This isn’t something the lower level systems innately know anything about. Instead, the understanding forms in the cortex, triggers activity in the amygdala, which in turn triggers the lower level circuits, which then shoot back up giving the bad news a kick. In other words, a purely social event gets translated into a visceral one.

Does this top down thing happen in dogs? Obviously they’re not concerned about investment funds (that takes symbolic thought). But if they lose status in a pack, I could see a very similar loop taking place. In fact, something like that probably happens when their owner scolds them.


Mike,

I don’t mind using “constructed” and “top down” as you’ve used them here. And I’m pleased that you’re granting this sort of thing to certain non-humans. Apparently “constructed” for Barrett means something far more exclusive however, and this is what I’m not so sure about.

You might recall me mentioning that I theorize two separate dynamics from which to drive conscious function, or hope and worry. An investor invests because it feels good presently to invest (hope), and/or because it feels bad to not invest (worry). Thus the destruction of an investment should naturally feel bad on the basis of this. But even though human stock investments require human language and culture to grasp, I don’t consider associated feelings to be all that unique.

Consider a chipmunk. In a forest full of poor nut trees, perhaps it sees one that it suspects would be extremely fruitful. The motivation I theorize for it to consciously check this out is its present feeling of hope about this prospect. Furthermore it may worry about not checking it out if it has any concerns about not finding enough nuts. So this venture too could be an investment. If chipmunks consciously realize that they save nuts in order to be eaten later, and so feel good about saving nuts, then it seems to me that they’re quite like us in this regard. Here they should naturally feel bad when their investments get wiped out. We could define these feelings as “higher order” if we like, but regardless they seem relatively standard to me. What good would consciousness be if animals only prepared for the future in non-conscious ways?

I rewatched Barrett’s Brain Science episode (135 provided below) to get a renewed sense of whether I’ve been unfair to her position. For 50 minutes I was in agreement, and often noted how well my own dual computers model aligns with things like a void of hardwired emotion circuits, how the brain functions contextually, and so on. But apparently Campbell noticed how much time had gone by and so around there mentioned “babies” to spice things up.

Here Barrett let it be known that even human babies do not feel emotions, and because they do not have languages from which to teach them to have such feelings. WOW! So from this position only the human harbors a rich emotional life specifically because only the human has evolved language. And surely people with highly reduced capacities for language would then feel little if any emotion as well?

I don’t mean to be so negative about competing theorists, but it’s hard for me to swallow how these sorts of ideas get as far as they do. Given this sort of thing how might someone who actually does have good ideas (just hypothetically, and regardless of whether or not my own ideas happen to be any good at all), be able to illustrate this to the world when crap ideas can get so far?

http://hwcdn.libsyn.com/p/6/7/b/67bc06238541fb18/135-bsp-barrett.mp3?c_id=16217102&cs_id=16221542&expiration=1543992826&hwt=b4443b5de96678fdb6940db4c692a436


Eric,

I’m actually skeptical that chipmunks store nuts as a conscious strategy. It seems like something they do compulsively because it just feels good to do it. Of course, the reason it feels good is because it enhances their survival chances, but I can’t see any evidence that the chipmunk has thought that out. If they were capable of that kind of reasoning, it seems like at least some of them might choose different strategies.