Anyone who follows the computing industry knows that Moore's Law, the observation that the number of transistors on a chip, and with it computing power, doubles roughly every two years, has been sputtering in recent years. This isn't unexpected. Gordon Moore himself predicted that eventually the laws of physics would become a constraint. One of the technological hopes for a revival is quantum computing. Quantum … Continue reading The promise of quantum computing?

Tag: Computers

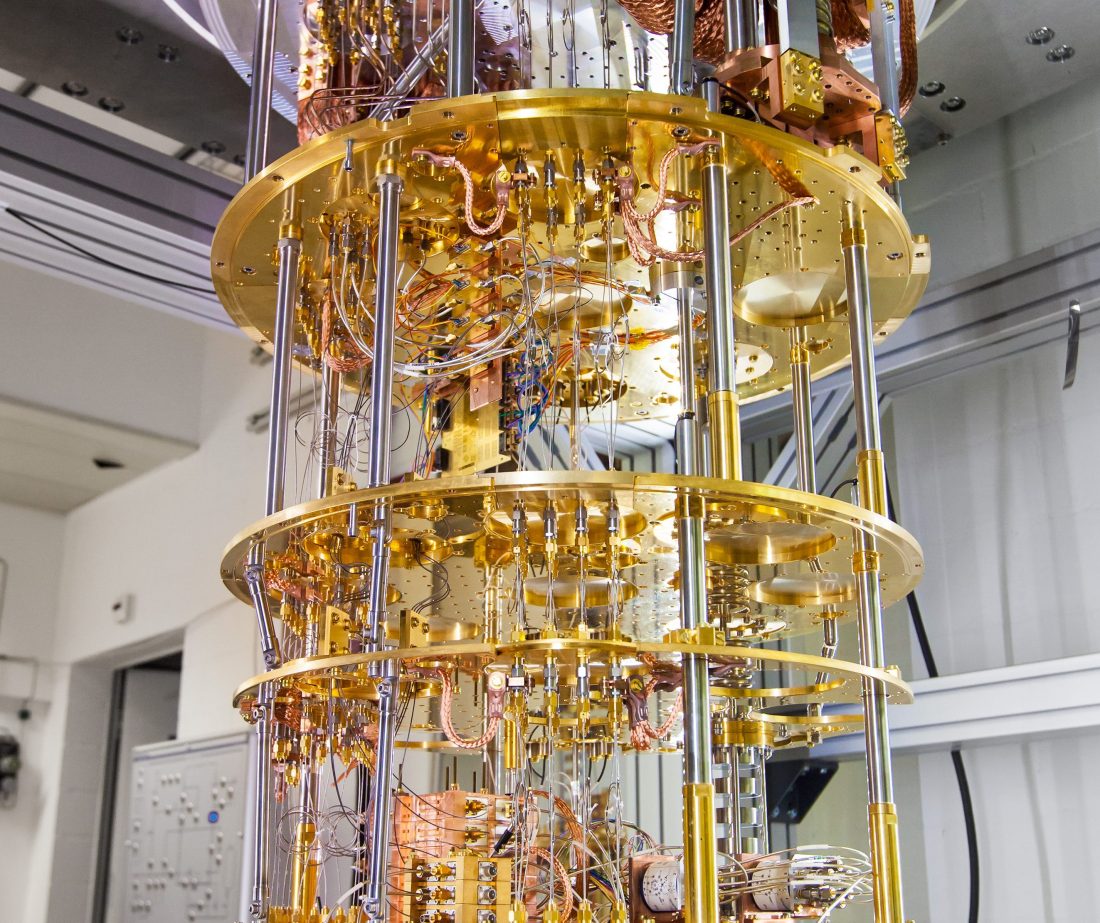

Quantum computing will not rescue Moore’s Law

I found this video on quantum computing educational. It confirmed some things that I've been pondering about quantum computing for a while, notably its limitations, which are discussed after about the five-minute mark. http://www.youtube.com/watch?v=g_IaVepNDT4 The strength of quantum computing is that it makes use of superpositions, the fact that quantum particles can be in multiple … Continue reading Quantum computing will not rescue Moore’s Law

Cosmic rays becoming an increasing problem for microchips. Threat to Moore’s Law?

When I first saw the title of this article, I thought it might be an alarmist piece about the risk to passengers from higher radiation doses while in the air, but it's actually about a broader and more serious problem: The $8.5M Race to Protect Planes From Cosmic Rays. It’s an invisible, but looming threat … Continue reading Cosmic rays becoming an increasing problem for microchips. Threat to Moore’s Law?