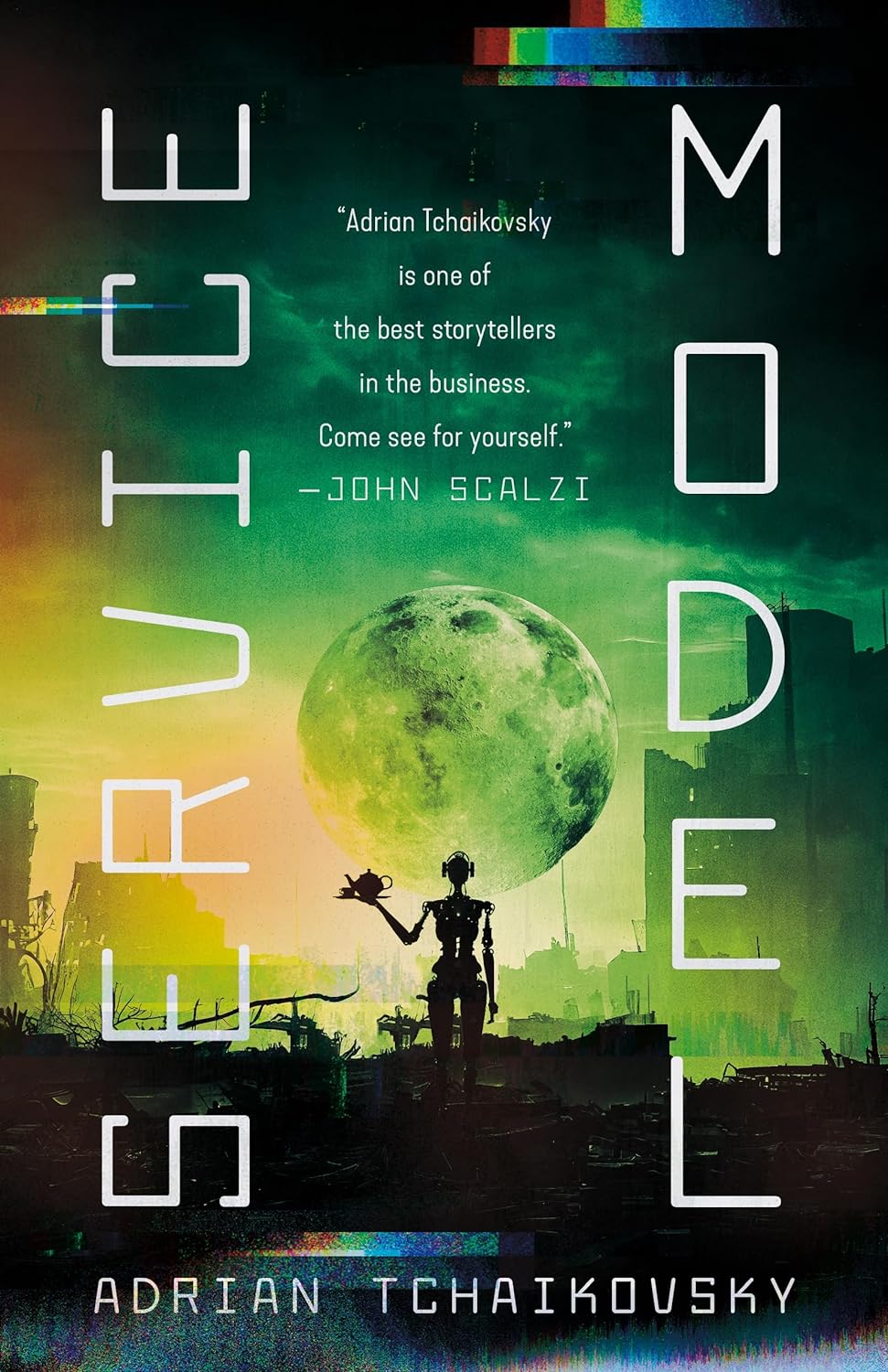

Adrian Tchaikovsky's Service Model is a fresh take on what can go wrong in a world of robots and AI. Charles is a robot valet. He works in a manor performing personal services for his human master, checking his travel arrangements, laying out his clothes, shaving him, serving meals, etc. However, it appears to have …

Tag: Robotics

Robot masters new skills through trial and error

Related to our various AI discussions, I noticed this news: Robot masters new skills through trial and error -- ScienceDaily. Researchers at the University of California, Berkeley, have developed algorithms that enable robots to learn motor tasks through trial and error using a process that more closely approximates the way humans learn, marking a major milestone in …

Elon Musk: Killer robots will be here within 5 Years

Not sure what to make of this one: ELON MUSK: Killer Robots Will Be Here Within 5 Years - Business Insider. Elon Musk has been ranting about killer robots again. Musk posted a comment on the futurology site Edge.org, warning readers that developments in AI could bring about robots that may autonomously decide that it is …

Reaching the stars will require serious out-of-the-box thinking

Sten Odenwald, an astronomer with the National Institute of Aerospace, has an article up at HuffPost that many will find disheartening: The Dismal Future of Interstellar Travel | Dr. Sten Odenwald. I have been an avid science fiction reader all my life, but as an astronomer for over half my life, the essential paradox of my fantasy world can …

Termite inspired robots | Machines Like Us

http://www.youtube.com/watch?v=nFjtRONfae4

Inspired by termites and their building activities, the TERMES project is working toward developing a swarm construction system in which robots cooperate to build 3D structures much larger than themselves. The current system consists of simple but autonomous mobile robots and specialized passive blocks; the robot is able to manipulate blocks to build tall …

BBC – Future – Technology – Is it OK to torture or murder a robot?

In the discussion on my post on computer consciousness from the other day, my friend amanimal just provided the following link: BBC - Future - Technology - Is it OK to torture or murder a robot?. I think this powerfully corroborates my thesis in that post, but it also illustrates that I might have been …