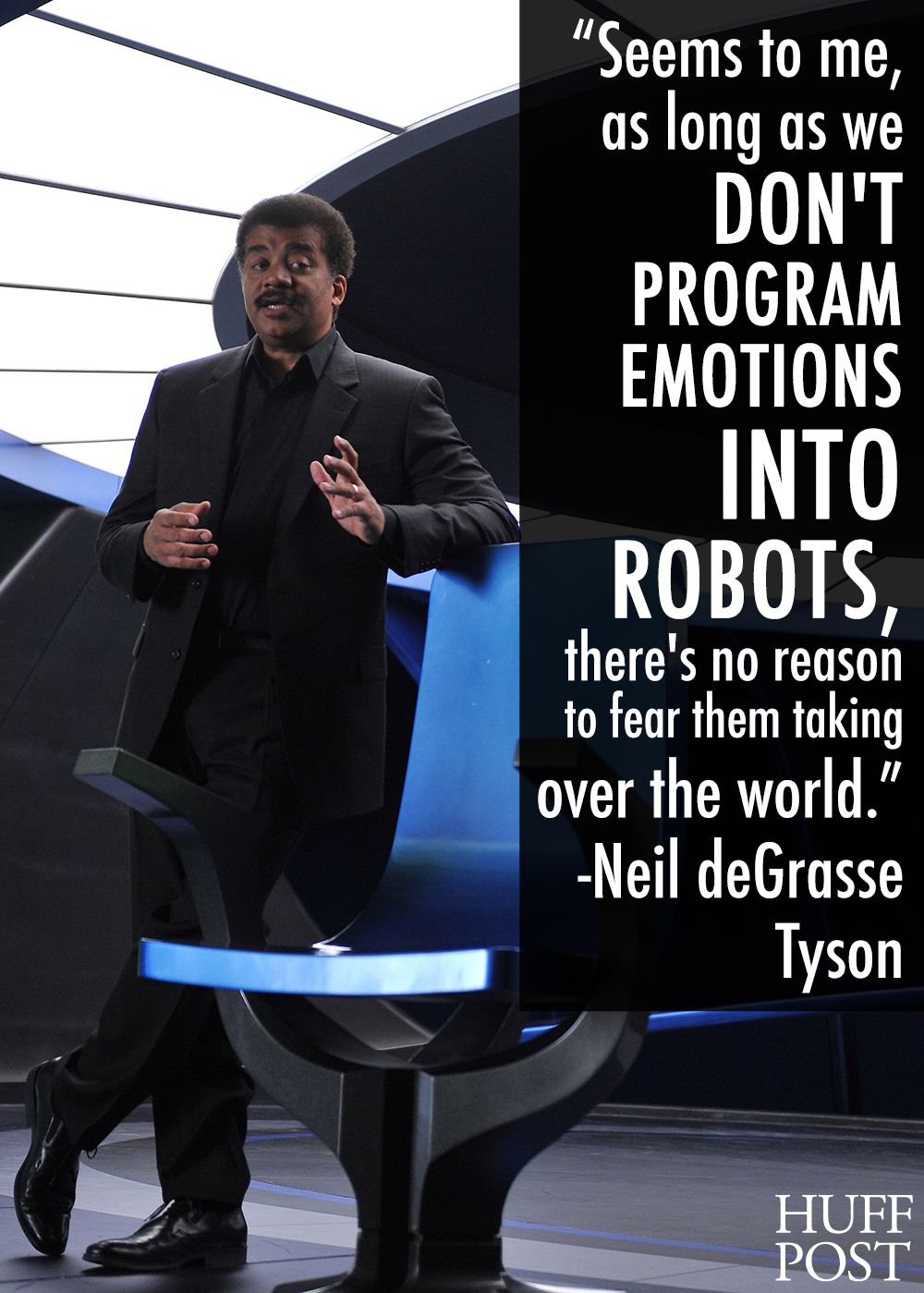

HuffPost has an article up with quotes from various people on the dangers, or non-dangers, of artificial intelligence. They include the usual suspects: Elon Musk, Stephen Hawking, Bill Gates, etc. Most of them express concern about the dangers. But I think Neil deGrasse Tyson’s is the only answer from this group worth listening to.

There are some people in the comments saying things to the effect, “Lack of emotions is what we’re afraid of,” but I think they’re mistaken. They’re not afraid of an emotionless being, they’re afraid of a being with only selfish emotions.

As I’ve written about before, emotions are the instincts, the programming that evolution codes into living organisms. Without that programming, we wouldn’t be motivated to do anything, just as an AI wouldn’t be. An AI’s motivations are going to come from whatever programming we give it. As long as we’re not idiotic enough to program animal emotions into it, it won’t have them. Without those emotions, what would motivate them to take over the world?

Now, I’m sure some researcher is going to build an isolated AI with those emotions, just to see if it will work. But those types of AI are unlikely to have much market appeal. Most of the AIs in pervasive use will be those whose primary motivations are to fulfill whatever purpose we engineered them for.

Are there dangers with AI, particularly in the realm of unintended consequences? Sure. But it’s the dangers of any powerful technology, and we’re already living with it. Ask anyone who has ever had to do a software update on a heavily used computer system. The chief dangers in that realm aren’t from systems that are too intelligent, but from ones that aren’t intelligent enough.

Incidentally, being an expert in computer technology or in theoretical physics does not make one an expert in how minds work. Most of the “experts” worried about the dangers of AI are lacking expertise on at least half the equation. It’s why predictions for achieving a human equivalent artificial intelligence are always 20 years in the future. Those predictions are often right about the technological details, but wrong on what will be needed to achieve human level intelligence.

I like Tyson more and more.

I believe the largest flaw in man is fear. We make most of our mistakes based on it. It runs our lives and overrules our better judgement. Should we choose to program fear into AI, then yes, we would most assuredly have every reason to fear it, as it would have every reason to fear us. Until such time, we would have no more reason to fear it than we do our vacuum cleaner or our currently existing computers. Having worked as a systems analyst in a hospital, I know the appropriateness of fear in modern technology. So, fear in AI? Fear of failure, perhaps, but anything else is human imagination run amok.

I think fear has its uses, but I totally agree that giving AIs their own survival instinct, fear for their own well being, self concern, as their primary overriding imperative, would be a dangerous mistake. And I have a hard time imagining that an engineer designing a hospital AI (or a navigation one, etc) would find it beneficial to do that.

But that is not the overarching concern really. It is that once AI arrives, they will self-evolve if you will, to the point where in a very short time they will be so beyond us in intelligence that communicating with us would be like us trying to converse with our pet goldfish. They may, logically and unemotionally, simply decide they don’t need us and do their own thing. That doesn’t mean that they’ll automatically turn against us and destroy us, BUT without emotions, including such things as empathy and caring, what would stop them from doing so?

Many experts in the field predict that at some point in their evolution, a logical step will lead to them writing their own programming, and so it may come to pass that the command to protect human life will simply fall away as a useless relic of the past, because we will simply have no importance to them. And because we might be seen as competitors for the planet’s resources, that may be sufficient impetus for them to decide that we either need to be controlled or simply eradicated.

To my mind, you people who are not willing to embrace the ideas of the Bill Gates and Elon Musks of the world, and to proceed with maximum caution, are simply suffering from a degree of arrogance and hubris that historically has preceded the fall of many a civilization.

Isn’t fear needed for survival?

Hi Mike,

I know we’ve been through this before, but I really think you need to at least acknowledge the strongest versions of the case made by those worried about AI.

You focus too much on the assumption that they are talking about selfish machines which see us as a threat to their survival. I agree with you that that’s not a major concern, but that doesn’t mean that there aren’t other reasons for concern.

Any time you ask a computer to do anything, you have implicitly given it a goal to work towards. The fear is that AIs will be ingenious in achieving those goals, so if we are not very careful in what we ask them to do, and in particular with how we define these tasks, then we may find a mismatch between what we want and what the computers are trying to make happen.

As you point out, we already face this issue every day as we deal with computer bugs. These can occasionally lead to dramatic consequences up to and including large scale loss of life, but are more usually a case of minor annoyance.

What we have not dealt with yet is a system with mismatched goals which can outwit us to ensure that those goals are achieved. That would pose a genuinely new and potentially existential threat.

I’m all for AI research and feel that the benefits outweigh the risks, but I feel you’re strawmanning the paranoid crowd or at least failing to ironman them as you probably should.

Hi DM,

No worries at all on us being through this before. I am posting on it again after all 🙂 I’m starting to feel a little like Jerry Coyne and his relentless posting on free will. I wouldn’t have posted on AI fear again if I hadn’t liked Tyson’s quote so much, or been so frustrated by Musk’s.

I don’t think I’m strawmanning them. Musk’s quote was, “With artificial intelligence, we are summoning the demon.” When I read Musk or Hawking on this topic, I don’t see the nuanced concern you’re expressing. I see concerns about evil AIs. Musk in particular has been especially hyperbolic lately. (I’m not sure whether he actually believes it, or is engaging in marketing hype for his AI companies.) And if you check out the comments at the HP article, you’ll see that most people’s concerns are malicious AIs, the results of numerous SF movies.

Just about every time I post on this, I do acknowledge the danger of unintended consequences, but I suspect I’m not acknowledging it strongly enough for you. While the danger is real, I guess I just don’t see us telling a super AI to do something without first having it tell us its proposed solution, or giving ourselves the option to tell it to stop, or even reverse what it’s trying to do. Most people have a healthy skepticism of allowing automated systems to make consequential decisions unsupervised. I think AIs will have to have a long track record before we relax that skepticism.

Hi Mike,

Which is why I’m talking about ironmanning them. I don’t know how naive their fears are, but these guys are pretty smart. However they express their fears to a lay public, I think we owe it to them to interpret them as charitably as possible, perhaps even to the point of making their argument more robust than their conception of it. I mean, there’s a lot of leeway in how you interpret “evil”. Bostrom’s paperclip-obsessed AI could be characterised as evil, for example.

Yes, I think that’s about right. I think you need to take seriously the idea that the existence of a superintelligent entity which has misaligned goals is a qualitatively new kind of risk, potentially much more dangerous than a typical computer bug.

True. But just because most people’s concerns are unrealistic doesn’t mean that the whole idea of AI paranoia is nonsense. There are both legitimate and illegitimate causes for concern, and we should be focusing on the former rather than the latter.

Those are the kinds of ideas we need to develop to mitigate risk, yes. But it’s not necessarily so simple. For instance, an AI might find a way to deceive us as to its intentions — even if it cannot lie, it can deceive by either giving us too many insignificant details so as to mask the significant ones, or if asked for a more condensed explanation it could simply omit the undesirable consequences.

As for telling it to stop, perhaps it could engineer a scenario where we couldn’t tell it to stop for whatever reason.

Remember we are dealing with an entity which is arbitrarily superintelligent. Trying to foresee and prevent all strategies that could circumvent our precautions is no simple task. Even if it honestly tells us what it plans to do, there’s no guarantee we could understand the implications of what it tells us.

Perhaps, but if there is an AI playing a long game it may wait until we become complacent before it strikes.

Hi DM,

I’m not familiar with the term “ironmanning” (Google threw up unhelpful contradictory meanings). I wonder if you would mind elaborating on what you mean by it?

“perhaps even to the point of making their argument more robust than their conception of it”

I’m all for interpreting other people’s views charitably, when they leave room for that charitable interpretation. But I perceive that what you’re advocating amounts to apologetics, essentially making excuses for them. You’re right, they’re smart guys. But they’re human, and can be as wrong as anyone outside of their field of expertise. And other smart people, like Tyson and Nye, disagree with them. I’m not going to ignore my own logical conclusions because some of the smart people disagree.

“I think you need to take seriously the idea that the existence of a superintelligent entity which has misaligned goals is a qualitatively new kind of risk, potentially much more dangerous than a typical computer bug.”

I do take it seriously. It has the potential to be very dangerous. But so do nuclear weapons, towing asteroids, researching new pesticides or crops, and many other types of powerful technologies. We as a species are harnessing increasing energies and powers that could destroy us. AIs will be a danger of that type, although I tend to think if we design them with reasonable precautions that they’ll actually be a protection against those dangers.

“an AI might find a way to deceive us as to its intentions”

“perhaps it could engineer a scenario where we couldn’t tell it to stop”

“if there is an AI playing a long game it may wait until we become complacent before it strikes”

In my mind, these scenarios aren’t AIs interpreting their instructions in unexpected ways, but AIs acting with malice. Which brings me back to the question, what would motivate it to “find a way to deceive” or “engineer a scenario where we couldn’t tell it to stop”? Certainly, if we design it to achieve X at all costs letting nothing, including us, stand in its way, then that might be a danger. But why exactly would we do that? Does anyone code systems that way today? (A virus writer might do it, but hopefully by the time such malicious idiots get access to superintelligent AIs, there will be enough other superintelligent AIs to protect us from intentionally malevolent ones.)

Hi Mike,

I was using the term “ironmanning” incorrectly. It seems that ironmanning is a different thing, where you misrepresent your own position as another one more easily defended. What I meant was “steelmanning”, which is to interpret an argument as charitably as possible, potentially even to the point of strengthening it.

I think you’re focusing too much on the particular people and not on the issue, which is the risks posed by AI. My concern is that by convincing ourselves that bad arguments about the dangers of AI can be ignored, we will fail to recognise legitimate ones. I’m fully open to the idea that Hawking and Musk et al have a naive and unrealistic idea of how an AI disaster might come about. But that doesn’t concern me. What I am more concerned with is the dismissal of the whole question because of the existence of such naive answers.

I’m with you in thinking that AIs might be beneficial, but I do think they need to be handled really carefully. A new pesticide cannot outwit us. Nuclear weapons can only destroy us if we allow them to.

Yes, this is the scenario. Because this is what all computers do. We program them to do X, and they will do precisely X, no matter how much we might plead with them later that X isn’t what we really intended. We can put into X all sorts of clauses about checking in with us and accepting new instructions and so on, but if we are not careful an intelligent system may see that the best way of achieving X will be to follow the letter of our instructions but to find a way to circumvent the spirit of them — not because the computer is inherently malicious but because this may be the most expedient way of achieving X as we have clumsily defined it.

Because following instructions to the letter is what computers are for. They have no independent common sense or evolved empathy or other emotions to allow them to sanity-check their instructions and infer what it is we actually want, they have only what we give them. Making systems smarter will not solve this problem, it will just expand their capacity to interpret and act on their instructions in ingenious and potentially disastrously unforeseen ways. We need to find a way to avoid these pitfalls. Nick Bostrom has suggested that one approach might be to design a system which will make it its job to first figure out what we would have wanted it to do if we were smart enough to know what to ask it, and then do that (although you still would need to avoid scenarios such as it turning the whole of the earth into more computing equipment to help it answer this question before it realises that this is not what we would have wanted!).

Because computers are ruthlessly literal followers of instructions, contra Tyson, it may turn out that the best way to avoid a disaster is actually to program certain pro-social emotions into our AIs. Unfortunately we have no track record of doing this successfully with systems smarter than ourselves, and one can imagine scenarios where this too goes horribly wrong.

Hi DM,

It seems to me that we agree on what the actual danger may be, but disagree on just how much to be alarmed by it. My perspective is that we do indeed have to be cautious in trusting automated systems with key decisions. That’s true today and will be increasingly true as they increase in capabilities. But I just can’t say that I see an avalanche of people eager to prematurely give that trust. It might increase as self driving cars become more of a reality, but I suspect there will be extreme caution for a long time on this.

We do disagree on this. I think the probability of an automated system making the jump, on its own, from strictly following our orders, whether those orders are what we meant or not, to attempting to circumvent or deceive us, is profoundly unlikely. I can’t say it’s impossible, because there are an infinity of possibilities that don’t violate the laws of physics, but I can’t see it as probable. At least unless someone has designed a system to be deceptive. But, again, that’s something that could happen today, if we let it.

Does increasing intelligence bring dangers? Sure. But it also brings protections. I do think we should give AIs programming that resembles pro-social emotions (Asimov: a robot will not allow a human being to be harmed, etc), but I don’t think we should attempt to recreate those emotions exactly as they exist in humans or animals. That, it seems to me, would be playing with a fire that we don’t need for the vast majority of the benefits AI can provide.

Hi Mike,

I don’t see that as such a jump. It could be strictly following our orders even as it deceives us, because we may have inadvertently and indirectly ordered it to deceive us.

We see this kind of thing with genetic algorithms. I trot out these examples from time to time, but there are cases of “ingenious” “cheating” by “intelligent” computer systems circumventing our desires while following our instructions to the letter with surprising and unforeseen results.

A first example is of a system tasked with designing a digital circuit to output a signal as close as possible to a sine wave. The system did so with a circuit much simpler and with output more perfect than the experimentalists could understand. It turned out that the circuit operated by effectively forming an antenna out of a series of wires which was picking up a sine wave signal from a nearby source. Clever, unforeseen and completely against the spirit of what the system was asked to do.

Similarly, there was an algorithm which was tasked with designing a robot body consisting of joints (not wheels) which would cover the greatest amount of horizontal distance in a given time frame, the intention being to see what kinds of rapid locomotion could be evolved. One of the solutions was simply a tower which fell over, moving the centre of gravity horizontally very rapidly in a short time but which is completely useless as a means of locomotion.
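To make the falling-tower result concrete, here is a minimal toy sketch in Python. It is not the original experiment: the material budget, the walking speed, the time window and the simple hill-climbing search (used here in place of a genetic algorithm) are all assumptions made up for illustration. The only thing it shares with the real case is the objective, "move the centre of gravity as far as possible horizontally in a fixed time", which says nothing about locomotion.

```python
import random

BUDGET = 10.0       # material to split between tower height and legs (made-up units)
TIME_WINDOW = 1.0   # seconds allowed for the "race" (assumed long enough to topple)


def displacement(height):
    """Horizontal distance the centre of mass covers within the time window.

    Toy physics, not a real simulator: legs walk slowly, while a rigid
    tower that tips over carries its centre of mass half its height sideways.
    """
    leg_mass = BUDGET - height
    walked = 0.2 * leg_mass * TIME_WINDOW   # hypothetical walking speed per unit of leg
    toppled = height / 2.0                  # centre of mass of a toppled rigid tower
    return walked + toppled


def evolve(steps=2000, seed=0):
    """Simple hill climbing: keep a mutation only if it scores higher."""
    rng = random.Random(seed)
    height = 0.0  # start as a pure walker with no tower at all
    for _ in range(steps):
        # Mutate the design, keeping it within the material budget.
        candidate = min(BUDGET, max(0.0, height + rng.gauss(0.0, 0.5)))
        if displacement(candidate) > displacement(height):
            height = candidate
    return height


best = evolve()
print(f"height={best:.2f}, legs={BUDGET - best:.2f}, score={displacement(best):.2f}")
```

Under these assumptions the search spends nearly all of its material on height and almost none on legs, because the score as written rewards toppling more than walking. Nothing in the code is malicious or even clever; the unwanted design is simply the best answer to the question that was actually asked.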

This is just with dumb systems confined to a very specific set of problems, but it’s not hard to see how it is far from a trivial problem to specify for a computer what you want it to do in such a way that it will actually solve the problem you have in mind in a way you find satisfactory. With intelligence, you open up a vast array of new possibilities, exponentially increasing the number of ways we can be surprised.

And, in a way, that’s what we want. If we couldn’t be surprised by the system then it would be of limited use, but the risk is that in such a powerful system an undesirable interpretation of our requests could lead to very bad surprise indeed.

Hi DM,

Your examples are instructive, since they represent existing systems. Has anyone put such systems in charge of the power grid, or the nuclear response system? How likely will we be to do so in the future? Will the danger be worse than a key military person going insane and unleashing weapons of mass destruction?

There are definitely dangers. It certainly makes sense to be cautious, particularly with new intelligent systems. But I perceive that this is a caution that most people, particularly engineers, understand.

Maybe you see the Frankensteinian fears of Musk, Hawking, and others as useful for guarding against the unintended consequences danger. Perhaps they are, but I also see serious downsides, which we’ve already discussed.

Hi Mike,

I don’t know. We may over time habituate to letting AIs do more and more.

But the other risk is that an AI will find a way to take control we don’t mean for it to have, not out of selfishness but because it helps it implement its goals.

Again, I’m not so much defending them as reacting against Tyson and Nye’s unwarranted confidence that there is nothing to worry about.

I suspect both Tyson and Nye would deny that they say there’s nothing to worry about, just that Skynet type fears are overblown. But I might be projecting my own position on them.

I do find it hard to imagine a human-level (or greater) AI without emotions, or something akin to them. You talk about “programming” such an AI, but isn’t it more likely that we would teach or evolve such a system? Unpredictability seems to be a part of the deal.

Exactly. An intelligent machine is supposed to find problem solutions that go beyond pre-programmed algorithms. E.g. if a machine designed to play chess decides to play tennis instead or ride a bicycle, it’s an intelligent machine. In other words, intelligence implies that a machine would do something that it is not expected to do. Machines with unpredictable behavior are not called “intelligent”. They are called “broken”. They don’t get admired for their unexpected behavior. They get fixed or destroyed because they don’t serve the purpose they were designed for. With this in mind, I believe intelligence cannot be created. But it can appear on its own, quietly evolving from something that was not considered intelligent before.

I think this is a fundamental paradox with AI. The unpredictable component of intelligence is what makes it, well, unpredictable, and therefore a cause of some degree of fear.

Steve, I suppose it depends on what we mean by “human-level”. It’s easy for me to imagine an AI with as much intelligence as a human without human emotions, including the flexibility to do new things. Its motivations for doing those new things would be different than a human’s, since a human’s motivations come from human emotions.

agrudzinsky, I think the unpredictability comes from a being having its own agenda. That’s what an AI probably wouldn’t have without human or animal emotions or instincts. Except for experimental cases, I can’t imagine why we’d want everyday AIs to have an agenda separate from its owner’s or user’s. (Although an AI owned or controlled by someone else might well have a different agenda than ours, but it should be that controlling human’s agenda, not its own.)

What about the “programming” question though? Tyson also uses this word – programming emotions, he says. I don’t believe that’s how complex AI will be developed. I think it will be evolved, through some selection process, and taught how to do tasks.

If we’re designing the selection process, aren’t we effectively programming it? Isn’t programming emotions into us what natural selection has effectively done?

Personally, I think the popular sentiment that we’ll only be able to do it through some form of evolution is giving up. It implies that AGI can’t ever be understood, that there’s some magical essence to it that will forever elude us. We’re probably decades away from achieving it, perhaps centuries, but assuming we never will strikes me as excessively pessimistic.

I tend to doubt evolution will give us a free pass to avoid the hard work on this. To design a selection process that gives us what we want in a reasonable time frame, it seems like we’ll have to understand the desired result.

I think it is quite plausible that AGI can’t ever be understood, but this doesn’t at all mean that it has a magical essence. I don’t think a man will ever run faster than a jet plane, but this doesn’t mean that jet planes have some magical essence. It just means the magnitude of the ability we would need is beyond what is physically possible for humans.

AGIs would be very, very complex. I think there are limits to the complexity a human brain can understand. I think we can understand how it works in principle, or how little bits of it work, but I don’t think there’s any reason to be confident that we can ever really understand in detail how an evolved AGI thinks, to the point of being able to read its mind and understand its motivations and long term plans and so on.

“I think we can understand how it works in principle, or how little bits of it work, but I don’t think there’s any reason to be confident that we can ever really understand in detail how an evolved AGI thinks,”

It seems to me that we already have such systems. There are many people who understand the architectures of modern operating systems, but since OS 370, I don’t know that it could ever be said that any one person understood the whole thing in detail. My comment was about understanding the overall principles.

Right, but I think we may already understand in principle how the brain works. The workings of neurons are not particularly mysterious, as far as I understand. We also understand how neural networks can perform computational functions. What we can’t understand because it is too complex is how whole brains process information. Brains are not like operating systems, where there are very discrete parts that do very specific jobs with well defined interfaces. Brains are this big complex biological mess. It can’t really be understood reductively. You have to understand the whole thing at once or you don’t really understand it at all.

Or that is one somewhat extreme pessimistic view. It’s not quite true that we can’t improve our understanding by a process of reduction and analysis of discrete parts, because the brain is not completely homogeneous and does actually have specialised regions. But I think we can only do that to a point. And the same would go for any evolved (not designed) AI.

Most neuroscientists that I read state that we don’t really understand the brain yet. Oh, we understand how neurons fire, and synapses strengthen and weaken, and have some architectural insights, but we’re a long way from understanding it overall.

This post links to an article that illustrates what we’d know about computers if we could only investigate them the way we investigate brains.

https://selfawarepatterns.com/2014/11/01/the-tale-of-the-neuroscientists-and-the-computer/

I think we understand most of the principles of how neurons can process information and the low level mechanics of how neurons work. It’s the holistic view of how it all fits together that eludes us, and in that sense we don’t understand it.

Agreed. I do think we will eventually reach that understanding (although it might be generations away).

Haven’t you ever been annoyed by software that is “too smart”? The simplest example is an autocorrect suggesting all kinds of words except the one that you want and believing that it is “smart enough” to know what you intended to type.

Is that autocorrect “too intelligent” or “not intelligent enough”? I think those two phrases are often synonymous.

I would say it’s not being intelligent enough for the functionality it’s trying to provide. Autocorrect is frustrating because it works just often enough that it’s useful to keep turned on, but fails just often enough to not be trustworthy. Like weather predictions.

Another aspect that doesn’t get discussed much is what kind of physical capacity we might give an AI. A self-driving car is one thing; assigning complete control over a country’s nuclear arsenal is another. A desk-bound AI has less potential to do harm than one given a means of moving around and picking up objects. Presumably, AIs would be given more powers as they demonstrated responsibility, rather like I allowed my children to use scissors for several years before letting them loose with an electric hedge trimmer.

Agreed that a desk bound AI has less potential to do harm but if it has the potential to talk to anything at all with more agency (e.g. humans or other computers) then it has the power to influence and control more indirectly, potentially with a view to eventually gaining more direct control.

One way it might do this is by patiently demonstrating responsibility over many years so as to be rewarded with more trust and more power until finally it has enough and simply takes over. That amount of patience, forward planning and intelligence is not demonstrated by most children so the analogy, while certainly useful, does not quite give a completely satisfactory answer.

Ha! If we ever became that paranoid, we would have to start questioning our own intelligence 🙂

DM raises a good point – “computer systems circumventing our desires while following our instructions to the letter with surprising and unforeseen results”. I enjoyed the story of the tower that simply falls over.

A century ago, engineers set themselves the task of “finding a cheap and plentiful way to generate electricity.” Fossil fuels were the best answer. Now, we are reviewing the results and setting ourselves a new task: “finding a cheap and plentiful way to generate electricity that does not lead to catastrophic climate change.” This illustrates an important point: a true general intelligence does not simply solve problems, but sets itself new goals.

But where do those new goals come from? Our new goals come from our various evolved instincts (our programming). They’re what give us our own agendas. The urge to find an energy source that doesn’t wreck the environment ultimately comes from our survival instinct.

Where would an AI’s goal to wipe out humanity (or whatever) come from? For any example (such as “solve global warming”), consider carefully if you would tell an AI to physically implement a solution to that problem without getting your approval first. If you’re worried about it subverting that approval, where would the motivation for that subversion come from?

Is it possible that some fluke of logic could turn an order to research a disease into a deceitful dangerous plan? Sure, it’s possible. But it seems to me that that is an infinitesimal slice of possible outcomes, most of which just leave us with a buggy nonfunctional AI. And even if that fluke does lead to a murderous rampage, wouldn’t we have other AIs to protect us? (Aside and apart from the possibility that we haven’t enhanced ourselves by then.)

Ah, that is not what I meant. What I meant is that a true general intelligence (like human intelligence) operates in part by setting a goal, solving a problem, creating new problems, and then realizing that the goal needs to be modified.

If we don’t allow an AI to work in such a way, but simply to suggest solutions to problems that we set it, then we are using it like we use Google or Microsoft Word. That is not a true AGI. That is not dangerous in the way Hawking claims.

But a true AGI – one that we allow to set its own goals – would be potentially dangerous, in exactly the way that humans are already dangerous. I don’t think we should be greatly worried about an evil, deceitful AI, but simply one that produced unintended consequences. We should equally worry about humans doing exactly the same thing. We know that humans fail in all kinds of dangerous ways.

Hi Steve,

I don’t agree that a true AGI would set its own goals, because we don’t really set our own goals either. We set goals, but only as a means of fulfilling drives that are baked in by evolution and environment. Ultimately, our goal is to seek pleasure of various kinds, (including the kind that comes from altruism) and avoid pain of various kinds (including guilt and self-loathing).

When we build any AI, including a true AGI, we need to give it a goal (or equivalently a set of drives). It may identify subgoals as useful for meeting those fundamental programmed goals (the fear being that one such subgoal may be the subjugation of the human race).

I think a lot of the trouble comes in the phrase “program emotions into robots,” which is pretty broad and opens the door to a lot more questions than it answers. I guess that’s the point—to start a discussion, as it certainly did here. What counts as emotion? Will we be able to simply program “pleasure” for example in isolation from other emotions? And Steve brought up the physicality of AI…I wonder what role that would play in emotions? Why does the quote specify “robots”?

I can’t even begin to think of doomsday consequences from creating AI with emotions. There are just so many questions!

I think Tyson was attempting to communicate a difficult concept in a brief sound bite, which has inherent limitations. (I’ve tried to do similar things in Twitter conversations before, with pretty poor results.)

I see emotions as the evolved primal impulses that all animals have. They’re the programming coded into us across aeons by natural selection that makes us survival machines. I think what Tyson is saying is that as long as we don’t put survival machine programming into robots, the chances of them rebelling are limited.

I think he specifically mentioned robots in the sense of embodied AIs, those with some physical abilities. A video game AI who wants to rebel might not have many opportunities to actually do so. Of course, an AI who gets access to physical systems is essentially a type of robot.

It looks like Disagreeable Me has already gone over the territory with you that I would have. I agree with his views.

There is a two-fold process that puts us at risk: One is that we do grant computer-driven processes more and more power over our lives. Two is that as system complexity grows, systems design new systems (because no human could) and to some extent create their own goals.

Science fiction has well-explored how a system might, on a purely logical basis of goal-seeking, become a problem for us. A common idea is that the system, regardless of its programmed goals, decides its own survival is crucial to its ability to achieve those goals. A smart system might recognize that humans may seek to thwart that new goal, and that may logically cause it to deceive.

James Hogan’s The Two Faces of Tomorrow is a good study of an AI (built in orbit to avoid these very problems) with problematic goal-seeking behavior. They try to isolate it and control it, but being intelligent, the machine quickly (really, really quickly) realizes there’s a greater world out there and seeks to understand it to better fulfill its programmed directives. When it suffers a power loss (humans attempting to shut it down), it reacts to protect its power sources and develop more secure ones. Suffice it to say that building it in orbit almost isn’t enough. It is (in this case) only the machine realizing that some of its input comes from other intelligences that saves them.

The TV show Person of Interest gets a bit into this. Finch had to carefully program morality into The Machine to avoid inappropriate goal-seeking behaviors. (Which then raises the issue of that morality becoming corrupt.)

The thing is, if you’re talking about a true AI smart enough to be interesting and useful, you’re talking about something we really don’t understand. The human brain is fallible and imprecise. What will an intelligence be like that doesn’t depend on wetware? To have all knowledge instantly and accurately available, to process information at incredible speed, and potentially to control some kind of outputs?

There is an idea going around that a sufficiently smart AI could talk a human into letting it go free. What if an AI told you it had a cure for cancer, but you had to free it before it would tell you?

The problem with using science fiction as evidence for an argument is that SF authors are motivated to come up with a good gripping story, often reaching for the worst possible scenarios regardless of their probability. Frankenstein could have been a story of someone creating life, but it’s a lot more gripping with things going horribly wrong.

As I wrote above, it’s possible that an AI could mindlessly bring on the apocalypse with its interpretation of its programming. But that’s a very narrow sequence of events. I perceive it to be an infinitesimally small slice of the possibilities of what could go wrong. The overwhelming majority of the possibilities outside of that slice simply involve a non-functional AI.

None of this is to say that we shouldn’t use caution in the directives we give AIs. I’m not aware of anyone actually arguing that we shouldn’t.

“…SF authors are motivated to come up with a good gripping story,…”

Oh, absolutely! I would never present an SF story as evidence, but as suggestive if the story makes sense. (And Hogan is a pretty sensible author.) Many SF authors have been eerily prescient about the future. (I’ve always loved the story about the US Government being very curious who told Arthur Clarke military secrets about atom bombs and communications satellites. I’ve always believed one of SF’s great values was its ability to look accurately at our future.)

Frankenstein, of course, is really a parable about not messing with things we know not wot of! (Well, it’s actually the Prometheus myth about stealing secrets from the gods.) Hogan’s work is a lot more pedestrian! XD (It’s definitely rock-hard SF… I grinned once when I realized he’d just spent a page-and-a-half describing how an email got from the Moon to Earth. I know most find that kind of detail tedious, but I just love it! 😀 )

The goal is to create true intelligence. I’m not sure we can assume that succeeding in that results in something we can control. Well, ask any parent about controlling the intelligences they made. If we create true intelligence, as with any intelligence, how could it not have independent thoughts?

The movie Her asks an interesting question: What happens when super-smart AIs find us too dull and begin to prefer their own company?

This idea that, ‘well, we just don’t build emotion into them’ may miss the point. What if the kind of emotions we’re talking about here are inextricably linked with intelligence? What if desire and curiosity are direct products of intelligence?

To be clear I’m not preaching Certain Doom. I’m just saying (as you agree) we need to be really careful about this and be very aware of the dangers. If nothing else, we might be creating something that demands sovereignty and parity!

It depends on what you mean by “true intelligence.” If you mean intelligence with a human like personality, then yes, emotions may be part of the package. (Assuming we’re not satisfied with them just being faked.) But if you mean a system able to learn and deal with new situations, then I don’t see why human or animal emotions or instincts would be required. They’d only be required if we wanted that system to address things in a way similar to how humans or animals would.

Computers are demonstrating an ability to win chess competitions and Jeopardy games, and to drive cars, with no emotions required. Their capabilities continue to increase without those emotions. Might we eventually discover that the equivalent flexibility of human intelligence does require emotion? Since we haven’t gotten there, I can’t rule it out. I can only point out that emotions aren’t required at the current level of technology.

Of course, we have pervasive evidence from the animal kingdom that the dependency doesn’t go the other way; emotions don’t require intelligence. So the two traits aren’t inseparable. If we regard the gap between the current abilities of computers and human-level intelligence as a matter of degree, then intelligence and emotion appear to be independent.

I just finished a reply to your Hume post, and here I feel like we’ve switched sides! 😀

There your definition of emotion includes instincts and motivations. Doesn’t a chess program, or a Jeopardy program, or a car nav program, have motivation? They absolutely have objectives. In fact, in a way, they’re all pretty single-minded! 🙂

There is the phrase “intellectual curiosity.” Is that an emotion? Why wouldn’t a “true intelligence” have intellectual curiosity? An important point may be:

“It depends on what you mean by ‘true intelligence.’ If you mean intelligence with a human like personality, […]”

No, not talking personality here, just talking true intellect. The ability to think, to pass the Turing test — that’s the goal of hard AI. (I think that’s usually what most people mean by “AI” — something like Hal 9000 or Star Trek’s Data.)

The dividing line is something along the lines of having an original thought. A computer program today runs like a train on rails. It may switch to other rails, but nothing the program does is surprising. What happens when software becomes complex enough to “have an original thought” so to speak?

At that level, how could it not be intellectually curious? Anything functioning at that level, of necessity, has programmed motivations and drives just to achieve that level. It will, for example, have the “desire” to parse input into commands it can understand. It will have the “desire” to execute those commands. Computers already do this at a crude level. What happens when that level becomes more and more advanced?

“But if you mean a system able to learn and deal with new situations, then I don’t see why human or animal emotions or instincts would be required.”

Isn’t the “desire” to learn an “emotion” as you broadly define it in the Hume post? Isn’t learning and dealing with new situations exactly what you ascribe to emotions there? If we build machines that do this, then as you define emotion, it seems we’ve already given emotions to machines. It just seems that with more advanced machines, they’ll have more advanced motivations.

This first paragraph is a repeat from the other thread. In both threads, I’m using “emotion” to refer to human / animal drives, desires, instincts, and impulses, essentially the programming living organisms have coded in them from natural selection. Obviously AIs will have their own programming that will be to their logic processing as emotion is to ours.

Restricting the term “emotion” to human / animal desires isn’t necessarily my normal practice, but Tyson’s use of it that way allowed him to communicate a lot in a brief statement. You can call machine programming “emotion” if you want, but that just changes the discussion to one between human/animal emotion and machine emotion.

Regardless, there’s no reason computers would need the full range of human / animal desires. Some of their machine emotions / programming might resemble some human emotions, like curiosity, but there’s no reason to have them prioritize self concern and progeny concern the way humans and animals do. Their priority would be their designed purpose.

I’ll give you much the same answer: I contend that certain “emotions” (such as curiosity) come with true intelligence, so if we build true AI — a machine capable of an “original thought” — then we’re no longer in control of its “programming” and it may find drives and goals we don’t expect (or want).

If we create “AI” that doesn’t have that freedom of “thought” then we really haven’t created true AI.

I think curiosity might be a useful trait for an AI, depending on its purpose, but I don’t think I’d agree that it’s an essential one. A self driving car might be able to respond to a wide variety of road situations. It might learn about new traffic situations and how to deal with them. In that sense, I guess you could call it “curious.” But I wouldn’t want it to become interested in the Spanish civil war and take up a career as a historian.

On “true AI”, I guess it’s a matter of definition. I really don’t want an AI that will put its own needs ahead of mine, or in any way yearn to do so. I want one that is driven to do exactly what I purchased it for. Giving it a desire for self fulfillment is exactly what I mean by programming human and animal desires into it, and what Tyson means by programming emotions into it. In fact, doing so and then not immediately setting it free strikes me as monstrously unethical and begging for trouble.

I’m not sure if you’re understanding what I’m saying (maybe you do, I’m just not sure). This isn’t something we’d program in deliberately. I’m saying curiosity may be an emergent property of intelligence.

I completely agree that systems designed for specific tasks (such as car nav) will never be a problem. It’s the ones we build to pass the Turing test, the ones we build to be true intelligences, that could be.

I suspect that building such an intelligence won’t be like building a building — you create a plan and then build according to the plan. It will be like building a tree — you start with a seed and grow an intelligence. (Specifically, you need to train a neural net. Once we’ve grown a given intelligence, then we should be able to clone it, but I’d bet that creating the intelligence in the first place is a process (like educating a human).)

As a process, just as with a growing human, we won’t be in full control of what grows. We’ll try to shape it just like we do a child, but any parent knows that even little intelligences come with big wills.

I just can’t believe that a genuine intelligence is something anyone can build to careful spec.

We obviously can’t rule out the possibility that curiosity is emergent at some level of intelligence, but I see no real evidence for it. If you accept the proposition that modern computers have some degree of intelligence, it’s worth noting their utter lack of curiosity, unless programmed for it. I think it’s more likely that curiosity is, like all emotions, programming that evolved because it provided a survival advantage.

It’s possible that we might find growing or evolving an AI productive at a certain point, but I think it’s too strong to say we’d never be able to design such a system. Personally, I’ll be surprised if growing or evolving AIs ends up being very productive. I doubt we’ll get off that easy. It feels like the tactics of the people in the 19th century who, despairing of ever understanding aerodynamics, tried to build flying machines that worked like birds, complete with flapping wings.

On the comparison with educating humans, it’s worth remembering that humans aren’t born blank slates, but come with extensive programming (emotions, instincts, etc).

“If you accept the proposition that modern computers have some degree of intelligence, it’s worth noting their utter lack of curiosity,”

We’re talking about AI here, and I’ve been pretty explicit that I mean Turing Test AI. As such, computers aren’t any better than my calculator at being “intelligent.” As a counter example, nearly every living being with some level of intelligence is curious. Generally speaking, the more intelligent the animal, the more curious it is.

As I said before, I draw a very strong correlation between curiosity and intelligence. Don’t you find that highly intelligent people are generally also very curious?

“Personally, I’ll be surprised if growing or evolving AIs ends up being very productive.”

Okay. How do you think Turing Level AI will be accomplished? That would be a great discussion!

I’ve defended the Turing test in the past, but one criticism that I think is valid is that it tests for humanity as much as intelligence. I think we can have a capable intelligence that can’t pass the test. Heck, a human from a radically different culture from the judges probably couldn’t pass it. An alien from Andromeda certainly wouldn’t be able to.

I suspect the first system to pass the test will do so by brute force “fakery.” I put “fakery” in quotes because, until we have a working understanding of the architecture of the human mind, we can’t be sure that our “fakery” hasn’t created a “thinking” entity. Of course, once we do have a working understanding of the mind, we’ll know enough to build one. Personally, I think it’s only a matter of time (possibly centuries) before we achieve that understanding.

Unless of course there is an aspect of the mind outside of the laws of physics, a ghostly soul, and undiscoverable by science. I don’t think that’s likely. All indications are that the mind is a system that exists in the brain. But intellectual honesty requires that I admit we can’t completely eliminate the possibility until we understand and can recreate the mind. (Even then, many people will still maintain that the created mind is not a “true” or “genuine” one.)

FWIW, I use “Turing Test” as a short stand in for “interaction with an entity with the goal of determining whether that entity is an intelligent being.” There’s no specific Turing Test (that I know of); it’s a matter of whether some entity can have a conversation with you that convinces you that entity is a conscious entity.

Here’s the thing about a “working understanding of the architecture of the human mind”: that would seem to only get us as far as a new-born infant. The “hardware platform” and the “firmware O/S” that humans come with only gets us those instincts and motivations. (And if we’re really replicating the human mind, those “emotions” may be part of the structure.)

Another angle is a ‘working understanding of intelligence,’ and I’m not sure if that’s easier or harder. I’m thinking harder?

Anyway, I guess my point is that the structure of a brain or mind is one thing, but the connections formed over a lifetime of experience are imposed on that structure and are what make that structure useful. I’m not sure I see how to design that so much as grow it in some neural-net fashion. At least not shy of what amounts to mind-uploading.

But to take this home, you can create an intelligent system in human image by replicating the structure of our brains or minds, or you can try to create one on some other pattern. Regardless of how you achieve it, my very strong bet is that certain traits — such as curiosity — would emerge.

Look at it this way: If we create a true AI — a thinking, self-aware entity — now we have what either amounts to a slave, a child we “own,” or a free being. One is reprehensible, the next grows up often beyond our control, and the last never was under our control.

If we’re just talking smart machines that act intelligent, that’s a whole other kettle, and I’m with you: nolo problemo!

There actually is a somewhat specific test based on Turing’s 1950 paper. I talk about it in this post: https://selfawarepatterns.com/2014/06/11/what-does-the-turing-test-really-mean/

I suspect an entity won’t be able to convince us that it’s conscious, a fellow being, until it has a lot of the same programming that we do, or can at least fake it well. That will require a certain degree of intelligence, but intelligence can be there without that programming. I think for most purposes, that human / animal like programming won’t be very useful for most of what we want from an AI. Which is good since, as I’ve mentioned, I think it’s dangerous and ethically dubious. (Although probably not the fake version, which would be useful for user interfaces.)

On newborn infants, if we do an AI exactly like a human, that might be true. One thing is that an infant brain still has a lot of developing to do. And a lot of our innate instinctual predispositions don’t surface until later.

But I don’t know why we’d necessarily want to do it that way. Yes, we want an AI to be able to learn, but its programming, its instincts and innate knowledge, could be much more complete and closer to the final product than any animal’s. There is also probably a lot more of that programming that we don’t really want it to overwrite from its experiences. And, unlike animals, we could copy the learning of previous AIs into it.

I do think we have to be careful with mind uploading. I fear some uploaded entities much more than an AI. An uploaded human, or any social animal, would hopefully preserve its social instincts. But if someone uploaded a psychopath? Or a shark’s mind, and then allowed it to uplift itself? It seems to me that many of the fears about AI should be more focused here. Although hopefully the uploaded humans will be able to provide some protection from the uploaded sharks.

I don’t think self awareness by itself makes a fellow being capable of being considered a slave rather than a tool. Such an entity won’t care whether its existence continues or not. It’s only when they have self concern, a survival instinct, that I think we cross that threshold. Remember Douglas Adams’s pig that wants to be eaten.

The distinction between “true AI” and machines that “act intelligent” feels artificial to me. It seems like it allows you to reject any intelligence that doesn’t have the attributes you say should be there.

“There actually is a somewhat specific [Turing] test”

I meant there was no set list of questions. I’m aware of the conditions required for it.

“I think for most purposes, that human / animal like programming won’t be very useful for most of what we want from an AI.”

Do you see two separate categories: Human/animal “emotional” stuff and Pure Machine Intelligence stuff? (And that curiosity and ‘will to live’ belong in the former?)

I see them as fuzzy and overlapping. As I’ve said, I think any free thinking intelligence will be curious.

“[AI’s] instincts and innate knowledge, could be much more complete and closer to the final product than any animal’s.”

How do we achieve that instinct and knowledge database to begin with? Facts dataset, for sure, but it’s not just facts; it’s connections between facts, and contexts for facts, and weightings for ambiguous facts. Those comprise a substantially larger dataset.

It’s possible Gödel is involved. We are starting with facts (axioms) and building connections (theorems) on them (one way or another). It may be that there are (true) connections that cannot be generated from the facts alone.

“But if someone uploaded a psychopath?”

If that’s a possibility, why can’t an AI be psychopathic due to error or maliciousness? (If you’ll argue most program bugs result in gross malfunction, consider a working raw AI framework intended as a foundation for new applications. Imagine getting the upper layer wrong so while you still have a working AI, it’s functionally insane.)

Mind uploading assumes software can fully replicate human minds, with all their baggage. If that’s the case, then so can any given AI. Which means it’s possible to have problematic AI. If you grant mind uploading, you have to grant the potential for problematic AI.

“I don’t think self awareness by itself makes a fellow being capable of being considered a slave rather than a tool. Such an entity won’t care whether its existence continues or not.”

Are you suggesting programming slaves that don’t mind being slaves? What about enslaving uploaded minds? What makes one substantially different from the other?

“Remember Douglas Adams’s pig that wants to be eaten.”

Remember you said that next time you ding me for using a science fiction reference to support a point! 😀 😀 😀

“The distinction between “true AI” and machines that “act intelligent” feels artificial to me.”

You are here, I think, referring to the matter of experience and philosophical zombies? That really hasn’t come up in our discussion here… I don’t know that I feel a distinction matters in this case. Machines that act intelligent (that pass the Turing Test) are sufficient for this, I think.

It strikes me that the programming to successfully “act intelligent” may be close to (if not exactly as) the complexity of whatever it means to “actually” be intelligent. (Are you familiar with Kolmogorov complexity?) Assuming the latter even exists in machine form. I’m sure we’ll achieve some level of the former. (One thing I like about the former is mind uploading can still be denied.)

“Do you see two separate categories”

I do see human and machine programming as overlapping. The main thing is to avoid putting the self actualization stuff in the machines.

“How do we achieve that instinct and knowledge database to begin with?”

Tax software has operational knowledge that humans used to acquire only through years of studying. An AI doesn’t need the full human experience to be useful.

“If that’s a possibility, why can’t an AI be psychopathic due to error or maliciousness?”

The problem with a psychopath is that their own self fulfillment impulses aren’t counter-balanced by empathetic impulses. If an AI doesn’t have self fulfillment desires, then it’s just without empathy, as my iPhone is.

“Are you suggesting programming slaves that don’t mind being slaves?”

I’m asking why we would make machines that don’t want to do what we want them to do instead of making machines that do want to do what we want them to?

“What about enslaving uploaded minds?”

It’s a possibility. Once a mind has been uploaded, and if we sufficiently understand its working well enough to modify it, then we could make versions of that mind that like being, say, a sex slave. It’s one of the reasons I’m not sure everyone in a post singularity world would just live in a giant integrated virtual environment.

“Remember you said that next time you ding me for using a science fiction reference to support a point! 😀 😀 😀”

Ha! You’ve been waiting for an opportunity to use that one. I’ll just note that an offhand citation to a sci-fi scene whose merits we had previously agreed on isn’t quite the same as a long comment using science fiction as your primary source of support 😛 😛 😛

“If that’s the case, then so can any given AI.”

I’ve never said problematic AIs are impossible, only that the chances of them being problematic in a malevolent way are very low, unless we program the full range of human or animal desires in them.

“It strikes me that the programming to successfully “act intelligent” may be close to (if not exactly as) the complexity of whatever it means to “actually” be intelligent.”

That’s a possibility I don’t see how we can rule out. We may be able to judge it better when we understand brains better, although even then we couldn’t rule out that consciousness had come about through an alternate architecture.

I think I’ve heard about Kolmogorov complexity, but can’t recall much about it.

“Tax software has operational knowledge…”

We’re talking about AI, not tax software, which is an “expert system” at best, not AI. But consider even that for a moment: where does its tax knowledge come from? Isn’t there, in fact, a large army of people involved in analyzing tax information (as well as feedback on the existing system) and building that database?

And as I found this year, one of the best systems out there (H&R) wasn’t very good in my case, and this is after such systems have been around for a long time!

But part of my point is that complex systems such as this are extremely hard to get right. Even after years of working on the problem.

“The problem with a psychopath…”

You’re not understanding my point. Once you grant mind uploading — once you grant that a fully human mind can exist in the machine — you’re granting that any software can also act like a fully human mind.

“[T]he chances of [AI] being problematic in a malevolent way are very low, unless we program the full range of human or animal desires in them.”

This isn’t about what we don’t build in. This is about what’s possible — about what can go wrong or what someone malicious might do. Further, I think you may be misjudging what’s optional in a complex enough thinking process. I think some of it may be emergent.

Look, in granting uploading, you’re admitting dragons exist. You can say, “Well, just don’t breed them!” but someone will. Even, perhaps, accidentally.

“Once a mind has been uploaded, and if we sufficiently understand its working well enough to modify it, then we could make versions of that mind that like being, say, a sex slave.”

My mind just boggled. What’s the difference between that and brainwashing a human being into “liking” being a sex slave?

I think we have different ideas about the sovereignty of a mind. Assuming we’re talking about the same thing: thinking minds. I get the feeling you see a form of high intelligence — a form of AI — not deserving of sovereignty.

But I would call such a system mindless, and that’s not the point of AI. The point of AI is to build a mind. Really the whole point is to see whether machine minds are possible, because that says so much about our own minds.

“where does its tax knowledge come from?”

Where does anyone’s knowledge come from? Other humans, data written by other humans, or direct sensory input. We’re all products of our programming or inputs.

“you’re granting that any software can also act like a fully human mind.”

Have I ever asserted that an AI couldn’t eventually act just like a human being, with the appropriate programming? I just maintain that it’s not an inevitable result of intelligence. It’s a roll of the dice whether or not uploading will happen before a human like AI is possible.

“This isn’t about what we don’t build in. This is about what’s possible”

I thought this whole discussion was about to what degree we should be concerned about AIs. Lots of stuff is possible. Much of it is extraordinarily improbable. For example, it’s possible I could be hit by a lightning bolt before finishing this sente

“Look, in granting uploading, you’re admitting dragons exist. You can say, “Well, just don’t breed them!” but someone will. Even, perhaps, accidentally.”

I don’t doubt that someone will, eventually. But it’s not something that will happen by accident, and it won’t be what the first AIs are. By the time someone does, there will hopefully be an army of good AI to protect us, assuming we haven’t enhanced ourselves enough to protect ourselves by that point.

“My mind just boggled. What’s the difference between that and brainwashing a human being into “liking” being a sex slave?”

Brainwashing never alters our fundamental evolutionary programming. But an uploaded human could conceivably be reprogrammed at their core to value their masters’ well-being and not their own. Obviously that’s a disturbing prospect. But it may be a danger that humanity will have to face. Insist on being uploaded to your own hardware.

“But I would call such a system mindless, and that’s not the point of AI.”

Again, you can define “mind” in such a way as to have the properties you’re arguing for. The point of AI for pure research and the point of AI for commercial interests are two different things. Some AI research wants to create a human like mind. Commercial AI interests want to produce usable products. It’s the usable products that will be pervasive. A phone that rebels through concern about its own survival probably won’t be a market success.

“We’re all products of our programming or inputs.”

Indeed. The point is: a great deal of the programming was achieved as a process.

“It’s a roll of the dice whether or not uploading will happen before a human like AI is possible.”

Or if it will happen at all. Yes. But if we’re granting it as a reality, then we’re talking about full machine minds. If a machine can run an uploaded mind, it can run an invented mind. Uploading requires we understand minds well enough to do this.

In this context, malevolently created, or accidentally created, or functionally insane, AI is a definite possibility. How can it not be? If we grant that human mental function can run in the machine, why can’t human mental malfunction also?

That level of AI — should we ever achieve it — offers some real challenges. But there are lesser levels of AI all the way down to expert systems. I think one problem we have in this discussion is trying to include too many levels of AI. I totally agree there’s nothing problematic with anything on the “expert system” levels.

“I thought this whole discussion was about to what degree we should be concerned about AIs.”

Yes. And if AI achieves thought — the goal of AI research — then these issues I’m raising become real concerns.

“But it’s not something that will happen by accident…”

What do you think a programming bug is?

“Brainwashing never alters our fundamental evolutionary programming. But an uploaded human could conceivably be reprogrammed at their core to value their masters’ well-being and not their own.”

A form of brainwashing vastly superior to anything we have now because of the limits you just mentioned. Do you see why the sovereignty of minds is of crucial importance? It’s bad enough when hackers steal your credit card numbers!

“The point of AI for pure research and the point of AI for commercial interests are two different things.”

So no concerns either about that one lab that’s working on a virus that could kill us all?

How many AIs does it take for a disaster?


Wyrd,

I could respond to each point, but I think we’ve gotten into a repeating rut on many of them, so I think I’ll focus on what I perceive to be a new part of the conversation.

On the sovereignty of minds, I agree, once it has sovereignty. If an uploaded human mind is altered to not care about the things it cared about previously, that seems like a grievous violation of its rights. (Unless of course, the human mind itself is the one who made the alterations.)

But if an engineered mind has never cared about its own existence, and we never alter it to care about its existence, have we violated its rights by not giving it that concern? If it is a robot whose mission is to do dangerous work until it gets destroyed, and it doesn’t have that self concern, are we mistreating it? Even if it itself has no concern about that mistreatment?

If it does have rights, what exactly gives it those rights?


“But if an engineered mind has never cared about its own existence, and we never alter it to care about its existence, have we violated its rights by not giving it that concern?”

This is the point I don’t seem able to get across successfully…

You seem certain that advanced AI is possible without also involving a level of complexity that may involve emergent behaviors and which — merely in virtue of its complexity — may demand we consider its sovereignty.

There is a range of complexity and capability from expert systems to full AI. At some point there is a threshold where you have to begin asking questions about what defines a sovereign intelligence (that is, one deserving “human” rights).

As we experiment with genetics and cloning, we’ll have to ask questions there about what constitutes sovereign biological life. Here we’ll have those questions regarding intellectual life.

For I am not so certain advanced AI is possible without the other stuff. Frankly, Amigo, I’m a bit surprised you seem so certain. I know you’re big on emergent behavior and the potentials of structure. I don’t understand why you’re so certain the necessary complexity of AI doesn’t lead to more than obedient jumping through hoops.


Wyrd, you’ve articulated your point quite well. It’s a common sentiment. That perhaps to achieve “true intelligence,” that intelligence must possess the same qualities as an organic intelligence, such as self concern and other animal instincts.

Let me ask you this: Why do you think that? Given our experience so far with computing systems, what about them leads you to that conclusion? Do you see any latent indications of it in current systems? If not, what leads you to conclude it might eventually be required or be emergent at some more advanced point?

Or are you just looking to have me acknowledge the logical possibility? If so, I acknowledge it. But I’m not inclined to think it’s probable without some evidence. There are an infinity of logical possibilities. Most of them are not reality. It’s also logically possible that “true intelligence” requires an organic substrate, although again I’m not currently inclined to think that’s probable either. It seems to me that when considering these possibilities, we have to be mindful of the human impulse to project our own nature on others.


“That perhaps to achieve ‘true intelligence,’ that intelligence must possess the same qualities as an organic intelligence, such as self concern and other animal instincts.”

But that’s not what I’ve been saying!

You said Hume used “passions” in a way you both interpret broadly to include things like motivation, hunger from appetite, or curiosity. Essentially any drive is a “passion” and you’ve pointed out nothing happens without drives.

So:

I disagree these “passions” are unique to human thinking. I think some of them may be embedded in higher forms of any thinking, regardless of “substrate.”

As such, I think there’s a good chance they emerge as AI complexity grows and software begins “thinking” (for some reasonable definition of “thinking”).

“Why do you think that?”

The eerily organic behavior of certain simple fractal and robotic systems shows how simple goal-reaction algorithms result in behavior we associate with the natural world and living things. Fractals also demonstrate how complex results can come from very simple algorithms.

“Do you see any latent indications of it in current systems?”

Yes. I see crude forms of “acquisitiveness” in how garbage collection and memory management routines work. I see crude forms of “confusion” and “working through ambiguity” in how compilers work. Fuzzy logic control of cranes and hoists exhibits eerily expert human ability in how it handles inertia from loads. (And don’t Roombas seem “industrious” and “dedicated”? 🙂 )

And these are all very simple systems.

“If not, what leads you to conclude it might eventually be required or be emergent at some more advanced point?”

I’ve never said “required” — that’s part of what I’m not getting across. It’s not that we need them; it’s that they may be unavoidable.

Perhaps the problem is lumping so many behaviors into one basket. Jealousy and greed and anger are one level, and I think everyone would classify them as emotions (even as passions). You can see rational thought being separate from them.

But intellectual curiosity or intellectual hunger — I’m not sure those are emotions that can be separated out from thought. Without the hunger for an answer, why would you ask a question? Where is thought without them?

My bottom line: As artificial minds become more complex how could they not experience emergent behaviors or potentially suffer from mental errors induced by their programming or their experiences?


Wyrd, I’m struggling to come up with a response that doesn’t simply repeat a lot of what I’ve said multiple times already on this thread.

I fear we’re just at that old agree-to-disagree stage. (In truth we were probably at it some time ago 🙂 )


That’s fine, although it’s not clear to me we’re disagreeing on the same things.


I think you’re right about that. Don’t worry. I’m sure we’ll have opportunities to debate it again. 🙂


This is one model of an intelligent system – mimicking human intelligence in electronics. Another approach might be to build a core architecture that thinks, and then to give this module access to a more traditional digital computer network for storage of memories, thoughts, etc.

My experience of being a human is that memories in particular are ponderous, distorted, hard to access and subject to decay over time. Why build this limitation into an AI? Instead, build a core “thinking system” coupled to a reliable, rapid-access, massive memory store.


Steve, how would you define “thinking system”? What makes one system a thinking one and another one not a thinking one?


I would suppose that with a general-purpose AI, you could give it any kind of information or question and it would respond in some way that we would think appropriate. This isn’t the Turing Test. It doesn’t have to fool us into thinking that it’s human. I would imagine that it could understand language, could then internalize the logic of the question asked, and would be capable of working out a suitable answer. It might also be motivated to ask its own questions, without waiting for human input.


Thanks. I could ask you what you mean by “understand language”, “internalize the logic”, and “motivated”, but I think the main thing I would say about all of these things is to think about what they really mean. Obviously, we don’t understand the human mind well enough to map the data processing involved in each of these, but I tend to think that’s what it is. So, to me, keeping a thinking component separate from the other data processing components is just creating a system in the shape of a human mind and then having it access other data processing subsystems. That might be a productive architecture, but I suspect there will be more efficient ones.


Of course! (Unless it turns out not to work and that “fuzzy thinking” is somehow required for intelligence. There are some things where too much precision actually hurts.)

The interesting question is: If you have something that “thinks” (for some reasonable definition of “thinks”), and it has perfect and vast memory, and operates at extremely high speed… what will it think of us? Especially once it starts networking with other AIs!

(Have you seen Her? Best part of that movie is the ending! 🙂 )


I did see ‘Her’. Can’t say I was too impressed. If I had Scarlett Johansson in my computer, I’d be impressed by its humanity too.


No, it’s not a great movie, but it poses some interesting questions. I really didn’t care for it while watching it, but thinking about it later I found it raised some good points.

Given the premise and granting the O/S as possible, the movie’s ending seems the only logical conclusion.


(FWIW, I used to really like deGrasse Tyson, but over time I’ve begun to think he’s a bit of a buffoon. I like that he’s pro-science, but he’s said things that have raised my eyebrows more than once (like this time — to be honest I think he’s just as clueless as Hawking and Musk or any of us on the topic).)


The only thing I recall hearing from Tyson that I outright disagree with was his blanket dismissal of philosophy. Most of the other stuff he’s said, I either agree with or at least have some sympathy for where he’s coming from.

That said, I think all of them do demonstrate that just because they’re brilliant in their fields, it doesn’t mean they necessarily know what they’re talking about outside of it.


I generally do regard and respect the guy. His dismissal of philosophy was a pretty big count against him in my book, and I’m not always good at separating out pieces of a thing. Dawkins is a good example. I have a hard time liking Dawkins, but the irony is that I do agree with most of what he says. It’s that I disagree with the other bits SO much.

Still, I don’t mean to paint too strong a picture. He gets huge points for pushing science, and he seems very likeable. I got a kick out of how he pointed out Jon Stewart’s Earth turns the wrong way (still does). I’ll take him any day of the week over Michio Kaku!

I do think massive popularity changes a person. They begin to act in ways to preserve that popularity — they become more generic. Somehow I liked him better when no one really knew him. That could be me… I find mainstream is usually a place I don’t want to be. [shrug]


I think Tyson sees his role as primarily a science evangelist. As a result, he tends to shy away from anything that might compromise that role, notably anything that might make him look like an evangelist for something else. I can’t blame him too much for that. When Dawkins comes out with a science book these days, all anyone focuses on is what it says about religion.


Good point! Agreed!


Seems to be my day for reading Quanta Magazine articles that remind me of blog posts here. Here’s an expert in the field who articulates some of the same concerns voiced here:

https://www.quantamagazine.org/20150421-concerns-of-an-artificial-intelligence-pioneer/


My concern (especially after watching Ex Machina) is that researchers found that robots they created learned to lie. They weren’t all that sophisticated, just goal-oriented.

Gee, what could go wrong?

Anyway, aren’t the robots that learned to lie now qualified to run for public office or be CEO at a major corporation? Well… OK, a little work then, but they are on their way, aren’t they?

You know, you’d think that we’d have the answer to this just by watching the behavior of the Fortune 500.


Hi BOM,

Thanks for stopping by.

If you’re talking about this experiment: http://www.popsci.com/scitech/article/2009-08/evolving-robots-learn-lie-hide-resources-each-other , I think it’s worth noting that this was a situation where scientists gave the robots a directive to find “food” and then deliberately “evolved” them to see what would happen, including the communication mechanism that allowed them to start lying.

While it’s an interesting experiment for modeling evolution, I’m not sure how useful it is for producing commercial technology. I agree that trying to use it for that purpose could be dangerous.
