I’ve written numerous times here that I tend to think that AGI (artificial general intelligence) and mind uploading are both ultimately possible. (Possibly centuries in the future, but possible.) I’ve also noted that we’ll have to have a working understanding of the mind, how it works, how it is structured, before we can do either, but that gaining that understanding is possible.

I often get push-back on this idea. One of the arguments I commonly hear is that perhaps the mind doesn’t have a structure. Maybe it’s just an unstructured mess from which our consciousness arises, or the structure may be so complicated that it’s forever beyond our ability to understand.

I think this is unlikely, for two reasons, one broad and one narrow.

The first, broad reason is that everything else in biology follows recognizable systems and subsystems and has a systematic structure that we’ve been able to discover. (Think organs in a body or cell machinery.) Many of these systems are profoundly complex, but they haven’t shown themselves to be undiscoverable. Arguing that the mind is unstructured is arguing that everything is systematic until we get to the mind, then the rules change. It’s possible, but doesn’t seem likely to me.

To discuss the second, narrower reason, it’s necessary to mention a couple of facts about brains. Before I started reading neuroscience, I thought of the brain as a computer, and the mind as the software of that computer. This is a common conception held by many programmers and other people knowledgeable about computing. While it could be broadly true, there are some crucial caveats to keep in mind.

The division between hardware and software is an innovation of modern computing. Interestingly, the earliest electronic computers didn’t fully have that division, often requiring physical rewiring to be reprogrammed. The ability to load and reload new software dramatically increased the flexibility and usefulness of computing systems.

This ability is why, when we speak of the architecture of an operating system or application software, such as Microsoft Windows, we generally do so without reference to the hardware that it’s running on. To be sure, the architecture of an operating system does address the hardware, in the case of Windows with an architectural layer called the HAL (hardware abstraction layer). The HAL and device drivers deal directly with the hardware so most of the software system doesn’t have to.

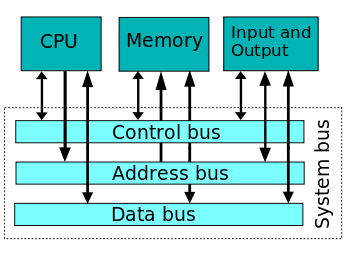

In addition, modern hardware includes a system bus, a mechanism that allows any component of the system to talk to any other component without regard to how near or far it is on the system board. And memory chips are random access, generally allowing any desired memory location to be accessed by a memory address.

For these reasons, pointing to a specific part of a CPU or memory chip for a piece of Windows functionality is a meaningless exercise. Yes, the code that implements that feature does physically exist transitorily in those chips, but the exact location of that code right then is not particularly meaningful. Its exact location varies for a wide variety of reasons, from the timing of when it was loaded, to various transitory needs of the operating system.
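As a rough illustration of that point, here is a minimal Python sketch. Everything in it is hypothetical: `SOURCE`, `greet`, and `load` are names made up for the example, and it says nothing about how Windows itself loads code. It loads the same source text twice and shows that the two copies behave identically while living at different places. (In CPython, `id()` happens to be the object's memory address; other Python implementations only guarantee that the two values differ.)

```python
# Hypothetical stand-in for "a piece of functionality", shipped as source text.
SOURCE = "def greet(name):\n    return 'Hello, ' + name\n"


def load(source):
    """Load the source into a fresh namespace, like loading a program anew."""
    namespace = {}
    exec(source, namespace)
    return namespace["greet"]


# Two separate loads of exactly the same code.
first_copy = load(SOURCE)
second_copy = load(SOURCE)

# The logical behaviour is identical...
same_behaviour = first_copy("Ada") == second_copy("Ada")

# ...but the two copies are distinct objects stored in different places.
different_locations = id(first_copy) != id(second_copy)

print(same_behaviour, different_locations)
```

Knowing where either copy sits tells you nothing about what `greet` does, which is the sense in which the physical location of software is not meaningful.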

It’s often noted that if we didn’t understand software, examining computer hardware in the same way we typically examine the brain would tell us very little about the software architecture. And that is right, if brains worked like modern computers.

However, brains don’t work like that. There’s no mechanism to reload software and no system bus. The hardware-software divide doesn’t exist. To be clear, there’s extensive evidence that brains are information processing systems, but their architectures are very different from general purpose digital computers.

What this means is that specific functionality in the brain exists in specific locations. The functionality in most locations depends on what’s connected to that location. For example, vision processing happens in the occipital lobe. It doesn’t happen there because there’s anything necessarily special about the neurons in the occipital lobe, but because that’s where the vision processing nucleus of the thalamus connects to, and that nucleus does vision processing because that’s where the connections from the retinas go.

The same applies to the other processing centers in the brain. The parietal lobe processes touch sensations from throughout the body, with specific parts of the parietal lobe dealing with specific body parts. The temporal lobe processes auditory information, the frontal lobe plans and initiates movement, the cerebellum provides fine motor coordination, and so on. The parts of the brain that process sensations from feet are always in the same location. The parts that recognize certain visual shapes or colors are also generally in the same location.

There is some variance between individual brains. For instance, the language centers are usually in the left hemisphere of the brain but a few people have them on the right side. (The functionality of the two hemispheres, each controlling half of the body, is similar but not identical.) But these variations are rarely major. The location of functionality is dependent on where the connections from the senses come in, where the motor control connections come out, and where connections from other brain regions connect, and all of that is similar in individual brains of the same species.

Of course, the brain has plasticity, so if someone is blind during development, many of the neurons that typically service vision, in the absence of any signals from the eyes, can get recruited to help in the processing going on in adjacent areas. But in a healthy person, the majority of the functions can be physically identified to a specific part of the brain.

Now, there are areas of the brain which do appear to have specialized hardware. The neurons in the hippocampus are reportedly able to strengthen and weaken synapses faster than in other regions, which no doubt aids its role in long term memory storage. The amygdala, given its role in generating primal emotions, probably has more hard coded functionality than elsewhere in the brain. Most of this specialized hardware appears to be outside the neocortex, in evolutionarily older structures. The neocortex regions, again, get their specialization by what connects to them.

Memories themselves are stored in the patterns of synapses (connections between neurons) throughout the brain, with visual portions of a memory stored in the visual processing centers, auditory portions in the auditory processing centers, etc. A full memory has to be retrieved (via connections) from all these regions.

The coordination of consciousness, sleep, and attention happens in the thalamus, which also appears to be heavily involved in integration of sensory information and motor control. The thalamus outsources most of its work through its extensive connections to the neocortex, which essentially serves as a gigantic expansion substrate for it. (The thalamus is often described as an information hub for the neocortex, but given its many other crucial functions, I think it’s better to see the thalamus as the main system calling subroutines in the neocortex.)

All of which is to say that studying brain modules and how they are interconnected, in other words studying the structure of the brain, is also studying the software, the architecture of the mind. This is the second reason I mentioned above. Without the software-hardware divide, the physical structure of the brain is also its logical structure, and science already has some understanding of it.

None of this is to say that our understanding of all these areas is anywhere near complete. Indeed, there are still areas of the brain whose purposes are not well understood at all. And given the enormous complexity, 86 billion neurons (most of which are in the cerebellum) and up to a quadrillion synapses, no one has yet managed to trace the neural circuits throughout to see precisely how a particular thought or decision happens.

But new imaging techniques are constantly being developed, and detailed knowledge increases each year. A final understanding is a long way off, but there’s no fundamental barrier to it being achievable.

rubbish in rubbish out, amen

LOLS! Hopefully you’re talking about a computer and not the post.

It seems shortsighted to say that just because something is difficult to understand, we will never understand it. Unless quantum physics and the uncertainty principle play some crucial role, we can figure this out. And even if we find out that our minds are fundamentally quantum, that would still tell us a heck of a lot about how they really work, even if our understanding can never be perfect.

Good point. If quantum physics does play a role (other than the role it plays in everything), it will likely behave just like the quantum physics we already understand. If it doesn’t, that would be a new physics that we’d have to work to understand.

The possibility of exotic physics playing a role doesn’t get mentioned in the mainstream neuroscience books I’ve read. Their discussions at the lowest levels are usually focused on electricity, chemistry, and molecular machinery.

Penrose proposes mind is found in the gaps between synapses, in a quantum state, and is released at death. I haven’t explored his idea any further than this, but would be interested to hear if he’s devised some test for this.

Hmmm. Do you know if he meant in the synaptic cleft between the pre-synaptic and post-synaptic neurons, or along the neural membrane between the synapses?

I don’t really know. Let’s let the man explain it…

Microtubules.

Thanks for the video. I’ve listened to Penrose’s thoughts on this before. He gave a Google talk years ago where he spent over an hour on it. A lot of people have urged me to read his book.

The logic seems to be that consciousness is mysterious and the wave function collapse is mysterious, therefore maybe they happen in the same space. And microtubules, which are a common part of cellular machinery (not just in neurons), are where this could conceivably take place.

Well, perhaps. But as far as I can see, there’s nothing to drive this proposition, no observation that makes it necessary, just the feeling that consciousness can’t be the result of computation. It’s not a hypothesis that shows up in mainstream neuroscience books.

No, which is why I’ve never really looked too much into it. Still, good project for the Templeton Foundation to dump some money into. No harm investigating, especially when it’s private money.

Agreed. It very much sounds like their kind of project.

“The logic seems to be that consciousness is mysterious” – Well, as no one knows what it is, then that seems a pretty good word for it. Why should there not be some phenomena that remain mysterious to our ape brains, subject to cognitive closure? Of course, we can have Dennett’s Consciousness Explained Away if we must, but it’s not for me.

A lot of people love mysteries in a way that they’re not eager to see them solved. I love them too, but in my case that love comes from the anticipation of eventually solving them. I have little sympathy for those who regret Newton taking the mystery out of the rainbow.

That said, no matter how much objective information we collect about the system, it will never add up to the feeling of actually being that system. Many see that as a problem (the hard problem), a mystery that we may never be able to solve, but I just see it as a fundamental fact.

Exactly so, Mike, which leaves us with the issue of how we would ever know if we were successful in mind uploading – inference alone?

My last comment appeared out of order for some reason, as if it were the first.

Yeah, WordPress has been screwy lately on ordering comment replies.

How would we know if we were successful with mind uploading? We’d never know beyond all doubt. Of course, none of us ever knows beyond all doubt that other minds exist. I think if the uploaded mind communicated to us that it was the original, and we had good reasons based on our understanding of the human brain to think it was, that’s probably the best we can hope for.

I think if uploaded-Grandma effectively acts like original-Grandma and knows things that only original-Grandma could have known, most people are going to accept uploaded-Grandma as Grandma. Of course, some people never will, and will insist that uploaded-Grandma is a zombie, a doppelganger.

That’s clever; you re-ordered the comments – did you do that or did WordPress?

Anyway, uploaded Grandma would have to be ‘born’ with all her memories in place surely? And she’d also have to be uploaded with all her proclivities and dispositions. Otherwise, she wouldn’t be a facsimile Grandma – right?

Haha. No, it’s all WordPress. The wrong sort order thing has been happening intermittently for the last week or two. I’ve seen it on other blogs as well. Hopefully someone at WordPress is working on it.

I’m not quite sure what you mean by “facsimile”. If you mean an effective copy, then agreed. If you mean a fake, then it all depends on what you consider to be the self, which I know you’ve blogged about before.

By the expression ‘mind uploading’, I take it that you mean (re)producing a replica which is as close as possible to the original, i.e. a facsimile. A mind uploaded without memory and psychological predispositions could never come close to being a replica. Therefore, uploaded Grandma could not demonstrate to us that she was conscious based on her behaviour and displays of knowledge. We come back to not knowing if we have succeeded in our objective. I don’t see the putative ‘self’ as being relevant, Mike, other than that the uploaded Grandma would need to be similarly deluded in imagining there to be some enduring agent within or about her that egoically (reflectively) imagines itself to be the experiencer of experience. [Infinite regression]

I think it comes into whether or not to recognize the uploaded version as the same person. Assuming the uploaded version’s internal processes are a faithful attempt to replicate the original’s, and it behaves close enough that those who know her conclude it’s the same person, I don’t think there’s any firm ontological answer as to whether she’s “really” the same person. Some people will conclude that she is, and by their criteria, they’ll be right. Others will insist that she isn’t, and by their criteria, they’ll be right.

I think the ‘same person’ question is inapposite. It takes the concept of the original grandma and presupposes that she herself has some fixity about her which could be compared to the replicant. As her physicality loses some 70,000,000,000 cells every day, and as her mind is in a constant state of flux – actually, it is nothing but a state of flux – then what is it that the replicant could be the same as?

Oh, I agree. We are waves of information, patterns that have achieved consciousness, with the pattern today composed of different atoms than it was composed of a few years ago. That’s why uploading doesn’t generate any existential difficulty for me. It would just represent transferring the pattern to a new medium. (And copying it as desired, which admittedly would be weird.)

If your point is that someday it’s very likely we will understand how the brain creates mind, I quite agree! It’s just a physical system, so we will fully explore it. The only gotcha might be if we encounter something like a Heisenberg limit or a Turing-Gödel limit of some kind… We can understand and model the system, but never fully resolve its specific values.

And that might limit something like uploading or duplicating but not necessarily creating new minds. There is a huge difference between understanding how the brain functions and replicating it digitally let alone transferring a living consciousness. I think it’s important to keep those goals separate.

Sounds like we do agree on the main point. I’d agree that there may be unknown factors that could limit what we can do. I think you see those as more likely than I do. But hey, we are talking about the unknown.

I think we’ll have a better idea in years to come, although the final answer will likely be long after our lifetimes.

Yeah, I don’t really see how we can fail to eventually understand how the brain works.

Great piece – thanks! These came to mind and might be of interest:

‘Brain-mapping projects to join forces’ http://www.nature.com/news/brain-mapping-projects-to-join-forces-1.14871

‘Deep brain stimulation’ http://www.mayoclinic.org/tests-procedures/deep-brain-stimulation/home/ovc-20156088

… which I found amazing (an acquaintance has had DBS for Parkinson’s), yet we’ve been familiar with germ theory for some time now and the battle goes on.

Interesting. Thanks!

I didn’t know the US and European brain mapping projects were planning to collaborate. The only thing that makes me a little uneasy about it is that the European one is widely regarded as defective. It sounds like there are calls for it to be overhauled: http://www.scientificamerican.com/article/why-the-human-brain-project-went-wrong-and-how-to-fix-it/

Some thoughts about your first argument can be found here: http://asifoscope.org/2015/12/11/a-walk-through-the-forest-sources-of-structure/comment-page-1/#comment-9525 and in the article that comment belongs to.

Hey nannus, just replied on your blog.
